If you have spent any time working in financial services over the last decade, AI has probably already touched your workflow: chatbots answering balance queries, fraud scoring models flagging risky transactions, OCR systems extracting invoice data, and generative AI tools summarizing earnings reports or drafting compliance memos.

Lately the whole industry is talking about “agentic AI”, and it is not always clear what is genuinely new and what is existing technology with fresh branding. According to Gartner, 33% of enterprise software applications will include agentic AI by 2028, up from less than 1% in 2024, yet most financial services leaders are still trying to understand the architectural difference between what they already have and what agentic AI actually requires.

The distinction becomes especially important in the financial services sector, where AI technology must interact with fragmented infrastructure, approval workflows, compliance controls and audit requirements rather than simply generating outputs humans act on manually.

This guide maps the three layers of AI (traditional, generative and agentic) across the workflows you actually deal with, so you can see exactly where the shift happens and why it matters.

Understanding the Three Layers of AI in Finance: Traditional, Generative and Agentic

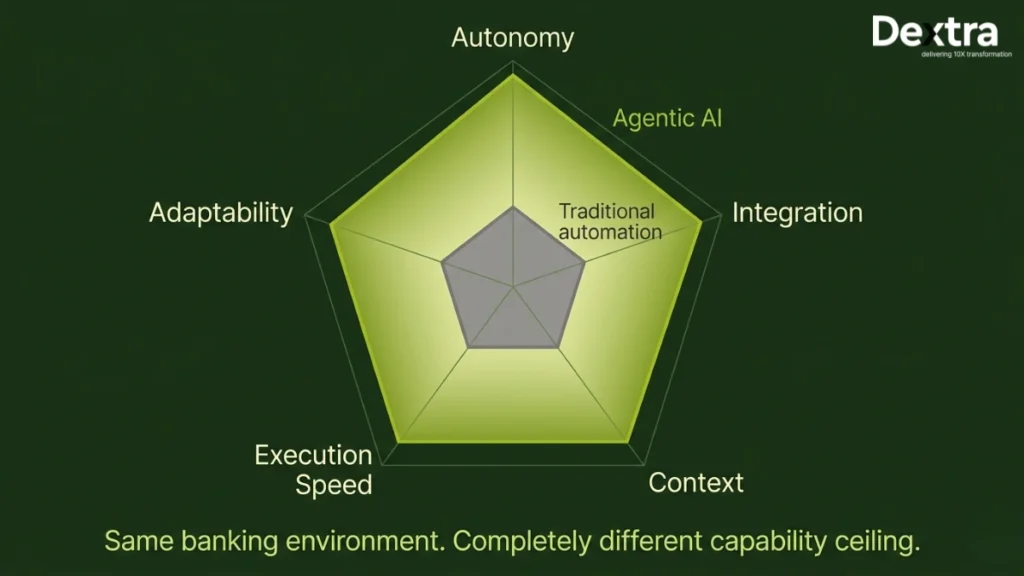

Understanding agentic AI starts with what each layer was designed to do, because the operational gap between them is wider than most discussions acknowledge.

1. Traditional AI (Rule-Based and Machine Learning)

Traditional AI systems follow predefined rules or learn patterns from historical data. They react to inputs and produce outputs within fixed parameters. They do not reason, adapt in real time, or take multi-step actions across systems.

Finance example: A fraud scoring model evaluates each transaction against learned patterns and assigns a risk score. If it exceeds a threshold, it creates an alert. It does not investigate. It does not pull context. It flags and waits.
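To make the "flags and waits" behavior concrete, here is a minimal sketch of threshold-based alerting. The `risk_score` function is a toy stand-in for a trained model, and the threshold, field names and weights are all assumptions made for illustration, not values from any real system.

```python
from dataclasses import dataclass

# Hypothetical cutoff; real deployments tune this against historical fraud data.
ALERT_THRESHOLD = 0.8


@dataclass
class Transaction:
    tx_id: str
    amount: float
    country_mismatch: bool  # card country differs from merchant country


def risk_score(tx: Transaction) -> float:
    """Toy stand-in for a trained model: returns a score between 0 and 1."""
    score = 0.6 * min(tx.amount / 10_000, 1.0)
    if tx.country_mismatch:
        score += 0.4
    return score


def flag_transactions(transactions: list[Transaction]) -> list[str]:
    """Return the IDs of transactions whose score exceeds the threshold."""
    return [tx.tx_id for tx in transactions if risk_score(tx) > ALERT_THRESHOLD]


alerts = flag_transactions([
    Transaction("tx-1", 9_000, True),
    Transaction("tx-2", 500, False),
])
```

Nothing in this code investigates the alert or gathers context. The output is a list of IDs for an analyst queue, which is exactly where a traditional system stops.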

2. Generative AI (LLMs and Content Generation)

Generative AI systems create new content based on prompts. They synthesize information across structured and unstructured data but are prompt-dependent and do not take action in external systems.

Finance example: A generative AI tool summarizes a 200-page regulatory filing into a two-page brief. Useful, but it does not check your portfolio against the new regulation, update your compliance controls, or notify affected teams. You need to read the summary and do those things manually.

3. Agentic AI (Autonomous and Goal-Directed)

Agentic AI refers to autonomous artificial intelligence systems designed to independently plan, execute and adapt complex financial tasks with minimal human oversight. These autonomous AI agents pursue goals across multiple steps, tools and systems, with human oversight applied at critical decision points rather than every step.

Finance example: An AI agent detects a new regulatory update, identifies which of your current controls are affected, drafts updated compliance procedures, routes them for review to the responsible control owners and logs the entire decision trail for audit. You review and approve; you do not initiate each step.
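The steps in that example can be sketched as a single goal-directed run that stops at the human approval point. This is a simplified illustration: the control register, owner names and `handle_regulatory_update` function are hypothetical, and a deterministic lookup stands in for the reasoning an LLM would do in a real agent.

```python
from dataclasses import dataclass, field

# Hypothetical control register: control ID -> (regulation topic, owner).
CONTROLS = {
    "CTL-01": ("kyc", "alice"),
    "CTL-02": ("liquidity", "bob"),
    "CTL-03": ("kyc", "carol"),
}


@dataclass
class AgentRun:
    trail: list[str] = field(default_factory=list)         # decision trail for audit
    pending: dict[str, str] = field(default_factory=dict)  # owner -> draft awaiting review

    def log(self, message: str) -> None:
        self.trail.append(message)


def handle_regulatory_update(topic: str, run: AgentRun) -> AgentRun:
    """Execute each step in sequence, then stop at the human approval point."""
    run.log(f"detected update on topic '{topic}'")
    affected = [cid for cid, (t, _) in CONTROLS.items() if t == topic]
    run.log(f"affected controls: {affected}")
    for cid in affected:
        owner = CONTROLS[cid][1]
        run.pending[owner] = f"DRAFT: revise {cid} for new {topic} rule"
        run.log(f"routed draft for {cid} to {owner}")
    run.log("awaiting human approval")
    return run


run = handle_regulatory_update("kyc", AgentRun())
```

The human's role appears only at the end: `run.pending` holds the drafts to review, and `run.trail` records how the agent got there.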

If you want practical, production-ready use cases, consider reading “Top 10 Agentic AI Examples and Real Use Cases in 2026”.

Why Moving From Generative AI to Agentic AI Is Operationally Difficult

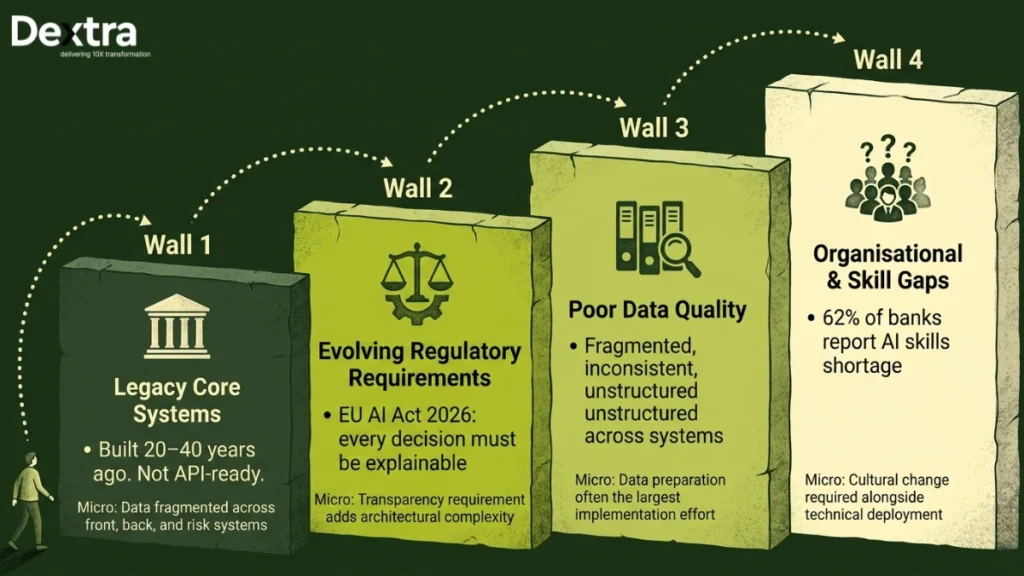

Most financial institutions already have machine learning (ML) models, traditional automation tools and generative AI copilots in production, so the challenge in moving to agentic AI is not introducing another AI layer. It is coordinating reasoning, memory, approvals, tool usage and execution across existing banking systems without compromising governance or compliance. This is where enterprise implementation architecture becomes critical.

At Dextra Labs, agentic AI systems are designed as orchestrated AI environments rather than standalone models. The focus is on integrating LLM reasoning, retrieval systems, workflow orchestration and policy-controlled execution into existing financial infrastructure while maintaining explainability and human oversight across every decision.

This is what separates AI agents in finance that deliver operational value from those that stay as isolated pilots.

How Traditional AI, Generative AI and Agentic AI Handle the Same Finance Workflows

The differences between traditional AI, generative AI and agentic AI become clear when all three layers are applied to identical financial operations and decision-making processes.

| Finance Workflow | Traditional AI | Generative AI | Agentic AI |
| --- | --- | --- | --- |
| Fraud Detection | Traditional AI scores each transaction by risk probability, flags the suspicious ones and drops an alert into the analyst queue. That is where its involvement ends. It stops at the signal and waits for a human to take it from there. | Generative AI steps in when a human asks it to, summarizing patterns across flagged transactions and drafting a case narrative from the data already in the system. It stops at the summary and hands the investigation back to the analyst. | The agentic AI system takes the alert and runs with it. It pulls the full transaction history, checks device signals, cross-references the counterparty against fraud intelligence databases, assembles the complete evidence package and delivers a disposition recommendation. By the time the analyst opens the case, the investigation is already done. |
| Compliance Monitoring | Traditional AI runs periodic checks against a predefined rule set and flags the violations it was programmed to recognize. Anything outside those parameters goes undetected, because the system can only find what it was explicitly told to look for. | When a human asks, generative AI will summarize a regulatory update and draft a compliance memo from the text provided. It does not monitor independently and it does not act unless it is prompted to do so. | The agentic AI system monitors regulatory feeds around the clock without being asked. When something changes under evolving regulations, it identifies which internal policies are affected, drafts the updated procedures, routes them to the right control owners and logs every step of the decision trail for audit, all before a human has even opened the document. |
| Accounts Payable | Traditional AI extracts invoice data via OCR, matches it against purchase orders using rigid rules and flags any mismatches for a human to resolve. It stops there and waits for someone to take the next step. | Generative AI can suggest GL codes based on historical patterns and draft vendor communications when a human asks for them. It does not move the invoice forward or process the workflow end to end on its own. | The agentic AI system handles the entire invoice lifecycle without handoffs. It extracts and validates the data; matches it against PO and contract terms; resolves exceptions that fall within defined policy tolerance; routes approvals to the right people; and schedules payment in time to capture early discount windows. This is what procure-to-pay automation looks like when it actually closes the loop. |
| Credit Underwriting | Traditional AI scores each applicant against historical credit data and produces a risk rating. A human underwriter then takes that score and drives the rest of the process from there. | Generative AI summarizes financial statements and drafts underwriting memos from the application data when prompted. The underwriter is still the one running the process, using the AI output as a starting point rather than a finished product. | The agentic AI system reviews the full application package from end to end. It verifies income against tax records, checks alternative data sources, runs multi-factor risk scoring and generates a complete approval recommendation with fully documented reasoning. Autonomous systems in lending and underwriting streamline mortgage and credit approvals by quickly extracting and verifying data from documents, so the underwriter reviews a finished package rather than assembling one. |
| Customer Service | Traditional AI routes each inquiry to the appropriate queue based on keywords and delivers scripted responses within fixed parameters. It handles routing and repetition well, but anything outside the script goes straight to a human. | Generative AI generates personalized responses and summarizes account history for the service agent handling the interaction. It makes the agent faster and better informed, but the agent is still the one resolving the issue. | The agentic AI system resolves the inquiry from start to finish without passing it off. It accesses the customer’s account data, identifies the issue, checks applicable policies, executes account management changes within defined guardrails and follows up directly with the customer. Different agents coordinate across functions so that only genuine complexity reaches a human agent. Beyond reactive resolution, agentic AI systems proactively reach out to customers with relevant insights, building satisfaction by anticipating needs before they become problems. |

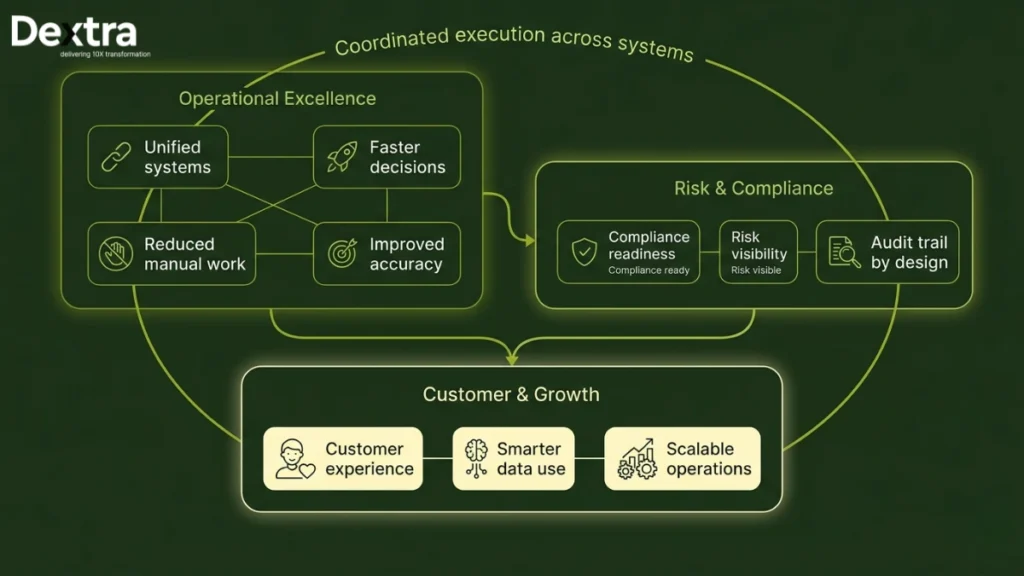

The pattern is consistent across every workflow. Traditional AI detects and flags. Generative AI summarizes and drafts. Agentic AI investigates, decides and executes. This is a structural shift, not an incremental one. Finance teams move from an execution-led model, where humans do the work and AI assists, to a supervision-led model, where AI does the work and humans govern.

According to Moody’s, 90% of AI interactions in financial services are now focused on high-value analytics, signaling this shift toward more meaningful customer engagement and service delivery. Agentic AI also automates customer onboarding, providing personalized and adaptive journeys that improve the overall customer experience in banking.

These are the kinds of agentic AI applications in finance that are moving from pilot to production across the financial services sector today.

Why Most Financial AI Systems Still Stop at Assistance

Most financial AI systems still stop at assistance because the institutions running them operate in an assistance-first model: AI generates outputs, while human teams manage investigation, approvals, escalation and execution manually across disconnected systems. The limitation is rarely the model itself. The larger challenge is operationalization.

Production-grade agentic AI requires:

- Workflow orchestration

- Structured memory management

- Real-time data access

- Approval and escalation layers

- Audit trails

- Policy enforcement

- Supervised autonomy

Without these key capabilities, most AI solutions remain isolated copilots rather than fully operational systems.

This is one of the primary areas Dextra Labs focuses on when designing enterprise AI architectures for financial institutions, building the infrastructure that allows different agents to coordinate and move from generating recommendations to executing decisions within defined governance boundaries.

Why the Shift to Agentic AI Matters Now for Financial Services

The shift to agentic AI matters now for financial services because three compounding pressures have reached a turning point simultaneously.

Reason 1: The Talent Math No Longer Works

Financial institutions face a structural workforce challenge that hiring alone cannot solve. In accounting alone, 75% of CPAs who became licensed in the 1970s and 1980s are now retirement-eligible, creating a knowledge and capacity gap arriving faster than the pipeline can replace it.

Compliance functions face the same problem. Modern financial institutions operate across 500 or more control points spanning dozens of overlapping regulations, and headcount cannot scale proportionally with that complexity.

Agentic AI is the only viable path to maintaining operational capacity without proportional headcount growth, because it handles the high-volume, repetitive tasks that consume the majority of analyst time, freeing finance teams to identify opportunities, deliver quick wins and enhance accuracy on higher-value work.

Reason 2: Generative AI Has Hit a Ceiling

Most financial institutions started deploying generative AI tools in 2023 and 2024. The results were genuinely useful but structurally limited: faster document summaries, better chatbot responses and improved memo drafting.

Finance teams quickly realized that the gap between “AI drafts a compliance memo” and “AI manages the compliance workflow” is not a prompt engineering problem. It requires a fundamentally different architecture. Generative AI produces outputs. Agentic AI closes loops.

That architectural shift is what finance functions are now investing in. It requires implementing AI agents rather than simply layering more generative AI capabilities on top of existing systems, a distinction that is increasingly clear to finance and accounting teams who have lived through both deployments.

Reason 3: Early Movers Are Already Proving the Returns

The window for being an early mover in agentic AI is still open, but it is closing faster than most finance leaders realize. The institutions that moved first are not just ahead on technology. They are ahead on cost structure, risk accuracy and operational capacity and that advantage compounds every quarter their peers spend still deliberating.

The returns are no longer theoretical. After deploying agentic AI research workflows, Moody’s reported a 30% reduction in task completion time alongside a 60% increase in research consumption among users, meaning analysts are producing more output with exactly the same headcount.

HSBC’s fraud detection agents reduced false positives by 60% while improving detection rates two to four times over their previous baseline. JPMorgan is actively deploying agents across fraud, lending and capital markets functions, building operational infrastructure that late movers will spend years trying to replicate.

The institutions that act now are not just solving today’s efficiency problem. They are building the institutional knowledge, the governance frameworks, the trained models and the integration architecture that will take competitors 18 to 24 months to catch up to.

The implementation of agentic AI in financial services can accelerate financial close activities by 30 to 50%, transforming month-end close into a faster, more value-driven process. According to Accenture, by 2026, agentic AI is expected to create scaled transformation leading to the emergence of the 10x bank model, where a single individual leads a team of AI co-workers delivering exponentially greater output.

The question for financial services leaders today is not whether agentic AI delivers returns. The question is whether your institution is building that lead or falling behind it.

What a Production-Grade Agentic AI Stack in Finance Actually Looks Like

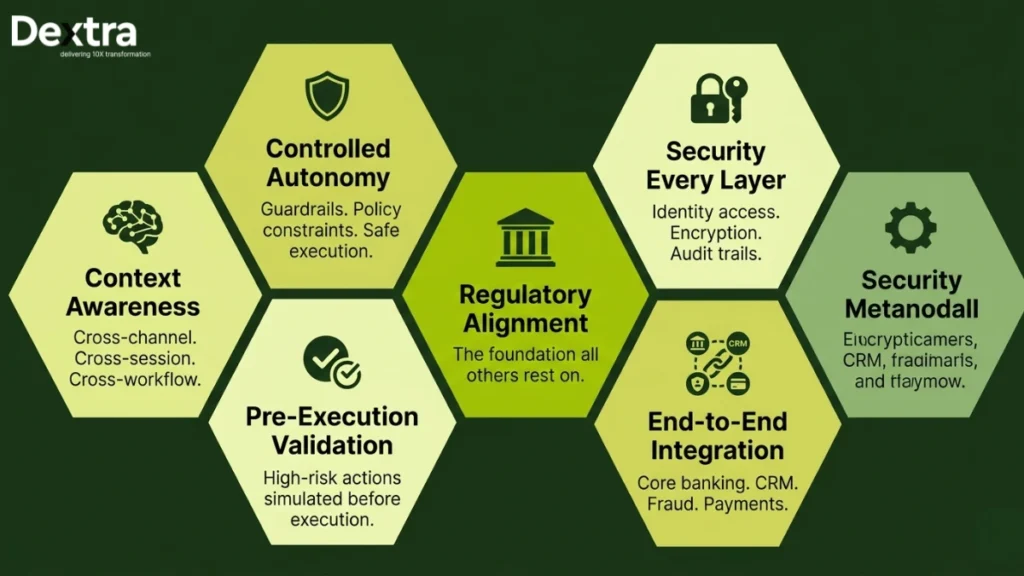

A production-grade agentic AI stack in finance is a coordinated enterprise architecture built to support reasoning, memory, governance, orchestration and autonomous execution across financial systems. The sections below walk through it layer by layer.

Layer 1: Reasoning Layer

The LLM handles planning, task decomposition and multi-step decision logic across complex workflows. Deep learning-based reasoning chains are architected here and their quality determines whether the agent produces coherent, auditable decision paths or unpredictable outputs regulators cannot accept.

Layer 2: Memory Layer

Financial AI systems require persistent memory across interactions and sessions. This layer is built on vector databases and retrieval-augmented generation pipelines that access both structured transaction data and unstructured financial documents with contextual relevance, allowing agents to maintain context across multi-day credit reviews or ongoing compliance assessments.
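As a rough illustration of the retrieval idea behind this layer, the sketch below stores notes from a multi-day credit review and returns the one most relevant to a query. It is deliberately simplified: word counts stand in for a learned embedding model, an in-memory list stands in for a vector database, and `MemoryStore` is a hypothetical name rather than any real library's API.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would use a learned embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class MemoryStore:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def search(self, query: str, k: int = 1) -> list[str]:
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


memory = MemoryStore()
memory.add("day 1: borrower submitted audited financial statements")
memory.add("day 2: collateral appraisal ordered for warehouse property")
memory.add("day 3: covenant breach found in prior loan agreement")

top = memory.search("status of collateral appraisal")
```

In a retrieval-augmented pipeline, the text returned by `search` would be placed into the model's prompt so the agent can pick up a review where it left off.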

Layer 3: Orchestration Layer

This layer coordinates workflow execution across multiple tools and other AI agents using multi-agent frameworks. Agentic AI systems require integration with modern, interconnected banking systems, highlighting real challenges for institutions relying on legacy technology.

User inputs and refined strategies are managed here across complex, multi-step financial workflows involving different agents working in parallel.
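One way to picture agents working in parallel is with Python's standard `asyncio` library. In this minimal sketch, two checks run concurrently and the orchestrator combines their results. The agent functions, the counterparty names and the 50,000 limit are assumptions for illustration, and `asyncio.sleep` stands in for real network calls.

```python
import asyncio


async def check_sanctions(counterparty: str) -> dict:
    """Hypothetical sanctions-screening agent."""
    await asyncio.sleep(0.01)  # stands in for a network call
    return {"agent": "sanctions", "clear": counterparty != "blocked-co"}


async def check_credit_limit(amount: float) -> dict:
    """Hypothetical credit-limit agent with an assumed limit of 50,000."""
    await asyncio.sleep(0.01)
    return {"agent": "credit", "clear": amount <= 50_000}


async def orchestrate(counterparty: str, amount: float) -> dict:
    """Run both agents concurrently and proceed only if every check is clear."""
    results = await asyncio.gather(
        check_sanctions(counterparty),
        check_credit_limit(amount),
    )
    return {"proceed": all(r["clear"] for r in results), "results": results}


outcome = asyncio.run(orchestrate("acme-ltd", 20_000))
```

`asyncio.gather` returns results in the order the tasks were passed in, so the orchestrator can attribute each outcome to the agent that produced it.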

Layer 4: Execution Layer

The agent interacts with live systems through APIs, reducing constant human intervention across high-volume financial workflows. Autonomous systems at this layer automate repetitive tasks such as document parsing and data verification, significantly reducing human error.

Successful integration often depends on collaboration with third-party services, as 84% of financial services leaders indicate their businesses rely on such integrations to enhance financial services products.

Organizations that implement agentic AI with cloud-native infrastructure report improved performance and reliability, enabling rapid experimentation and real-time data processing.

Layer 5: Governance Layer

Effective governance requires robust data curation, structured decision-tracking, and human-in-the-loop oversight, ensuring outputs can be interrogated and overridden when necessary.

Financial institutions must invest in explainable AI models that provide clear reasoning behind AI-generated decisions to address ethical considerations and ensure compliance with regulatory standards. To maintain compliance, financial institutions are required to document AI decision-making processes in ways that ensure interpretability without compromising operational efficiency, as regulatory bodies demand higher levels of transparency.

Infosys reports only 2% of companies have implemented adequate AI governance controls, meaning most institutions are generating decisions they cannot adequately explain or defend. Agentic AI systems can also autonomously monitor markets and detect correlations, allowing investment firms to optimize capital allocation and enhance operational efficiency in real time.

Dextra Labs also helps clients protect sensitive financial information throughout the deployment lifecycle, ensuring agentic systems handle account management and user inputs within clearly defined security boundaries. Our enterprise AI deployments are structured around these five layers to ensure agentic systems remain scalable, explainable and controllable in regulated financial environments.

What Agentic AI Doesn’t Change (And What Still Needs Humans)

Agentic AI automates execution. It doesn’t automate judgment, accountability, or data governance. Before deploying agents across finance workflows, be clear about where the boundaries sit, not as limitations to work around but as design constraints to build into the system. The three areas below are where even agentic AI still requires a human in the loop.

1. Judgment Calls on Ambiguous Situations

Agentic AI handles high-volume, rules-governed workflows well, but genuine ambiguity is a different problem. A client outside standard credit criteria, a vendor dispute layered with negotiation history, or a regulatory gray area requiring interpretation all still need human judgment. The best agentic systems recognize where their authority should stop and escalate accordingly.
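An escalation boundary like this can be written as an explicit routing rule. The sketch below is one possible shape, not a prescribed design: the amount limit, the confidence floor and the `Case` fields are hypothetical, and a real deployment would take them from the institution's own policy.

```python
from dataclasses import dataclass

# Hypothetical policy limits; in practice these come from the institution's policy.
MAX_AUTO_AMOUNT = 25_000
MIN_CONFIDENCE = 0.9


@dataclass
class Case:
    case_id: str
    amount: float
    confidence: float               # agent's confidence in its own recommendation
    within_standard_criteria: bool  # False for the ambiguous cases described above


def route(case: Case) -> str:
    """Return 'auto-resolve' or an escalation message listing every reason."""
    reasons = []
    if not case.within_standard_criteria:
        reasons.append("outside standard criteria")
    if case.amount > MAX_AUTO_AMOUNT:
        reasons.append("amount above limit")
    if case.confidence < MIN_CONFIDENCE:
        reasons.append("low confidence")
    if reasons:
        return "escalate: " + ", ".join(reasons)
    return "auto-resolve"


routine = route(Case("c-1", 4_000, 0.97, True))
ambiguous = route(Case("c-2", 40_000, 0.97, False))
```

Listing every reason, rather than stopping at the first, gives the human reviewer the full picture of why the case landed on their desk.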

2. Accountability and Regulatory Liability

When an agent makes a decision that results in a fair lending complaint, the institution owns it, not the model, not the vendor. Agents execute within guardrails, but humans set those guardrails and are responsible for the outcomes. Governance frameworks must evolve as AI assumes a more autonomous role in credit and risk decisions.

3. Data Quality as a Prerequisite, Not a Parallel Workstream

Agentic AI amplifies whatever data quality the institution brings to the table. Clean, well-governed data produces reliable outputs. Fragmented, inconsistent data produces confident but wrong outputs at scale, which is worse than no automation at all. Fix the data foundation first.

Where to Start with Agentic AI in Finance?

Starting with agentic AI in finance follows three consistent principles across successful deployments.

1. Start with One High-Volume, Rules-Governed Workflow

Do not try to transform everything simultaneously. Pick the workflow where your finance teams spend the most time on repeatable investigation work, such as fraud alert triage, invoice matching or compliance monitoring.

Build one agent, prove the ROI against a documented baseline, then expand. Focused pilot projects that demonstrate real value in a narrow scope consistently outperform broad transformation initiatives that spread effort too thinly to show results.

2. Deploy in Supervised Mode First

Let the agent process workflows but require human approval on every action for the first 30 to 60 days. You need to measure accuracy, false positive rates and processing time against your manual baseline. Increase autonomy gradually as confidence scores stabilize and performance patterns become clear.
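The measurement step can be as simple as comparing what the agent proposed with what the human reviewer decided. The sketch below computes accuracy and false positive rate from such pairs. The record format and function name are assumptions for illustration, and the sample data is invented purely to show the arithmetic.

```python
def supervised_metrics(records: list[tuple[bool, bool]]) -> dict[str, float]:
    """Each record is (agent_flagged, human_confirmed_issue) from the review period."""
    tp = sum(1 for agent, human in records if agent and human)
    fp = sum(1 for agent, human in records if agent and not human)
    tn = sum(1 for agent, human in records if not agent and not human)
    total = len(records)
    accuracy = (tp + tn) / total if total else 0.0
    # False positive rate: share of genuinely clean cases the agent flagged anyway.
    false_positive_rate = fp / (fp + tn) if (fp + tn) else 0.0
    return {"accuracy": accuracy, "false_positive_rate": false_positive_rate}


# Invented sample: five cases reviewed during supervised mode.
records = [(True, True), (True, False), (False, False), (False, False), (False, True)]
metrics = supervised_metrics(records)
```

Here the agent agreed with the reviewer on three of five cases (accuracy 0.6) and wrongly flagged one of three clean cases. Tracking these numbers week over week against the manual baseline is what justifies, or delays, widening the agent's autonomy.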

Scaling AI too fast before the governance layer is validated is one of the most common reasons agentic AI deployments produce unexpected outcomes in regulated environments.

3. Invest in the Audit Layer From Day One

Regulators will ask how the agent made its decisions. Build explainability and decision logging into the architecture from the start rather than retrofitting it after the system is in production.

Retrofitting audit trail automation is significantly more expensive and disruptive than building it correctly at the outset and it is the single most common gap found when financial institutions face regulatory examination of their AI solutions.
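One common technique for making a decision log tamper-evident is to chain entries together with hashes, so that altering an earlier record invalidates the ones after it. The sketch below shows that idea with Python's standard `hashlib` and `json` modules. It is an illustrative assumption about how such a log could be built, not a description of any specific product, and it omits timestamps and storage to stay short.

```python
import hashlib
import json


class AuditLog:
    """Append-only decision log in which each entry includes the previous entry's hash."""

    GENESIS = "0" * 64  # placeholder hash for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, action: str, reasoning: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"action": action, "reasoning": reasoning, "prev_hash": prev_hash}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and confirm the chain is unbroken."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            body = {key: entry[key] for key in ("action", "reasoning", "prev_hash")}
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("flag invoice INV-1", "amount exceeds PO by more than tolerance")
log.record("route to approver", "exception outside auto-resolve policy")
intact = log.verify()
```

Recording the reasoning alongside each action is what lets an examiner reconstruct why the agent acted, and `verify` gives a quick check that the log has not been edited after the fact.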

For most financial institutions, the challenge is not proving that agentic AI can work. The challenge is deploying it reliably across fragmented infrastructure, governance processes and high-risk operational environments.

This is why enterprise deployments increasingly focus on supervised execution models first, where agents operate within clearly defined policies, escalation paths and approval boundaries before autonomy is expanded gradually.

At Dextra Labs, this phased deployment approach is commonly used across enterprise AI implementations to help organizations move from isolated pilots toward scalable, production-grade AI systems with built-in auditability, orchestration and operational control.

Conclusion

The shift from traditional AI to agentic AI in finance is not about replacing what already works. Fraud scoring rules still catch known patterns. ML models still assess lending and underwriting risk. Generative AI tools still accelerate document analysis and reporting. What agentic AI adds is the orchestration layer that was always missing: reasoning, memory, tool execution and governance working together across systems continuously, without waiting for a human to initiate each step.

The institutions that gain the most from this shift are those that treat agentic AI as long-term operational infrastructure, not a technology experiment. That means investing in the five layers that make autonomous execution reliable in regulated environments: reasoning, memory, orchestration, execution and governance. Without all five, agents remain isolated copilots rather than production-grade systems.

To explore how these architectures can be designed for your specific regulatory environment, connect with Dextra Labs to evaluate scalable agentic AI systems built for explainability, governance and operational reliability.

FAQs:

Q1. What makes Dextra Labs’ approach to agentic AI different from just adding another AI tool?

Dextra Labs designs agentic AI as orchestrated environments, not standalone models. The focus is integrating LLM reasoning, retrieval systems, workflow orchestration, and policy-controlled execution into your existing financial infrastructure while keeping every decision explainable and auditable.

Q2. How does Dextra Labs handle compliance and regulatory requirements during deployment?

Explainability and decision logging are built into the architecture from day one, not retrofitted later. Every agent action is tracked, structured for audit, and designed to meet regulatory examination standards without compromising operational efficiency.

Q3. Can Dextra Labs work with our legacy banking systems?

Yes. Enterprise deployments are specifically structured around integrating with fragmented legacy infrastructure, coordinating multiple agents across disconnected systems, approval workflows, and compliance controls, exactly where most AI implementations break down.

Q6. What if our data quality isn’t perfect? Can we still deploy agentic AI?

Data quality is a prerequisite, not a parallel workstream. Dextra Labs flags this upfront: agentic AI amplifies whatever data quality you bring. Fragmented data produces confident but wrong outputs at scale. Part of the engagement involves ensuring your data foundation is ready before autonomous execution begins.

Q7. Honestly, how hard is it to move from generative AI to agentic AI?

Harder than most vendors admit. The model is rarely the problem; the challenge is coordinating reasoning, memory, approvals, tool usage, and execution across systems you already have, without breaking compliance. That orchestration layer is exactly what Dextra Labs builds.

Q8. How does Dextra Labs ensure sensitive financial data stays protected?

Dextra Labs builds clearly defined security boundaries throughout the deployment lifecycle, ensuring agentic systems handle account management and user inputs within governed, policy-controlled parameters at every layer.