Industry: Healthcare / Clinical Trials | Region: London, United Kingdom | Client Type: Enterprise

About the Client

The client is a London-based health technology company that provides operational infrastructure for clinical trials. They work with pharmaceutical companies and NHS-partnered research institutions to manage the operational complexity of running multi-site trials across the UK and Europe. Their platform handles everything from patient recruitment tracking to regulatory submissions.

At the time they came to Dextra Labs, they were managing adverse event reporting for twelve concurrent studies, ranging from Phase II oncology trials to Phase III cardiovascular studies. The regulatory burden was enormous and growing.

The Problem

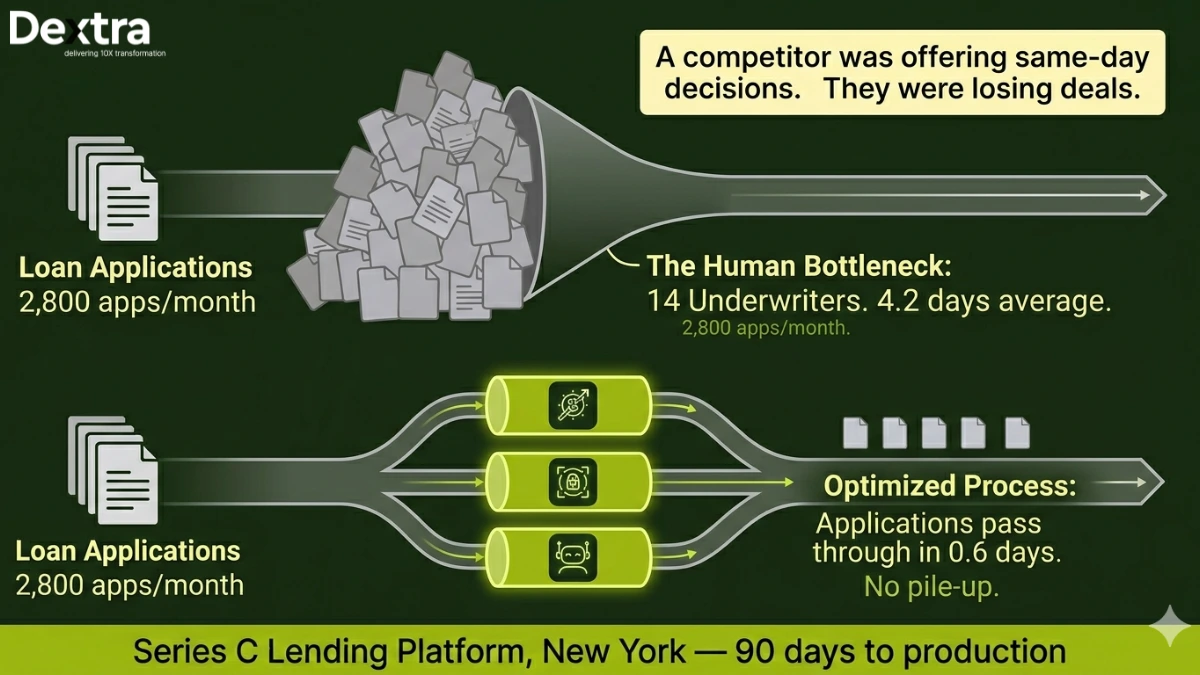

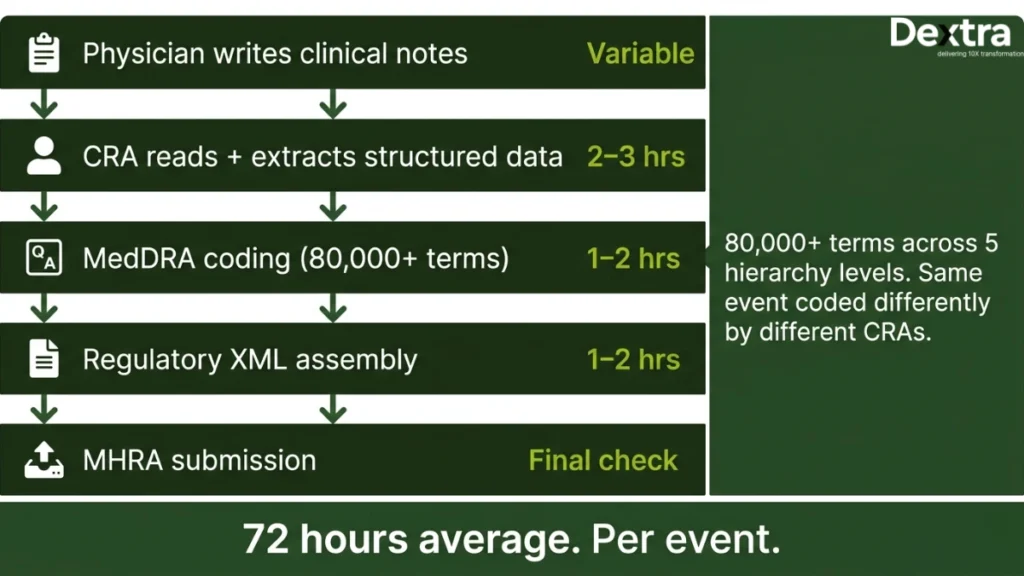

In clinical trials, when a participant experiences a negative health event, it must be reported to the regulatory authority (in the UK, the MHRA). This process is called adverse event reporting, and it is one of the most labor-intensive, error-sensitive workflows in the industry.

Here’s what a single adverse event report looked like at this company. A physician at a trial site writes clinical notes about the event. These notes might be typed, dictated, or handwritten. A Clinical Research Associate (CRA) then reads the notes and extracts structured data: what happened, when, what medications the patient was on, the severity, the suspected relationship to the study drug. The CRA maps these details to MedDRA codes, the standardized medical terminology that regulators require. Then someone assembles the regulatory submission in the correct XML format and files it.

The entire process took an average of 72 hours per event. The client had a team of contractors dedicated solely to this work, at a cost of £320,000 per year. Quality was also inconsistent: MedDRA coding has over 80,000 terms across five hierarchy levels, and even experienced CRAs would code the same event differently depending on how they interpreted the physician’s language.

What Dextralabs Built

We built a two-agent pipeline that takes a physician’s clinical notes as input and produces a review-ready MHRA submission as output.

Agent 1: Clinical Extraction AI Agent

This AI agent reads clinical notes and extracts structured medical information. That sounds simple until you’ve seen actual clinical notes. Physicians use abbreviations that aren’t in any standard dictionary (“pt c/o N+V x3d” means “patient complains of nausea and vomiting for three days”). They use negation in subtle ways (“no evidence of cardiac involvement” is very different from “evidence of cardiac involvement”). Dictated notes contain transcription errors, and handwritten notes are a challenge of their own.
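The abbreviation and negation problems above can be illustrated with a small rule-based sketch. It is not the fine-tuned model described in this case study: the abbreviation table, the trigger list and the five-word look-back window are all hypothetical, loosely modelled on the NegEx idea of scanning for negation cues shortly before a finding.

```python
import re

# Hypothetical shorthand lexicon; the client's NHS-specific lexicon is not public.
ABBREVIATIONS = {
    "pt": "patient",
    "c/o": "complains of",
    "n+v": "nausea and vomiting",
}

# Illustrative subset of NegEx-style pre-negation triggers (not the full NegEx list).
NEGATION_TRIGGERS = ("no evidence of", "denies", "no", "without")


def expand_abbreviations(note: str) -> str:
    """Replace known shorthand tokens, and rewrite 'x<N>d' as 'for <N> days'."""
    tokens = []
    for token in note.split():
        lowered = token.lower()
        duration = re.fullmatch(r"x(\d+)d", lowered)
        if duration:
            tokens.append(f"for {duration.group(1)} days")
        else:
            tokens.append(ABBREVIATIONS.get(lowered, token))
    return " ".join(tokens)


def is_negated(sentence: str, finding: str, window: int = 5) -> bool:
    """True if a negation trigger occurs within `window` words before `finding`."""
    text = sentence.lower()
    idx = text.find(finding.lower())
    if idx == -1:
        return False
    context = " ".join(text[:idx].split()[-window:])
    return any(re.search(rf"\b{re.escape(t)}\b", context) for t in NEGATION_TRIGGERS)


print(expand_abbreviations("pt c/o N+V x3d"))
print(is_negated("No evidence of cardiac involvement.", "cardiac involvement"))
print(is_negated("Evidence of cardiac involvement on ECG.", "cardiac involvement"))
```

Rules like these break down quickly on real notes (scope terminators such as "but", post-negation such as "ruled out", dictation typos), which is the argument for a trained model.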

We fine-tuned a medical NER model on 12,000 annotated clinical notes from the client’s historical database. The model was specifically trained to handle NHS-specific abbreviations, dictation artifacts and the particular negation patterns that appear in British clinical writing. It extracts entities like symptoms, diagnoses, medications, dosages, temporal relationships and severity assessments.
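Fine-tuning an NER model starts with turning annotations into character-offset spans. The sketch below produces `(text, {"entities": [(start, end, label)]})` pairs, the dictionary shape that spaCy's `Example.from_dict` accepts for entity annotations. The label names and the `to_training_example` helper are hypothetical; the case study does not describe the client's annotation format.

```python
from typing import Dict, List, Tuple

# Hypothetical label set mirroring the entity types listed above.
LABELS = {"SYMPTOM", "DIAGNOSIS", "MEDICATION", "DOSAGE", "TEMPORAL", "SEVERITY"}

Span = Tuple[int, int, str]  # (start_char, end_char, label)


def to_training_example(
    text: str, annotations: List[Tuple[str, str]]
) -> Tuple[str, Dict[str, List[Span]]]:
    """Convert (surface_text, label) pairs, given in reading order, into offset spans.

    Each mention is searched for after the previous one, so repeated mentions
    receive distinct offsets. Raises ValueError on unknown labels or missing text.
    """
    spans: List[Span] = []
    cursor = 0
    for surface, label in annotations:
        if label not in LABELS:
            raise ValueError(f"unknown label: {label}")
        start = text.find(surface, cursor)
        if start == -1:
            raise ValueError(f"mention not found after offset {cursor}: {surface!r}")
        end = start + len(surface)
        spans.append((start, end, label))
        cursor = end
    return text, {"entities": spans}


note = "Severe headache 2 days after starting paracetamol 1 g."
example = to_training_example(
    note,
    [("Severe", "SEVERITY"), ("headache", "SYMPTOM"), ("2 days", "TEMPORAL"),
     ("paracetamol", "MEDICATION"), ("1 g", "DOSAGE")],
)
print(example)
```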

Agent 2: Compliance and Submission AI Agent

The second AI agent takes the extracted entities and does three things. First, it maps them to the correct MedDRA preferred terms, using a combination of semantic similarity matching and hierarchical validation (a term must be consistent across the SOC, HLGT, HLT, PT and LLT levels). Second, it cross-references the extracted data against the specific study protocol, loaded via a RAG pipeline, to determine relatedness to the study drug and to classify the event as expected or unexpected. Third, it generates the regulatory submission in the XML format that the MHRA requires.
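The two-step MedDRA mapping (a similarity match, then a hierarchy check) can be sketched as follows. Character-trigram cosine similarity stands in for whatever semantic similarity model the system actually uses, and the hierarchy fragment is illustrative only, not checked against the licensed MedDRA dictionary.

```python
from collections import Counter
from math import sqrt
from typing import Dict, List, Tuple

Term = Tuple[str, str]  # (hierarchy level, term name)

# Illustrative fragment, not verified against licensed MedDRA. Real MedDRA is
# multiaxial (a PT can sit under several HLTs/SOCs); here each term has one parent.
PARENT: Dict[Term, Term] = {
    ("LLT", "Feeling sick"): ("PT", "Nausea"),
    ("LLT", "Nausea"): ("PT", "Nausea"),
    ("PT", "Nausea"): ("HLT", "Nausea and vomiting symptoms"),
    ("HLT", "Nausea and vomiting symptoms"): ("HLGT", "Gastrointestinal signs and symptoms"),
    ("HLGT", "Gastrointestinal signs and symptoms"): ("SOC", "Gastrointestinal disorders"),
}
LEVELS = ["LLT", "PT", "HLT", "HLGT", "SOC"]


def trigrams(text: str) -> Counter:
    """Character trigrams: a cheap stand-in for learned semantic embeddings."""
    padded = f"  {text.lower()} "
    return Counter(padded[i:i + 3] for i in range(len(padded) - 2))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = sqrt(sum(v * v for v in a.values())) * sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def best_llt(verbatim: str) -> str:
    """Pick the LLT whose trigram profile is closest to the extracted verbatim term."""
    llts = [name for level, name in PARENT if level == "LLT"]
    return max(llts, key=lambda name: cosine(trigrams(verbatim), trigrams(name)))


def hierarchy_path(llt: str) -> List[Term]:
    """Follow parent links from an LLT upwards until no parent is known."""
    path: List[Term] = [("LLT", llt)]
    while path[-1] in PARENT:
        path.append(PARENT[path[-1]])
    return path


def is_consistent(path: List[Term]) -> bool:
    """Valid only if the path visits LLT, PT, HLT, HLGT and SOC in that order."""
    return [level for level, _ in path] == LEVELS


candidate = best_llt("nausea")
print(candidate, is_consistent(hierarchy_path(candidate)))
```

In this sketch, a candidate that fails the hierarchy check could be routed to a human reviewer rather than silently accepted.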

The critical design choice was the confidence scoring system. Every field in the extracted report has a confidence score. Fields above 0.85 are considered reliable. Fields between 0.65 and 0.85 are highlighted in amber: the system is fairly confident but wants a human to verify them. Fields below 0.65 are flagged in red. The clinical reviewer sees a pre-populated form in which most fields are already filled in, so they can focus on the uncertain fields rather than reviewing everything from scratch.
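The triage rule amounts to a threshold function over per-field scores. A minimal sketch, assuming scores of exactly 0.85 and exactly 0.65 both count as amber (the case study does not say how the boundaries are handled); the field names in the example are hypothetical.

```python
from typing import Dict

RELIABLE = 0.85  # above this: accepted without highlighting
REVIEW = 0.65    # from here up to RELIABLE: amber; below: red


def flag(score: float) -> str:
    """Map a confidence score in [0, 1] to a review flag."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if score > RELIABLE:
        return "green"
    if score >= REVIEW:
        return "amber"
    return "red"


def review_queue(fields: Dict[str, float]) -> Dict[str, str]:
    """Return only the fields a reviewer needs to check, with their flag."""
    queue = {}
    for name, score in fields.items():
        status = flag(score)
        if status != "green":
            queue[name] = status
    return queue


print(review_queue({"onset_date": 0.97, "meddra_pt": 0.78, "causality": 0.52}))
```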

Architecture and Technical Decisions

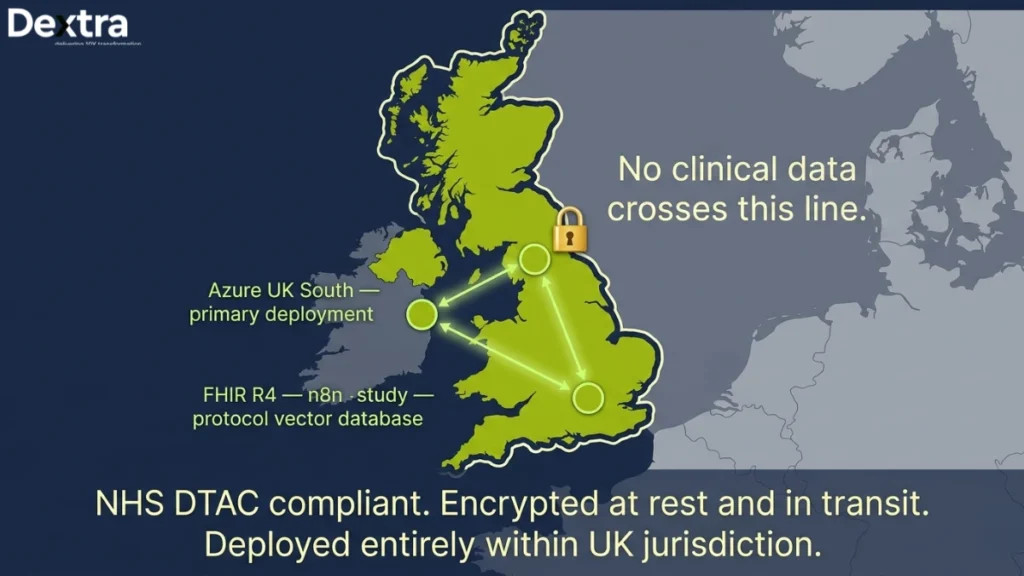

Data sovereignty was non-negotiable. The system is deployed entirely within Azure UK South, and no clinical data leaves the UK boundary. All data is encrypted at rest and in transit, and the deployment passed the NHS DTAC (Digital Technology Assessment Criteria) review.
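The case study does not say how the UK-only constraint was enforced. One common safeguard is a pre-deployment check that every resource sits in the approved region. The sketch assumes a hypothetical list-of-dicts manifest, not any real Azure SDK call; `uksouth` is Azure's identifier for the UK South region.

```python
from typing import Dict, List

ALLOWED_REGIONS = {"uksouth"}  # Azure region identifier for UK South


def residency_violations(resources: List[Dict[str, str]]) -> List[str]:
    """Return names of resources whose location is outside the allowed regions.

    Locations are normalised so that display names like "UK South" also match.
    """
    return [
        r["name"]
        for r in resources
        if r.get("location", "").replace(" ", "").lower() not in ALLOWED_REGIONS
    ]


manifest = [  # hypothetical manifest entries
    {"name": "weaviate-vectors", "location": "uksouth"},
    {"name": "ner-service", "location": "UK South"},
    {"name": "backup-store", "location": "ukwest"},
]
print(residency_violations(manifest))
```

A check like this would typically run in CI and fail the deployment if the returned list is non-empty.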

We chose Claude 3.5 Sonnet as the primary reasoning model because of its strong performance on medical text comprehension. The fine-tuned NER model runs on spaCy with biomedical extensions. The vector database for the RAG pipeline over study protocols is Weaviate, hosted within the same Azure region. Workflow orchestration runs through n8n, which the client’s team was already familiar with.
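Retrieval over study protocols follows the usual RAG pattern: embed the question, rank protocol chunks by vector similarity, and pass the top matches to the reasoning model as context. The sketch uses a toy bag-of-words vector and in-memory cosine ranking in place of Weaviate and a real embedding model; the protocol text is invented for illustration.

```python
import re
from collections import Counter
from math import sqrt
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy bag-of-words vector; a real pipeline would use a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = sqrt(sum(v * v for v in a.values())) * sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: List[str], k: int = 2) -> List[Tuple[float, str]]:
    """Return the k protocol chunks most similar to the query, best first."""
    q = embed(query)
    scored = [(cosine(q, embed(chunk)), chunk) for chunk in chunks]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)[:k]


protocol_chunks = [  # invented protocol excerpts
    "Expected adverse reactions: nausea, vomiting and fatigue are listed in the Investigator's Brochure.",
    "Inclusion criteria: adults aged 18 to 75 with confirmed diagnosis.",
    "Dosing: study drug administered orally once daily.",
]
for score, chunk in retrieve("is nausea an expected adverse reaction", protocol_chunks):
    print(f"{score:.2f}  {chunk}")
```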

Tech Stack

Claude 3.5 Sonnet · spaCy (Biomedical) · MedDRA API · Weaviate · Azure UK South · FHIR R4 · n8n · Python

Results

| Metric | Result |
|---|---|
| Adverse event reporting cycle | 72h → 45 min |
| CRA time reclaimed for high-value work | 60% |
| Contractor cost eliminated | £320K/yr |
| MedDRA coding accuracy | 97.1% |

The average adverse event report now takes 45 minutes from clinical note submission to review-ready status. The 45 minutes includes the agent’s processing time (roughly 8–12 minutes depending on note complexity) plus the clinical reviewer’s time checking the flagged fields.
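Taking the reported averages at face value, the figures imply roughly the following reviewer share and overall speed-up (the 8 to 12 minute processing time is itself approximate):

```latex
t_{\text{review}} \approx 45 - (8 \text{ to } 12) = 33 \text{ to } 37 \ \text{min},
\qquad
\frac{72 \times 60 \ \text{min}}{45 \ \text{min}} = \frac{4320}{45} = 96
```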

The contractor team was fully decommissioned within four months. The client’s in-house CRAs now spend roughly 40% of their time on AE review (down from nearly 100%) and the rest on higher-value clinical monitoring activities. MedDRA coding accuracy, validated against a panel of three senior medical coders, is 97.1%, consistently higher than the 89–93% accuracy the contractor team had been producing.
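The case study does not describe how the 97.1% figure was computed against the three-coder panel. One plausible scheme, shown here purely as an assumption, scores each agent-assigned preferred term against the panel's majority vote and sets aside cases where the panel has no majority. The example terms are hypothetical.

```python
from collections import Counter
from typing import List, Optional, Sequence


def consensus(labels: Sequence[str]) -> Optional[str]:
    """Majority label among the panel, or None if no label has a strict majority."""
    label, count = Counter(labels).most_common(1)[0]
    return label if count > len(labels) / 2 else None


def accuracy(predictions: List[str], panel: List[Sequence[str]]) -> float:
    """Share of cases with a panel majority where the prediction matches it."""
    pairs = [(pred, consensus(votes)) for pred, votes in zip(predictions, panel)]
    adjudicated = [(pred, ref) for pred, ref in pairs if ref is not None]
    if not adjudicated:
        raise ValueError("no cases with a panel majority")
    return sum(pred == ref for pred, ref in adjudicated) / len(adjudicated)


predictions = ["Nausea", "Headache", "Vomiting", "Dizziness"]
panel_votes = [
    ("Nausea", "Nausea", "Vomiting"),
    ("Headache", "Headache", "Headache"),
    ("Nausea", "Nausea", "Nausea"),
    ("Vertigo", "Dizziness", "Syncope"),  # no majority: excluded from scoring
]
print(f"{accuracy(predictions, panel_votes):.3f}")
```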

“The confidence scoring changed everything. Instead of reviewing every submission line by line, our team now focuses only on the cases the agent is uncertain about. We’ve reclaimed 60% of our CRAs’ time.”

— Director of Clinical Operations, Health-Tech Company, London

Engagement Timeline

As an AI agent development company in the USA, we delivered the engagement in 18 weeks, including a 4-week NHS compliance review that ran in parallel with development.

Weeks 1–3: discovery, data audit and annotation pipeline setup.

Weeks 4–8: NER model fine-tuning and validation.

Weeks 6–12: Compliance Agent development and MedDRA mapping optimization.

Weeks 12–14: integration testing with historical AE cases.

Weeks 14–18: production rollout, parallel running and compliance sign-off.

Working in healthcare, pharma, or regulated industries?

Let’s discuss how AI agents can automate your compliance-heavy workflows without compromising data sovereignty.
