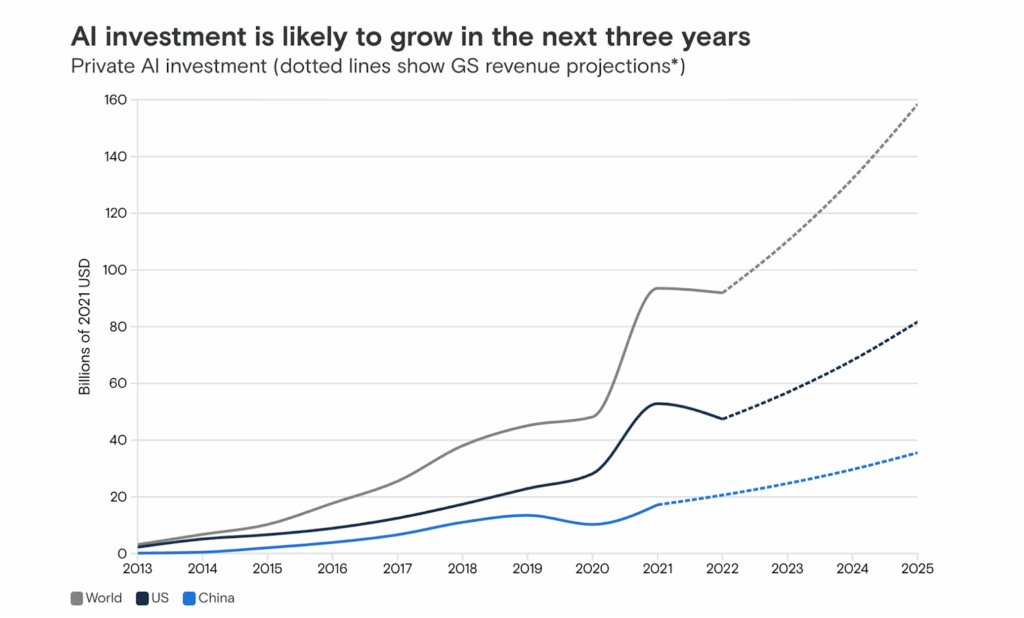

Why is every CIO recalculating AI ROI in 2025? According to Goldman Sachs, global enterprise spending on AI infrastructure is projected to exceed $200 billion in 2025, yet fewer than 35% of organizations can accurately measure their AI ROI. It’s one of the most significant disconnects in modern technology leadership: massive investment, shallow returns, and increasing pressure from boards and CFOs to prove that AI is more than a science experiment.

The conversation has changed. We’ve moved from proof of concept to proof of profitability.

The hype cycle has quieted; enterprise expectations have not. CIOs and CTOs are discovering that while AI’s capabilities grow exponentially, its cost curve is rising even faster, driven by compute-intensive models, multi-agent architectures, and relentless demand for inference. Meanwhile, CFOs want clearer answers: What is the AI return on investment? What is the payback period? What is the cost per decision?

And that’s where the shift begins. At Dextralabs, we describe the new mandate clearly:

“True AI ROI isn’t just about cutting costs; it’s about optimizing reasoning efficiency, scaling sustainably, and aligning every model decision with measurable business outcomes.” – CEO, Dextralabs

Welcome to the new era of AI ROI economics, where the leaders are the ones who can master infrastructure strategy, custom silicon, and reasoning efficiency, not merely deploy more models.

Why Every CIO Is Recalculating AI ROI in 2025

The inflection point is here. Enterprises that once relied on public cloud GPU instances “just to get started” are now facing runaway compute bills, unpredictable inference costs, and performance ceilings that strain operations.

As enterprise AI adoption accelerates across business units, cost pressures are intensifying and infrastructure demands are rising.

The drivers are clear:

1. Larger models = more compute

Models with billions of parameters amplify both training and inference costs.

2. Multi-agent and chain-of-thought reasoning = higher token consumption

Better results often require deeper reasoning, but that reasoning comes at a price.

3. Enterprises scaling AI across multiple business units

What began as a pilot in customer service is now embedded across operations, HR, finance, risk, engineering, and product.

All of this creates a new kind of pressure. AI is no longer an innovation initiative; it’s an ongoing cost center. And without an ROI framework, it becomes difficult to justify additional budget.

This is the central tension of 2025. AI power is rising, but so are the costs of sustaining it. The organizations that win won’t necessarily be the ones spending the most. They’ll be the ones calculating the smartest, making better decisions about architecture, silicon, cloud contracts, and model design.

What Is AI ROI, and Why Is It Hard to Measure?

Every enterprise leader talks about AI return on investment, but very few can define it precisely.

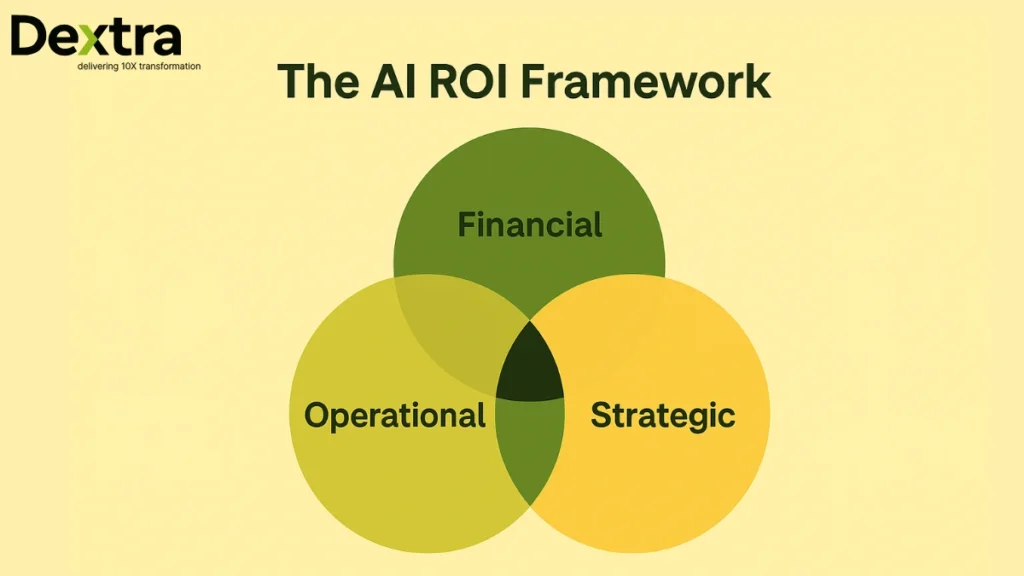

AI ROI (AI return on investment) is the balance of financial, operational, and strategic outcomes produced by AI relative to the total cost of building, training, deploying, and scaling those systems.

But unlike traditional IT projects, AI’s value is often diffuse, cross-functional, and compounding, which makes it notoriously difficult to measure.
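In its simplest financial form, that definition reduces to the classic ROI ratio. The sketch below is a minimal illustration, assuming every benefit has already been converted into dollars (which is the hard part); the cost categories, the `ai_roi` function, and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AICosts:
    """Total cost of an AI initiative over the measurement period (hypothetical categories)."""
    build: float
    training: float
    deployment: float
    scaling: float

    def total(self) -> float:
        return self.build + self.training + self.deployment + self.scaling


def ai_roi(quantified_benefit: float, costs: AICosts) -> float:
    """Classic ROI ratio: (benefit - total cost) / total cost."""
    total = costs.total()
    if total <= 0:
        raise ValueError("total cost must be positive")
    return (quantified_benefit - total) / total


# Hypothetical figures: $1.0M total cost, $1.3M of benefits already expressed in dollars.
costs = AICosts(build=400_000, training=250_000, deployment=150_000, scaling=200_000)
print(f"ROI: {ai_roi(1_300_000, costs):.0%}")  # → ROI: 30%
```

The arithmetic is trivial; what makes AI ROI difficult is filling in `quantified_benefit` for operational and strategic gains.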

The Three Layers of AI ROI:

1. Financial ROI

This is the most straightforward metric: increased revenue, reduced costs, or lower total cost of ownership.

Examples: automation savings, reduced call center load, faster procurement cycles.

2. Operational ROI

This includes efficiency, accuracy, error reduction, decision speed, and workflow enhancement.

Often, this is where AI shines, yet these benefits rarely show up immediately in dollar terms.

3. Strategic ROI

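This captures longer-term advantages such as innovation capacity, improved compliance and risk posture, and competitive differentiation. A clear AI investment strategy helps organizations prioritize the high-impact use cases that produce these gains and avoid scattered deployments.

For example, a bank might reduce fraud detection latency from minutes to seconds.

These are real, material benefits, but they are difficult to quantify in isolation.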

Why Measuring AI ROI Is So Hard

Let’s have a look:

A. Intangible benefits are hard to value: What is the dollar value of better decisions? Faster time-to-market? Improved employee productivity?

B. AI rarely works alone: AI’s impact is tied to other systems, processes, and organizational changes. Is that improvement from AI or from cloud migration? Or workflow redesign?

C. Benefits compound over time: AI systems improve with usage, data, and feedback loops, unlike linear IT systems.

Many organizations also lack a basic measurement foundation. Wavestone’s 2025 Global AI Survey found that 46% of enterprises do not have any structured AI ROI measurement framework in place, which makes it even harder for CIOs and CFOs to map AI performance to business impact.

The Shifting Economics of AI: Cloud Costs, Compute Bottlenecks, and the New Reality

The economics of AI have fundamentally changed.

A few years ago, the cloud was the obvious choice for AI experimentation: fast provisioning, minimal capital investment, and instant access to GPUs. Today, the story is different. The trends below reflect a fundamental shift in AI infrastructure economics, one that is reshaping how organizations budget for compute, storage, and inference.

Cloud AI Costs Are Rising Faster Than AI Value

The largest line items are predictable:

- GPU compute

- High-memory instances

- Long-running inference workloads

- Data movement across cloud regions

- Energy and cooling (passed through to customers)

According to industry research, inference costs have grown as much as 300% year-over-year for enterprise-scale LLM deployments.

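Why? Because inference is a recurring cost. A model is trained once or periodically, but it serves requests every day it is in production, so over its lifetime inference runs far more often than training.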
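A back-of-the-envelope calculation makes the point. This sketch compares a one-time training bill with per-request inference spend over a year of service; the `lifetime_costs` function, the prices, and the traffic figures are hypothetical, and it ignores periodic retraining.

```python
def lifetime_costs(training_cost: float,
                   cost_per_1k_requests: float,
                   requests_per_day: int,
                   days_in_service: int) -> tuple[float, float]:
    """Return (training, inference) spend over a model's service life.

    Training is treated as a one-time cost; inference is billed per request.
    """
    inference_cost = cost_per_1k_requests * requests_per_day / 1_000 * days_in_service
    return training_cost, inference_cost


# Hypothetical figures for illustration only.
train, infer = lifetime_costs(training_cost=500_000,
                              cost_per_1k_requests=2.0,
                              requests_per_day=5_000_000,
                              days_in_service=365)
print(f"training ${train:,.0f}, inference ${infer:,.0f}, "
      f"inference share {infer / (train + infer):.0%}")
# → training $500,000, inference $3,650,000, inference share 88%
```

Because inference spend scales linearly with traffic and time in service, it keeps growing long after the training bill is paid.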

The Compute Tension

Understanding AI infrastructure economics is now essential for balancing accuracy, reasoning depth, and total cost of ownership. Two things can be true at once:

- Larger models deliver better accuracy.

- Larger models deliver worse economics.

As enterprises shift toward reasoning-heavy applications, multi-step workflows, agentic orchestration, and knowledge-intensive tasks, the cost per output increases.

This is the paradox:

Enterprises want deeper reasoning, but deeper reasoning increases token usage, compute demands, and latency.

At Dextralabs, we summarize it as: “The next phase of AI ROI depends on optimizing every layer, from silicon to system to prompt.”

To move forward, organizations need a structured approach.

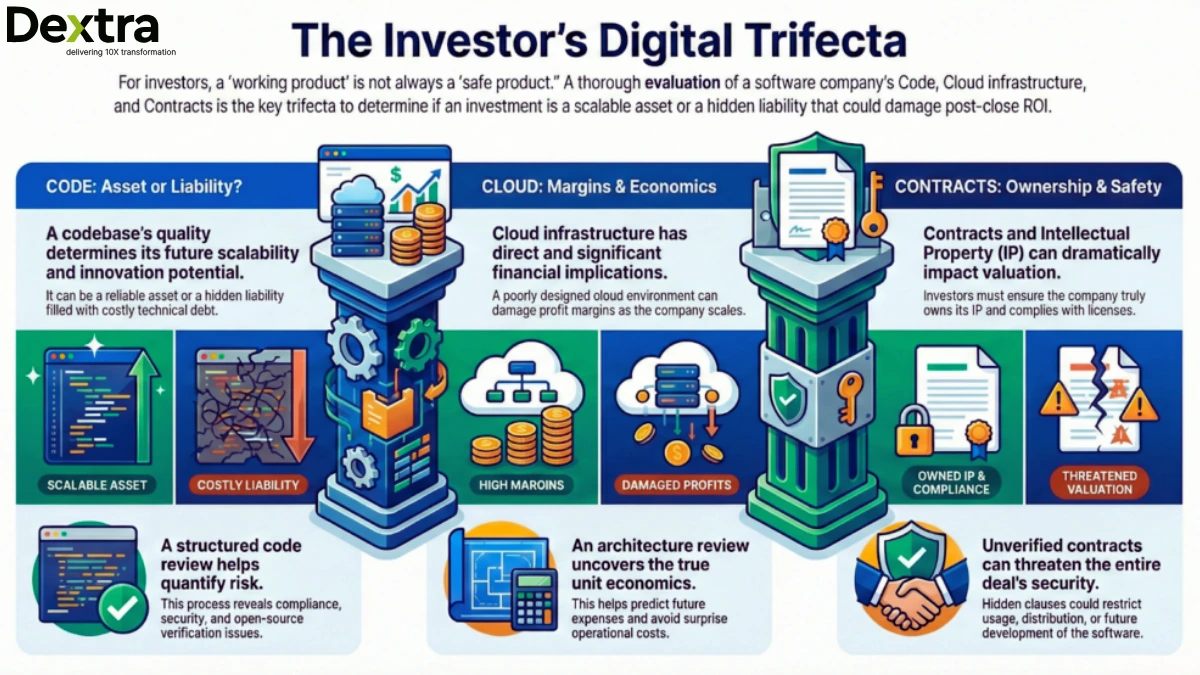

How to Measure AI ROI: The Dextralabs Enterprise Framework

Based on client engagements, industry research, and real-world deployments, Dextralabs developed the AI ROI Framework, a structured model for aligning AI investments with measurable results.

The Framework Has Three Dimensions

Let’s have a look at them:

1. Financial Dimension

Measure direct impact on revenue, cost savings, and TCO reduction.

Before/after comparisons and forecasting models help quantify value over time.

2. Operational Dimension

Measure how AI improves business processes: faster decisions, higher accuracy, fewer manual steps, lower error rates.

3. Strategic Dimension

Measure innovation enablement, improved compliance, enhanced risk posture, and competitive differentiation.

A key principle of the framework is continuity.

AI ROI must be measured continuously, not once.

Enterprises are now deploying AI ROI dashboards that track model cost, performance, usage, quality, and reasoning depth in real time. These dashboards integrate with FinOps workflows and give CFOs a clear view of cost-per-output.
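Cost-per-output is straightforward to compute once model calls are logged. A minimal sketch, assuming a hypothetical log schema (`UsageRecord`) and hypothetical per-1k-token prices; input and output tokens are separate parameters because many providers price them differently.

```python
from dataclasses import dataclass


@dataclass
class UsageRecord:
    """One model call as a dashboard might log it (hypothetical schema)."""
    input_tokens: int
    output_tokens: int
    succeeded: bool  # did the call produce a usable business output?


def cost_per_output(records: list[UsageRecord],
                    usd_per_1k_input: float,
                    usd_per_1k_output: float) -> float:
    """Total token spend divided by the number of successful outputs.

    Failed or discarded calls still cost money, so they raise the unit cost.
    """
    spend = sum(r.input_tokens / 1_000 * usd_per_1k_input
                + r.output_tokens / 1_000 * usd_per_1k_output
                for r in records)
    successes = sum(1 for r in records if r.succeeded)
    if successes == 0:
        raise ValueError("no successful outputs in this window")
    return spend / successes


# Hypothetical prices: $0.003 per 1k input tokens, $0.015 per 1k output tokens.
records = [UsageRecord(2000, 500, True),
           UsageRecord(1500, 400, False),
           UsageRecord(2500, 600, True)]
print(f"cost per output: ${cost_per_output(records, 0.003, 0.015):.4f}")
```

Dividing by successful outputs rather than by calls means retries and discarded answers show up directly in the unit cost a CFO sees.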

Cloud AI Cost Optimization: Doing More With Less

As enterprises scale LLM workloads, cloud AI cost optimization is becoming a critical priority for managing GPU demand and long-running inference costs.

Most enterprises dramatically overspend on cloud-based AI. The good news: reducing those costs doesn’t require sacrificing performance; it requires smarter architecture.

Here are the four pillars of modern cloud AI cost optimization.

1. Resource Management

Strong resource allocation practices directly support cloud AI cost optimization by reducing idle GPU time and preventing overprovisioning. Cloud waste remains the biggest contributor to overspending.

CIOs are adopting:

- Autoscaling

- Workload-aware scheduling

- GPU right-sizing

- Distributed training schedules

- Dedicated GPU pools for predictable inference loads

Techniques like workload-aware scheduling significantly improve AI cloud efficiency by matching compute supply to workload patterns.
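Right-sizing usually starts from a capacity calculation. A minimal sketch, assuming per-GPU throughput has been measured in load tests and that a target utilization below 100% is kept as burst headroom; the `gpus_needed` function and the figures are hypothetical.

```python
import math


def gpus_needed(peak_requests_per_sec: float,
                requests_per_sec_per_gpu: float,
                target_utilization: float = 0.7) -> int:
    """Smallest GPU count that serves peak load while keeping each GPU
    at or below target_utilization (headroom for bursts)."""
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    effective_capacity = requests_per_sec_per_gpu * target_utilization
    return math.ceil(peak_requests_per_sec / effective_capacity)


# Hypothetical: 450 req/s at peak, 40 req/s per GPU measured in load tests.
print(gpus_needed(450, 40))                          # → 17
print(gpus_needed(450, 40, target_utilization=1.0))  # → 12
```

An autoscaler applies the same calculation continuously against observed request rates instead of a fixed peak, which is what keeps idle GPU time down.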

2. Cost Governance

FinOps is no longer optional; it’s essential.

Enterprises now track:

- Cost tagging across models, teams, workloads

- Daily usage patterns

- Token-per-output ratios

- Alerts for unexpected cost spikes

- Weekly audits of GPU utilization

This allows finance teams to anticipate spend instead of chasing it.
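Two of these practices, cost tagging and spike alerts, can be sketched in a few lines. This assumes billing line items have already been exported as `(tag, cost)` pairs; the function names and the 1.5x threshold are hypothetical choices, not a FinOps standard.

```python
from collections import defaultdict
from statistics import mean


def spend_by_tag(line_items: list[tuple[str, float]]) -> dict[str, float]:
    """Sum cost per tag, e.g. per team, per model, or per workload."""
    totals: defaultdict[str, float] = defaultdict(float)
    for tag, cost in line_items:
        totals[tag] += cost
    return dict(totals)


def is_spike(history: list[float], today: float, threshold: float = 1.5) -> bool:
    """Flag today's spend if it exceeds threshold x the trailing average."""
    if not history:
        return False
    return today > threshold * mean(history)


items = [("team:support", 120.0), ("team:risk", 80.0), ("team:support", 30.0)]
print(spend_by_tag(items))  # → {'team:support': 150.0, 'team:risk': 80.0}
print(is_spike([200, 210, 190, 205, 195, 200, 200], today=340))  # → True
```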

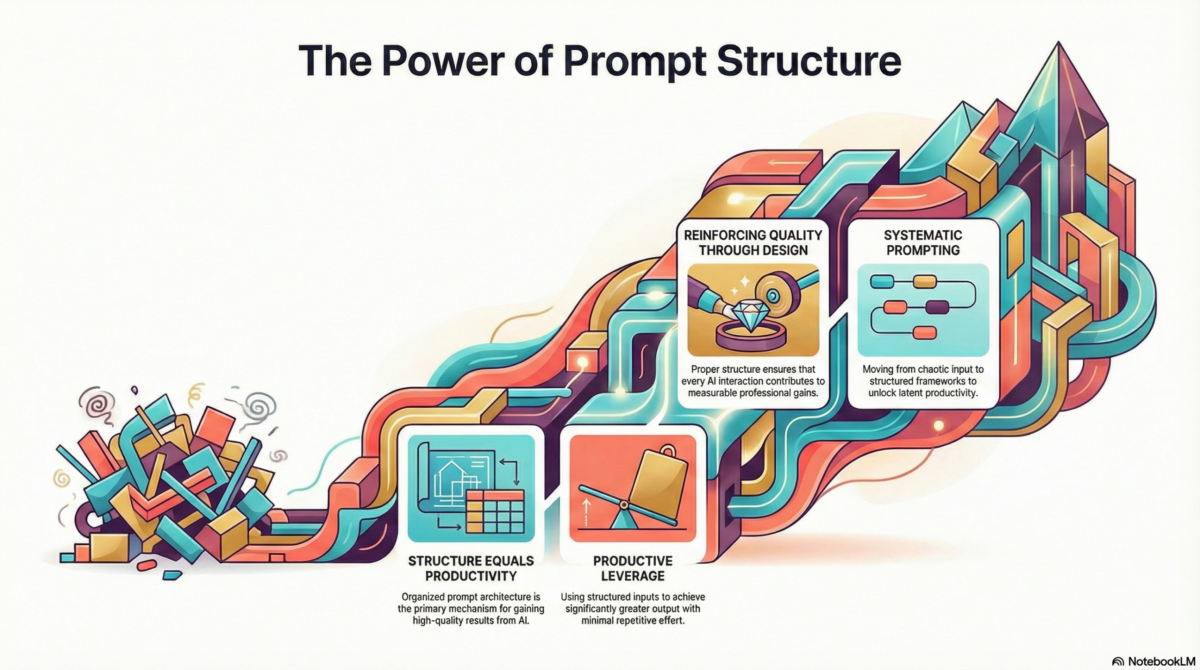

3. Model Optimization

This is where the largest savings often hide.

Techniques include:

- Model quantization to reduce precision and compute requirements

- Pruning to eliminate redundant model parameters

- Distillation to produce smaller, more efficient models

- Prompt optimization to reduce unnecessary tokens

- Context compression to shorten input sequences

Depending on the workload, a single optimization pass can reduce inference costs by 30–50%.
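Quantization is the easiest of these techniques to illustrate. The sketch below shows symmetric per-tensor int8 quantization in plain Python, one common scheme among several; production toolchains add per-channel scales, calibration data, and lower-bit formats. The weight values are made up.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization.

    Maps floats to integers in [-127, 127] using one scale factor,
    so each weight needs 1 byte instead of 4 (float32).
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Approximate the original floats from the int8 values."""
    return [v * scale for v in q]


weights = [0.42, -1.27, 0.05, 0.88, -0.31]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, f"max reconstruction error: {max_error:.4f}")
```

The rounding error per weight is at most half the scale, which is why quantization often costs little accuracy while cutting memory traffic roughly fourfold relative to float32.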

4. Strategic Procurement

CIOs now evaluate:

- Open-source models vs proprietary APIs

- Fine-tuning vs retrieval-augmented techniques

- Reserved GPU capacity vs on-demand cloud pricing

- Dedicated instances vs shared clusters

- AI-specific cloud providers vs general-purpose cloud

The result is clear:

Smart procurement can reshape the entire ROI curve.
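One of these trade-offs, reserved GPU capacity versus on-demand pricing, reduces to a break-even utilization. A minimal sketch, assuming a flat hourly rate for each option and ignoring commitment terms, volume discounts, and spot pricing; the function names and prices are hypothetical.

```python
def breakeven_utilization(on_demand_hourly: float, reserved_hourly: float) -> float:
    """Fraction of hours a GPU must be busy for a reservation to beat on-demand.

    Reserved capacity is paid for every hour; on-demand only for hours used.
    """
    return reserved_hourly / on_demand_hourly


def cheaper_option(on_demand_hourly: float, reserved_hourly: float,
                   expected_utilization: float) -> str:
    if expected_utilization >= breakeven_utilization(on_demand_hourly, reserved_hourly):
        return "reserved"
    return "on-demand"


# Hypothetical prices: $4.00/h on demand, $2.60/h effective reserved rate.
print(f"{breakeven_utilization(4.00, 2.60):.0%}")             # → 65%
print(cheaper_option(4.00, 2.60, expected_utilization=0.80))  # → reserved
```

Steady inference loads tend to clear the break-even point; bursty experimentation often does not, which is why many enterprises mix both.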

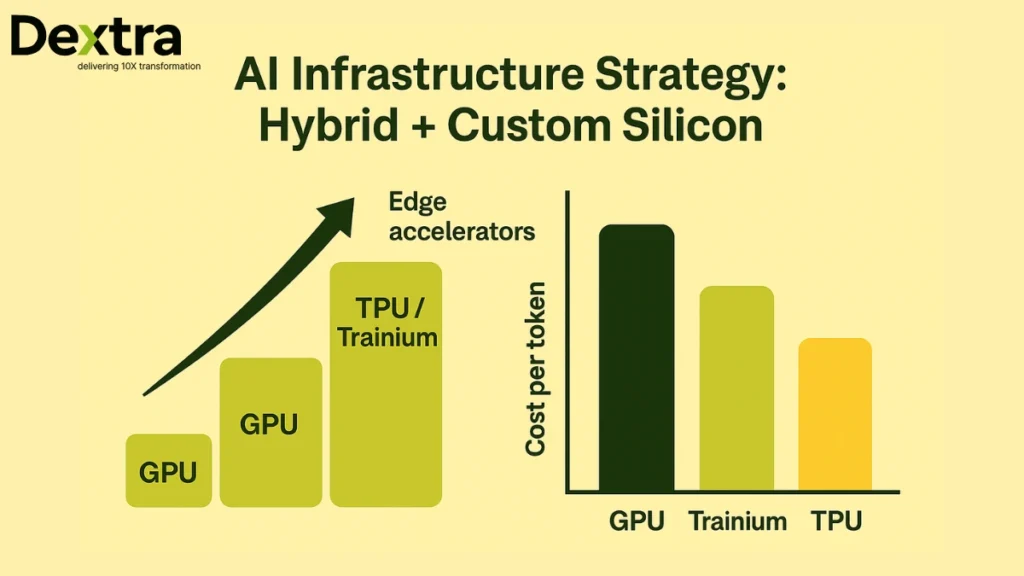

The Rise of Custom Silicon: The New Frontier of AI Efficiency

The fastest-growing trend in AI infrastructure isn’t bigger clusters; it’s custom silicon.

To meet the rising compute demands, enterprises are increasingly adopting custom AI chips designed specifically for transformer and LLM workloads.

General-purpose GPUs are no longer the most efficient option for all workloads. They are powerful, but expensive, energy-intensive, and often inefficient for specific operations.

That has led to the rise of domain-specific AI chips, designed explicitly for AI training and inference.

Why Enterprises Are Shifting to Custom Silicon

- 2–3x faster training throughput

- 30–50% lower power consumption

- Lower cost per token generated

- Optimized for transformer models, attention mechanisms, and LLM workloads

The Major Players

The market for custom AI chips is expanding rapidly as hyperscalers build silicon optimized for training and inference efficiency.

- Google TPU

- AWS Trainium & Inferentia

- Microsoft Maia

- Meta MTIA

Enterprises are also conducting feasibility studies for on-premises accelerators from Habana (Intel Gaudi), Cerebras, Groq, and SambaNova.

And the momentum is growing. Industry analysts estimate that custom silicon could represent over 50% of global AI semiconductor revenue by 2030.

This trend is not only a matter of performance; it’s a matter of ROI. Hardware is becoming a competitive advantage.

As Dextralabs states:

“In the age of trillion-parameter models, hardware defines your AI ROI curve.”

Over time, custom AI chips will become a central factor in determining an organization’s AI cost structure and scalability.

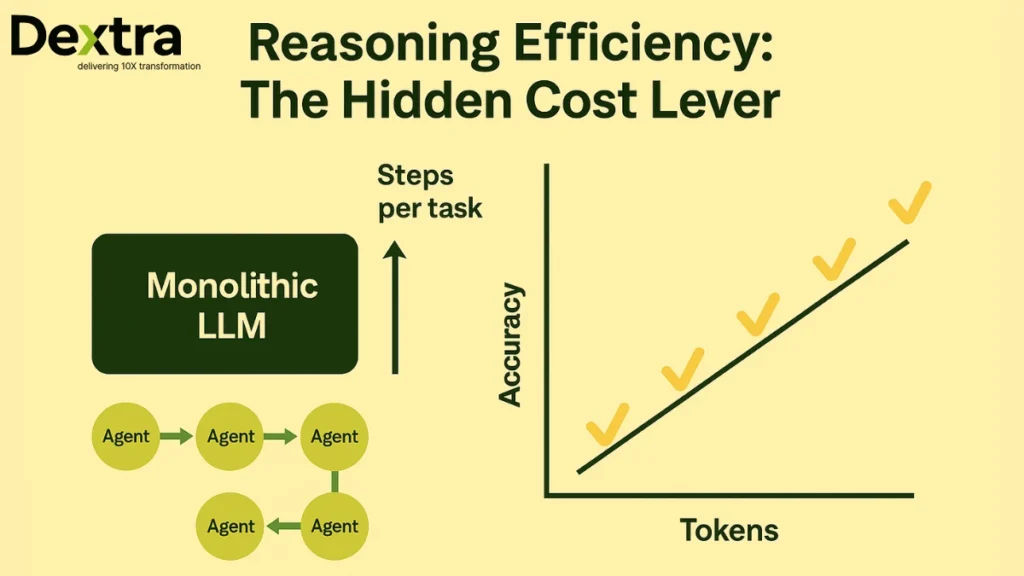

Reasoning Efficiency: The Hidden Multiplier of AI ROI

Here’s a concept few enterprises are discussing, yet it drives a large share of AI cost outcomes:

Reasoning Efficiency

It refers to the model’s ability to complete tasks using fewer steps, fewer tokens, and less computational intensity while maintaining or improving accuracy.

In modern LLM systems, especially agentic AI architectures, reasoning is the cost center.

Key Reasoning Metrics:

- Steps per task

- Token usage per decision

- Accuracy per dollar spent

- Latency per reasoning depth

- Quality stability across reasoning modes
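Most of these metrics fall out of ordinary task logs. A minimal sketch, assuming a hypothetical per-task log record (`TaskRun`) and interpreting "accuracy per dollar" as correct answers per dollar and "latency per reasoning depth" as seconds per reasoning step. Quality stability is omitted because it requires comparing the same tasks across reasoning modes.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskRun:
    """One completed task as an agent runtime might log it (hypothetical schema)."""
    steps: int
    tokens: int
    cost_usd: float
    latency_s: float
    correct: bool


def reasoning_metrics(runs: list[TaskRun]) -> dict[str, float]:
    if not runs:
        raise ValueError("no runs to summarize")
    total_cost = sum(r.cost_usd for r in runs)
    correct = sum(r.correct for r in runs)  # True counts as 1
    return {
        "steps_per_task": mean(r.steps for r in runs),
        "tokens_per_decision": mean(r.tokens for r in runs),
        "accuracy": correct / len(runs),
        "correct_answers_per_dollar": correct / total_cost if total_cost else float("inf"),
        "latency_per_step_s": sum(r.latency_s for r in runs) / sum(r.steps for r in runs),
    }


runs = [TaskRun(4, 1200, 0.012, 2.0, True),
        TaskRun(6, 1800, 0.018, 3.0, True),
        TaskRun(5, 1500, 0.015, 2.5, False)]
print(reasoning_metrics(runs))
```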

The emergence of multi-agent systems is reshaping this dynamic.

Monolithic Models vs Agentic Systems

Here is how the two approaches compare:

Monolithic LLMs

- High token usage

- Expensive inference

- Less modular

- Harder to optimize

Agentic Architectures

- Specialized agents handling specific subtasks

- Reusable context and memory

- Lower token consumption per subtask, when each agent is narrowly scoped

- Better alignment with business workflows
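One way narrowly scoped agents lower cost is a router that sends each subtask to the cheapest model able to handle it. A minimal sketch under stated assumptions: the tier names, prices, task types, and the rule-based routing criterion are all hypothetical, and real routers often use a classifier or confidence score instead of a fixed allow-list.

```python
from dataclasses import dataclass


@dataclass
class ModelTier:
    name: str
    usd_per_1k_tokens: float


# Hypothetical tiers and prices, for illustration only.
SMALL = ModelTier("small-specialist", 0.0005)
LARGE = ModelTier("large-generalist", 0.0150)

SIMPLE_TASKS = {"classify_ticket", "extract_invoice_fields", "summarize_short"}


def route(task_type: str, needs_multistep_reasoning: bool) -> ModelTier:
    """Send well-scoped, single-step subtasks to the cheap specialist;
    escalate open-ended or multi-step work to the large model."""
    if task_type in SIMPLE_TASKS and not needs_multistep_reasoning:
        return SMALL
    return LARGE


def estimated_cost(tier: ModelTier, tokens: int) -> float:
    return tier.usd_per_1k_tokens * tokens / 1_000


# Hypothetical workload: 800 simple subtasks, 200 complex ones, 2,000 tokens each.
workload = [("classify_ticket", False)] * 800 + [("draft_contract_clause", True)] * 200
routed = sum(estimated_cost(route(t, m), 2_000) for t, m in workload)
baseline = sum(estimated_cost(LARGE, 2_000) for _ in workload)
print(f"routed ${routed:.2f} vs all-large ${baseline:.2f}")  # → routed $6.80 vs all-large $30.00
```

The savings depend entirely on how much of the workload can safely go to the small tier, which is why routing decisions should be validated against accuracy, not only cost.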

This is why Dextralabs builds Reasoning-Oriented Agents, designed to:

- Reduce inference overhead

- Minimize unnecessary reasoning steps

- Increase accuracy per token

- Deliver predictable performance at scale

This is the future of enterprise AI economics: Smarter reasoning, not bigger models.

The Business Case for Sustainable AI Economics

CIOs today are not just technologists; they are stewards of enterprise investment. The shift toward sustainable AI economics aligns directly with the core goals of the business.

Here’s how optimized AI infrastructure creates tangible business value:

Faster Payback Periods

Companies shorten the time between investment and return by aligning models with efficient hardware and cloud strategies.
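The payback arithmetic shows why run costs matter as much as benefits. A minimal sketch, assuming a constant monthly benefit and run cost with no ramp-up or discounting; the `payback_months` function and the figures are hypothetical.

```python
def payback_months(upfront_cost: float, monthly_benefit: float,
                   monthly_run_cost: float) -> float:
    """Months until cumulative net benefit covers the upfront investment."""
    net = monthly_benefit - monthly_run_cost
    if net <= 0:
        return float("inf")  # never pays back at current run rates
    return upfront_cost / net


# Hypothetical: same $600k build and $90k/month benefit, before vs after optimization.
before = payback_months(600_000, 90_000, monthly_run_cost=50_000)
after = payback_months(600_000, 90_000, monthly_run_cost=30_000)
print(f"{before:.1f} -> {after:.1f} months")  # → 15.0 -> 10.0 months
```

In this example, cutting the monthly run cost by $20k shortens payback by a third without any change in the benefit delivered.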

Reduced Carbon Footprint

Custom silicon, optimized models, and hybrid AI clouds can consume less energy, supporting sustainability reporting and ESG alignment.

Better Financial Predictability

CFOs gain visibility into monthly inference costs, enabling better planning and budgeting.

Cross-Functional Resilience

CIOs collaborate with procurement, finance, operations, and data science to establish unified governance.

Dextralabs plays a crucial role here by bridging the gap:

“Dextralabs partners with enterprises to align AI investments with measurable financial outcomes, connecting technical efficiency to business profitability.”

Case Insight: How Dextralabs Improves Enterprise AI ROI

Enterprises work with Dextralabs for a simple reason:

They need real ROI, not slideware. Here’s how the process typically works:

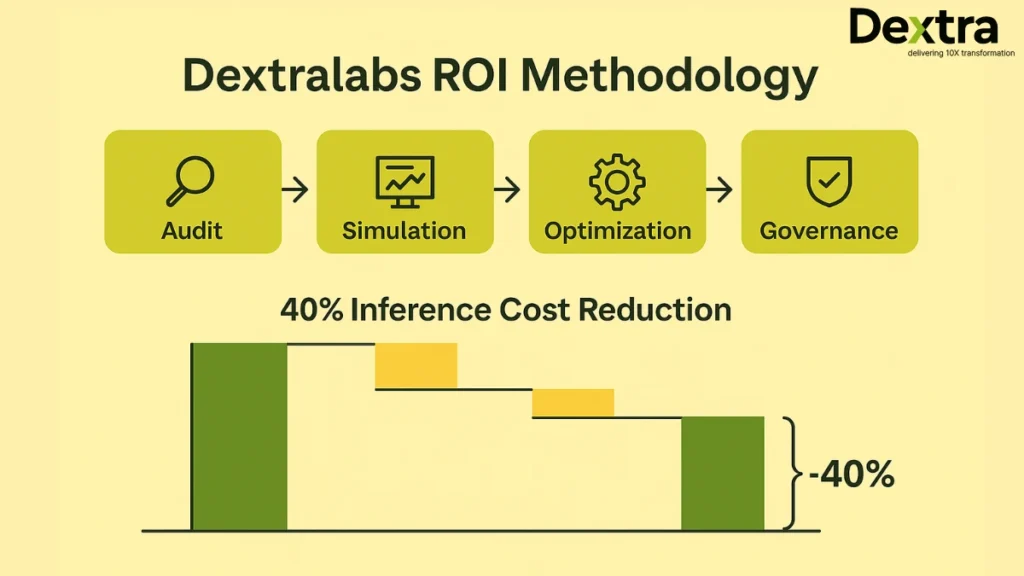

1. AI Cost Audit

Dextralabs analyzes compute, cloud, model architecture, data pipelines, and token usage to identify overspending zones.

2. ROI Simulation Models

Scenario-based simulations forecast returns under various improvements, from switching silicon to optimizing prompts.
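A scenario simulation can start as simply as applying estimated reductions to a baseline. A minimal sketch, assuming each improvement's savings estimate is known in advance and that savings compound multiplicatively rather than add; the `simulate` function, scenario names, baseline, and percentages are hypothetical and not Dextralabs' actual model.

```python
def simulate(baseline_monthly_cost: float,
             scenarios: dict[str, list[float]]) -> dict[str, float]:
    """Projected monthly cost per scenario.

    Each scenario is a list of fractional reductions (0.3 = 30% cheaper).
    Reductions are applied multiplicatively, since a second optimization
    only acts on the cost left over after the first.
    """
    results = {}
    for name, reductions in scenarios.items():
        cost = baseline_monthly_cost
        for r in reductions:
            cost *= (1 - r)
        results[name] = cost
    return results


# Hypothetical baseline and reduction estimates.
projections = simulate(100_000, {
    "prompt optimization only": [0.15],
    "quantization + prompts": [0.30, 0.15],
    "silicon migration + quantization + prompts": [0.25, 0.30, 0.15],
})
for name, cost in projections.items():
    print(f"{name}: ${cost:,.0f}/month")
```

Compounding matters: a 30% and a 15% reduction together yield 40.5% savings, not 45%, so adding up vendor estimates overstates the combined effect.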

3. Infrastructure Optimization Roadmap

A step-by-step plan covering:

- Model distillation

- Retrieval optimization

- Silicon migration

- Cloud strategy

- Governance improvements

4. Governance & Observability

Establishing policies, guardrails, dashboards, and cost controls.

Outcome Example

A large enterprise reduced inference spending by 40% after Dextralabs redesigned its LLM architecture and applied quantization and agentic routing.

This is the kind of ROI transformation that is becoming standard, not exceptional.

Conclusion: AI ROI Is No Longer a Finance Metric; It’s a Strategy

Enterprises that master cloud AI cost optimization will achieve faster payback periods and more predictable operating costs. We’ve entered the era where the economics of AI matter as much as the innovation.

CIOs and CTOs are revisiting everything, from how models reason to what silicon they run on.

The winners will be the enterprises that master:

- Reasoning efficiency

- Cloud cost strategy

- Model optimization

- Custom silicon adoption

- Continuous ROI measurement

AI is no longer a “deploy and forget” technology. It’s a living system: economic, strategic, architectural. Every decision influences its ROI, impact, and sustainability.

If your organization is scaling AI but struggling to control costs, justify investment, or deliver measurable outcomes, now is the time to act.

Ready to Maximize Your AI ROI?

Dextralabs helps enterprises turn AI from a cost center into a value engine.

Through architecture intelligence, model optimization, and cloud cost strategy, we help organizations build the most efficient, scalable, and economically sustainable AI systems possible.