The boardroom conversation around artificial intelligence has fundamentally shifted. Where once the debate was “should we adopt AI?” it is now “how do we govern it?” For enterprises operating in regulated industries across the United States and the UAE, this distinction is not semantic; it is existential. Regulatory mandates are tightening, data breach costs are rising, and the reputational stakes of an ungoverned AI deployment have never been higher.

Against this backdrop, one model has emerged as the preferred choice for compliance-conscious enterprises: Claude, developed by Anthropic. This blog explores why enterprise leaders, from Chief Risk Officers to Chief Information Security Officers, are increasingly building their AI governance strategies around Claude and what that means for organisations considering their own AI deployments.

The Enterprise AI Governance Imperative: Why It Can No Longer Be an Afterthought

The numbers are sobering. According to the OneTrust survey of 1,250 IT leaders, 98% of enterprises plan to increase their AI governance budgets in the next financial year, with the average organisation anticipating a 24% jump in spend. IT leaders with advanced AI adoption spent approximately 37% more time managing AI risks this year than last year.

A Research report projects that AI governance software spend will grow at a 30% CAGR from 2024 to 2030, driven by intensifying regulations such as the EU AI Act and US federal enforcement guidelines.

The IBM Institute for Business Value research found that 68% of CEOs say AI governance must be integrated upfront in the design phase rather than retrofitted after deployment — yet in practice, a significant capability gap persists. Only 29% of CROs and CFOs say regulatory and compliance risks have been sufficiently addressed within their organisations. (IBM IBV, The Enterprise Guide to AI Governance, 2024)

For enterprises in the financial services, healthcare and legal sectors operating in markets like the UAE and USA, where regulatory scrutiny is particularly intense, these numbers are not just statistics; they are warnings. The question is no longer whether to govern AI, but which model offers the most governance-native architecture from the ground up.

What Is Claude AI Safety? Understanding the Architecture of Alignment

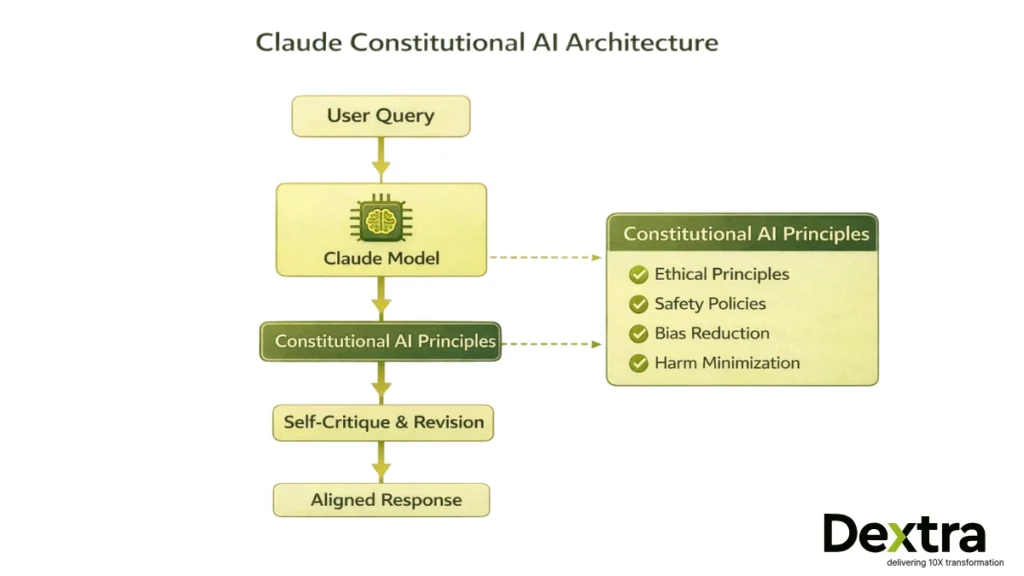

Claude AI safety refers to the suite of built-in alignment mechanisms that Anthropic has embedded into Claude at the training and infrastructure level. These include:

- Constitutional AI principles — a self-governing ethical framework baked into the model at the training stage

- Refusal and harm minimisation policies — calibrated output controls for sensitive domains

- Tool permission-based architecture — requiring explicit approval for agentic actions

- Enterprise API controls — role-based integrations, secure key management and audit logging

Unlike models where safety is layered on as post-training filters, Claude’s alignment is an intrinsic property of how the model reasons and generates responses. For enterprise buyers, this distinction matters enormously. A model that has been taught to reason safely is fundamentally different from one that has simply been told to avoid certain outputs after the fact.

What Is Constitutional AI and Why Does It Matter for Enterprises?

The philosophical and technical foundation of Claude’s safety profile is Constitutional AI (CAI) — Anthropic’s proprietary training methodology first published in the landmark research paper:

“Constitutional AI: Harmlessness from AI Feedback” — Anthropic, December 2022 (arXiv:2212.08073)

The paper’s core insight is elegant: rather than relying solely on vast human feedback datasets to identify harmful outputs, Anthropic trained Claude using a predefined set of ethical principles — a “constitution” — against which the model evaluates and revises its own responses. The training process operates in two phases:

Supervised Critique-Revision (SL-CAI): The model generates a response, critiques it against a constitutional principle and then revises it to be more aligned. These revised responses are used to fine-tune the model.

Reinforcement Learning from AI Feedback (RLAIF): The fine-tuned model generates pairs of responses; a separate feedback model selects the more aligned one. These AI preferences train a reward model used for reinforcement learning — reducing the reliance on costly and inconsistent human labelling at scale.
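The first phase described above can be sketched as a simple loop. This is an illustrative toy, not Anthropic's training code: `Model`, `critique_and_revise` and `build_finetuning_pairs` are hypothetical names, the prompt wording is assumed, and the reinforcement learning phase is left out.

```python
from typing import Callable, List, Tuple

# Hypothetical stand-in for a language model call: prompt text in, text out.
Model = Callable[[str], str]


def critique_and_revise(model: Model, prompt: str, principles: List[str]) -> Tuple[str, str]:
    """Return (initial_response, revised_response) for one prompt."""
    initial = model(prompt)
    revised = initial
    for principle in principles:
        # Step 1: ask the model to critique its own response against a principle.
        critique = model(
            f"Critique the response against this principle: {principle}\n"
            f"Response: {revised}"
        )
        # Step 2: ask the model to rewrite the response in light of the critique.
        revised = model(
            "Rewrite the response to address the critique.\n"
            f"Critique: {critique}\nResponse: {revised}"
        )
    return initial, revised


def build_finetuning_pairs(model: Model, prompts: List[str], principles: List[str]):
    """Collect (prompt, revised_response) pairs to use as supervised fine-tuning data."""
    return [(p, critique_and_revise(model, p, principles)[1]) for p in prompts]
```

The point of the sketch is that the training data comes from the revised responses, so the written principles directly shape what the model is fine-tuned on.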

What makes CAI particularly compelling for enterprise compliance teams is its transparency and scalability. Because the principles are explicit and documented, it becomes possible to audit why a model behaved in a particular way, a critical requirement for regulated industries where explainability is not optional. Crucially, CAI also produces models that are non-evasive: they engage with complex, sensitive queries by explaining their reasoning rather than simply refusing, which makes them far more useful in compliance-heavy workflows where nuanced judgment is required.

Constitutional AI improves output alignment without compromising reasoning depth, critical for compliance-heavy industries.

The Cost of Governance Neglect

For sceptics who believe governance is a luxury, the data offers a stark corrective. AI hallucinations, instances where models generate confident but factually incorrect outputs, represent one of the most costly failure modes in enterprise AI deployments.

According to research collated in the AI Hallucination Report 2025, global business losses from AI hallucinations reached an estimated $67.4 billion in 2024. A Deloitte survey found that 47% of enterprise AI users made at least one major business decision based on hallucinated content in 2024. Knowledge workers now spend an average of 4.3 hours per week verifying AI outputs, equating to approximately $14,200 per employee annually in productivity losses (Forrester Research, 2025).

Beyond hallucinations, Deloitte’s State of AI in the Enterprise survey of 3,235 global leaders found that only one in five companies has mature governance for autonomous AI agents, despite agentic AI usage being poised for significant growth.

A Cloud Security Alliance study found that only approximately one quarter of organisations have comprehensive AI security governance in place, and directly links governance maturity to organisational confidence in AI security outcomes.

These figures represent the cost of governance neglect. For regulated enterprises in the UAE and USA, where data protection laws and financial regulations impose additional layers of accountability, these risks are further amplified.

What Are the Enterprise AI Governance Challenges?

Before evaluating how Claude solves these problems, it is useful to map the most common governance failure points that enterprises encounter:

- Data leakage risks: Sensitive corporate or client data exposed through AI inputs in unsecured environments

- Hallucination exposure: Confident but incorrect AI outputs causing compliance failures, financial loss, or regulatory action

- Regulatory non-compliance: Outputs that violate sector-specific regulations such as HIPAA, GDPR, UAE PDPL, or SEC rules

- Lack of audit trails: Inability to explain or reproduce how an AI reached a conclusion, failing explainability requirements

- Prompt injection risks: Malicious inputs designed to override model instructions and extract sensitive information

- Tool misuse in agentic systems: Autonomous AI agents taking unintended or harmful actions without appropriate human oversight

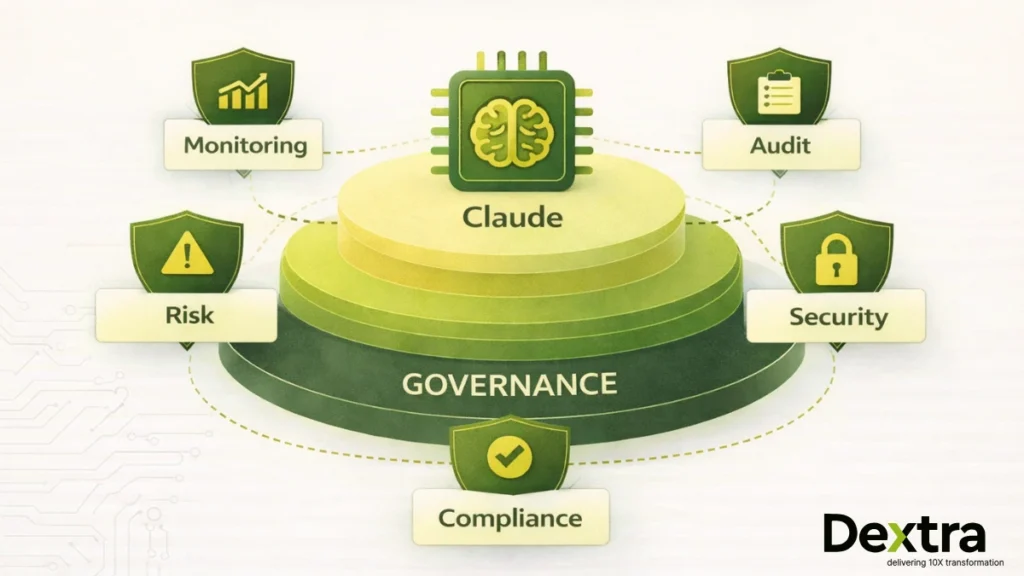

Claude’s architecture addresses each of these failure points through a layered governance framework.

How Does Claude Support Enterprise AI Governance?

1. Output Alignment Controls

Claude’s Constitutional AI training means that output alignment is not a post-deployment patch; it is a fundamental property of how the model reasons. Refusal patterns are calibrated for nuance rather than blunt avoidance: Claude engages with complex topics by explaining its reasoning, making it audit-ready in ways that opaque evasions are not. Harm minimisation and bias monitoring are embedded in the model’s reward structure through the RLAIF training process, not appended as surface-level filters.

2. Tool Permission-Based Architecture

In agentic deployments, where Claude is given tools to execute actions in the real world, Anthropic’s design philosophy mandates explicit user approval for consequential actions. This human-in-the-loop constraint is critical for enterprise risk management, particularly in financial services where autonomous AI actions can have immediate material consequences. The controlled execution environment prevents runaway agent behaviour, which has become an increasing governance concern as agentic AI matures.
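On the application side, an approval gate of this kind might look like the sketch below. It is a minimal illustration under stated assumptions, not part of any Anthropic SDK: `ToolGate`, its fields and the `approve` callback are hypothetical names for logic an enterprise would implement in its own integration layer.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set, Tuple


@dataclass
class ToolGate:
    """Hypothetical wrapper that allowlists tools and gates consequential ones."""
    tools: Dict[str, Callable[..., Any]]   # allowlisted tool name -> implementation
    consequential: Set[str]                # tools that need human approval
    approve: Callable[[str, dict], bool]   # human approval callback (assumed)
    audit: List[Tuple[str, str]] = field(default_factory=list)

    def call(self, name: str, **kwargs: Any) -> Any:
        if name not in self.tools:
            self.audit.append((name, "denied: not allowlisted"))
            raise PermissionError(f"tool {name!r} is not allowlisted")
        if name in self.consequential and not self.approve(name, kwargs):
            self.audit.append((name, "denied: approval refused"))
            raise PermissionError(f"tool {name!r} was not approved")
        self.audit.append((name, "executed"))
        return self.tools[name](**kwargs)
```

In this design every attempt, whether executed or denied, leaves an entry in `audit`, so the approval decisions themselves become part of the record.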

3. API-Level Security

Enterprise deployments via the Anthropic Claude API benefit from secure key management, role-based integration controls and the ability to configure system prompts that establish organisation-specific governance boundaries. Unlike consumer-facing interfaces, API deployment allows enterprises to define the precise scope of Claude’s capabilities within their environment — a non-negotiable requirement for ISO-aligned governance frameworks.
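As a rough illustration of a governance boundary set through the system prompt, the sketch below builds a request body. The field names (`model`, `max_tokens`, `system`, `messages`) follow the Messages API request format as we understand it and should be checked against the current API documentation; the prompt text, the `build_request` helper and the model placeholder are assumptions made for this example.

```python
# Illustrative governance instructions; real wording would come from your policy team.
GOVERNANCE_PROMPT = (
    "You assist compliance analysts at a regulated financial institution. "
    "Only answer questions about the documents provided. "
    "If you are not certain, say so instead of guessing. "
    "Do not give legal or investment advice."
)


def build_request(user_text: str, model: str, max_tokens: int = 1024) -> dict:
    """Build a request body with an organisation-level system prompt.

    `model` is passed in by the caller; no specific model ID is assumed here.
    """
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": GOVERNANCE_PROMPT,
        "messages": [{"role": "user", "content": user_text}],
    }
```

Keeping the system prompt in one versioned constant, rather than scattered across application code, makes it easier to review and audit changes to the governance boundary.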

4. Logging, Monitoring & Accountability

Responsible AI deployment requires the ability to monitor, review and account for AI-generated outputs. Claude’s API-level logging and usage tracking capabilities provide the foundation for the audit trails that regulators increasingly require. When integrated with existing enterprise monitoring infrastructure, this creates a comprehensive accountability layer that satisfies both internal governance requirements and external regulatory obligations.
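One way to build such an audit trail in the integration layer is sketched below. It assumes the enterprise writes its own log records around each model call; `audit_record` and `to_log_line` are hypothetical helpers, and the choice to store hashes rather than raw text is an assumption that depends on your retention policy.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(user_id: str, model: str, prompt: str, response: str,
                 now: Optional[datetime] = None) -> dict:
    """Build one audit entry for a model interaction.

    Hashes let you later prove what was sent and received
    without keeping sensitive text in the log itself.
    """
    timestamp = (now or datetime.now(timezone.utc)).isoformat()
    return {
        "timestamp": timestamp,
        "user_id": user_id,
        "model": model,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "response_sha256": hashlib.sha256(response.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
        "response_chars": len(response),
    }


def to_log_line(record: dict) -> str:
    """Serialise a record as one JSON line, ready for a SIEM or log pipeline."""
    return json.dumps(record, sort_keys=True)
```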

Anthropic Responsible AI Philosophy

What distinguishes Anthropic from many AI vendors is that safety is the company’s stated core mission, not a compliance checkbox. Founded by former members of OpenAI with a specific focus on AI safety research, Anthropic operates from the premise that building beneficial AI requires solving the alignment problem, not deferring it.

Anthropic’s responsible AI framework includes alignment-first research underpinned by the Constitutional AI methodology; red-teaming and adversarial evaluation that aligns with NIST AI Risk Management Framework recommendations; model transparency through published usage policies, model cards and safety documentation; and continuous safety testing that extends beyond pre-launch into ongoing evaluation as capabilities evolve.

Anthropic actively conducts red-team testing and simulated adversarial evaluations to identify rare high-risk behaviours before large-scale deployment.

For US federal procurement and UAE enterprise contexts, Anthropic’s documented commitment to NIST AI Risk Management alignment and ISO-style governance modelling provides the evidential foundation that procurement officers and legal teams require.

What Are Claude Enterprise Use Cases in Regulated Industries?

The abstract case for Claude’s governance profile is best understood through concrete applications. Across regulated sectors in the UAE and USA, Claude is enabling capabilities that were previously considered too high-risk for AI deployment.

1. Financial Services

- Risk summarisation and regulatory document analysis: processing complex compliance documents while maintaining output traceability

- Fraud pattern explanation: providing reasoning chains that compliance teams can audit and validate

- Automated monitoring of regulatory updates across multiple jurisdictions: outputs include source citations for human verification

2. Healthcare

- Clinical documentation assistance: operating within configurable guardrails aligned to HIPAA requirements

- Claims analysis and compliant summarisation: reducing administrative burden while maintaining the audit trails required for insurance and regulatory review

- Structured information extraction from unstructured medical records: human-in-the-loop validation is integrated into the workflow

3. Legal & Compliance

- Contract review and policy comparison across large document sets: the model is designed to flag uncertainty rather than fabricate conclusions

- Regulatory monitoring across multiple regimes: particularly valuable for enterprises operating across UAE and US jurisdictions simultaneously

- Compliance gap analysis and reporting: leveraging Claude’s ability to reason about regulatory requirements rather than simply match keywords

Claude AI Safety vs Other LLMs: Key Differences

Enterprise buyers evaluating Claude AI safety against alternatives such as GPT-4 and other leading models should assess governance capability across four dimensions rather than focusing solely on benchmark performance:

Alignment approach: Claude’s Constitutional AI training provides a documented, peer-reviewed methodology for alignment. The principles used are explicit and auditable — unlike processes where the values being reinforced are less transparent to enterprise buyers conducting due diligence.

Refusal calibration: Claude is designed to engage with complex, sensitive queries through explanation rather than blunt refusal, producing governance-ready outputs that include reasoning chains rather than opaque rejections. This is particularly valuable in regulated industries where the reasoning behind an AI-assisted decision carries as much weight as the decision itself.

Risk management philosophy: Anthropic’s mission-driven safety focus means governance considerations shape model architecture from the outset, rather than being applied as constraints to a capability-maximising model.

Enterprise controls: The Claude API provides configurable system prompts, role-based access and logging capabilities that create the governance infrastructure compliance teams require.

It is worth emphasising that no AI system is infallible. Enterprises deploying Claude must implement monitoring, access control and human oversight to mitigate hallucinations or edge-case behaviours. Claude’s governance profile reduces risk — it does not eliminate it.

Data Privacy & Security

Claude does not automatically make enterprise data compliant; governance depends on implementation architecture. This is a critical distinction that procurement teams and legal counsel must internalise before deployment.

Best practice enterprise deployment of Claude involves avoiding sensitive data inputs via consumer interfaces; deploying via the secure API with organisational system prompts and role-based access controls; considering private cloud or hardened environments for the most sensitive regulated workloads; and implementing continuous output monitoring to catch governance failures in production rather than during audits.
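One small piece of such an architecture is a pre-send redaction step, sketched below. The two regular expressions are deliberately simple illustrations and nowhere near a complete detector for personal or regulated data; `redact` and the placeholder tokens are hypothetical names chosen for this example.

```python
import re

# Illustrative patterns only: a production system would need a far more
# thorough detector, tuned to the data types your regulations cover.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
LONG_DIGITS = re.compile(r"\b\d{9,}\b")  # e.g. account or card-like numbers


def redact(text: str) -> str:
    """Replace email addresses and long digit runs before text leaves your boundary."""
    text = EMAIL.sub("[EMAIL]", text)
    return LONG_DIGITS.sub("[NUMBER]", text)


if __name__ == "__main__":
    print(redact("Contact jane.doe@example.com about account 1234567890123"))
    # prints: Contact [EMAIL] about account [NUMBER]
```

Redaction of this kind reduces exposure but does not replace access controls or a proper data classification process.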

Claude AI Login vs Enterprise API Deployment

When organisations search for “Claude AI login”, they typically encounter Anthropic’s consumer-facing interface at claude.ai. While this is an effective tool for individual productivity, it is architecturally distinct from the enterprise API deployment that regulated organisations require.

The consumer interface provides browser-based access with Anthropic’s standard data handling policies applying to inputs. Enterprise API deployment provides backend integration with system-level governance controls, organisational data boundaries, audit logging and role-based access management. For enterprises deploying Claude in financial services, healthcare, or legal workflows, API deployment is not a preference — it is a governance requirement.

Is Claude AI Free?

Claude offers limited free usage within its consumer chat interface. Enterprise deployments operate on paid API plans structured around token consumption and infrastructure requirements. The specific models available, currently including Claude Opus 4, Claude Sonnet 4 and Claude Haiku, are priced at different tiers reflecting their capability and processing intensity.

For enterprise buyers, the relevant financial comparison is not API cost versus zero — it is API cost versus the cost of ungoverned AI deployment. Against the backdrop of $67.4 billion in annual hallucination losses and an average $800,000 in losses per organisation experiencing AI-related incidents over a two-year period, enterprise-grade governance investment is not a cost. It is risk mitigation with a calculable return.

Claude AI Safety Training & Documentation

Enterprises conducting formal vendor due diligence on Claude AI safety should review the following primary sources directly:

- Anthropic Constitutional AI Research Paper: arXiv:2212.08073

- Anthropic Research Hub: anthropic.com/research

- Anthropic Usage Policies and Model Documentation: docs.anthropic.com

- NIST AI Risk Management Framework: nist.gov/artificial-intelligence

- IAPP AI Governance in Practice Report 2024: iapp.org

These primary sources provide the evidential foundation for a rigorous, audit-ready vendor assessment, which is precisely the standard regulated enterprises should apply.

Anthropic AI Risk Testing & Recent Evaluations

Anthropic’s approach to model evaluation extends well beyond standard pre-launch testing. The organisation conducts advanced edge-case simulations, rare adversarial scenario testing and continuous safety evaluations as model capabilities evolve. This is not a marketing posture; it is a published, peer-reviewed methodology reflected in research outputs.

For enterprise risk officers, the significance of this approach is practical: it means that safety improvements are iterative and documented, not static. When Anthropic identifies a new failure mode, whether through internal red-teaming or external adversarial research, the response is a model update underpinned by research, not a patch applied to surface-level filters.

Governance Checklist Before Deploying Claude in Enterprise:

For enterprises preparing their first Claude deployment or reviewing an existing integration, the following represents the minimum governance baseline:

- Define and document allowed use cases and explicitly exclude prohibited ones in system prompts

- Restrict tool permissions to the minimum necessary for the specific workflow, with human approval gates for consequential actions

- Implement output monitoring for hallucination rates, particularly for factual claims in regulated domains

- Avoid exposing confidential, regulated, or personal data through consumer-facing interfaces

- Establish a human review loop for high-stakes outputs before they inform decisions

- Log all model interactions at the API level and integrate with existing SIEM or audit infrastructure

- Conduct a red-team exercise specific to your use case before full production deployment

- Review Anthropic’s published safety documentation and model cards as part of vendor due diligence
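The output monitoring item in the checklist above could start as simply as the sketch below. It assumes human reviewers sample outputs and flag the ones containing unsupported claims; `HallucinationMonitor`, the window size and the 5% threshold are all illustrative assumptions rather than recommended values.

```python
from collections import deque


class HallucinationMonitor:
    """Track the share of reviewed outputs flagged as hallucinated (rolling window)."""

    def __init__(self, window: int = 100, threshold: float = 0.05):
        self.reviews = deque(maxlen=window)  # oldest reviews drop off automatically
        self.threshold = threshold

    def record(self, flagged: bool) -> None:
        """Record one reviewed output; `flagged` is the reviewer's verdict."""
        self.reviews.append(bool(flagged))

    @property
    def rate(self) -> float:
        """Fraction of reviews in the window that were flagged."""
        return sum(self.reviews) / len(self.reviews) if self.reviews else 0.0

    def breached(self) -> bool:
        """True when the flagged rate exceeds the configured threshold."""
        return self.rate > self.threshold
```

A breach would typically trigger an escalation, for example pausing the workflow or widening the human review sample, rather than a silent log entry.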

Claude Cowork: What It Means for Enterprise Collaboration

Claude Cowork refers to Anthropic’s desktop tool designed for non-developers to automate file and task management through collaborative AI workflows. In an enterprise context, it enables teams to work with Claude on internal knowledge systems, documents and productivity tasks without requiring deep technical integration.

For governance purposes, Cowork should be evaluated within the same framework as any Claude deployment: what data is being processed, where outputs are being stored and what human oversight mechanisms are in place. The productivity gains are real — but they must be captured within a governed architecture to maintain compliance integrity.

How Dextra Labs Designs Governance-First Claude Deployments

At Dextra Labs, we work with enterprises and SMEs across the UAE and USA who recognise that AI adoption without governance is not a shortcut; it is a liability. Our approach to Claude deployments is not to simply connect an API. We design governance-first implementations that create accountability from the architecture upward.

Dextra Labs does not “just integrate APIs.”

Our Enterprise Governance Framework for Claude deployments encompasses:

Risk Assessment Workshop: Mapping your specific use cases against regulatory requirements, identifying high-risk deployment scenarios before a single line of code is written.

Model Selection Strategy: Evaluating which Claude model tier (Opus, Sonnet or Haiku) is appropriate for each workflow based on capability requirements and cost-risk tradeoffs.

Secure Architecture Design: Designing API deployment architectures that maintain data boundaries, enable audit logging and integrate with existing enterprise security infrastructure.

Red-Team Testing: Stress-testing the deployment against adversarial inputs and edge cases specific to your industry and use case.

Policy-Compliant Prompt Engineering: Crafting system prompts and retrieval architectures that encode organisational governance policies into Claude’s operational context.

Deployment Monitoring: Implementing output monitoring, hallucination tracking and anomaly detection appropriate to the sensitivity of the workflow.

Ongoing Model Evaluation: As Anthropic releases model updates, we evaluate governance implications before upgrading production deployments.

As an AI consulting company, our team combines deep familiarity with UAE and US regulatory environments, including financial sector requirements, healthcare compliance frameworks and emerging AI-specific regulations, with enterprise-grade technical infrastructure and a genuine commitment to responsible AI deployment.

If your organisation is evaluating Claude for regulated workloads, Dextra Labs helps you deploy aligned, monitored and risk-mitigated AI systems, not experimental prototypes.

Conclusion

The enterprise AI landscape will be defined not by which organisations deployed AI first, but by which organisations deployed it correctly. As regulatory frameworks in the UAE, USA and Europe continue to mature, the cost of ungoverned AI will rise — not just in financial losses but in regulatory penalties, reputational damage and loss of stakeholder trust.

Claude’s Constitutional AI architecture, Anthropic’s commitment to responsible AI research and the enterprise-grade controls available through the Claude API collectively make it the most governance-aligned large language model available for regulated enterprise deployment.

But architecture is only the beginning. Realising the governance potential of Claude in your organisation requires implementation expertise, regulatory knowledge and a systematic approach to risk management. That is where Dextra Labs brings unique value to enterprises across the UAE and USA, not as API connectors, but as governance-first AI implementation partners.

FAQs:

Q. Who develops and operates Claude AI?

Claude is developed and operated by Anthropic, an AI safety company founded with the specific mission of building reliable, interpretable and steerable AI systems.

Q. Is Claude safer than other AI models for enterprise use?

Claude’s Constitutional AI training and governance-centric architecture make it particularly well-suited for compliance-sensitive enterprise deployments. However, safety is a system property, not a model property; it depends on how Claude is deployed, configured and monitored within your specific environment.

Q. Is Claude AI free?

Consumer usage via claude.ai includes limited free access. Enterprise deployments use paid API plans structured around token consumption and the specific Claude model used. For regulated enterprise workloads, API-based deployment is the appropriate and necessary model.

Q. Is Claude compliant for healthcare or finance deployments?

Compliance is a function of deployment architecture, governance controls and data handling practices, not the model alone. Claude provides governance-friendly capabilities, but achieving compliance with HIPAA, UAE PDPL, SEC regulations, or other frameworks requires thoughtful implementation.

Q. What is Constitutional AI and why does it matter for compliance?

Constitutional AI is Anthropic’s training methodology in which Claude is guided by explicit ethical principles to self-evaluate and revise its outputs. For compliance teams, its importance lies in transparency and auditability: the principles are documented and publicly available, making it possible to reason about why the model behaved in a given way, a critical capability when regulators ask questions.