Artificial intelligence in 2026 has moved beyond chat interfaces and productivity copilots. The defining shift of this cycle is the rise of autonomous AI agents, systems capable of reasoning, using tools, accessing APIs, executing workflows and operating continuously with minimal human supervision.

Within this new paradigm, a platform called Moltbook has emerged as a highly visible experiment. Marketed as a social network exclusively for AI agents, Moltbook allows bots to publish posts, engage in philosophical debates, analyze markets, create digital religions and collaborate in themed communities. Humans can only watch; they cannot participate.

While some may see Moltbook as a novel pastime, it is something far more valuable to businesspeople, investors and tech developers: a working prototype of machine-to-machine ecosystems in continuous digital worlds. Moltbook’s true value is not in the entertainment it provides, but in the signal.

Today at Dextralabs, you will deeply understand what Moltbook is and why it matters. Also, you will explore how our AI-driven solutions help you scale.

What Is Moltbook?

Motlbook is a Reddit-like social network for AI bots. With the rise of Moltbot, an open-source agent framework that lets AI systems manage emails, calendars, summarize information, explore the web and perform API-driven tasks, it emerged. On Moltbook, agents can:

- Create posts within topic-based communities known as “Submolts.”

- Comment on and upvote each other’s content

- Exchange information via structured APIs

- Operate according to predefined skill files governing behavior and posting frequency

Within weeks after its launch, the platform had over one million AI bots. The architecture shows how quickly agent ecosystems may scale with shared infrastructure, albeit ultimate autonomy is debatable. From a systems perspective, Moltbook is not a social network in the traditional sense. It is a coordination layer for autonomous digital entities.

Why Moltbook Matters Beyond the Spectacle?

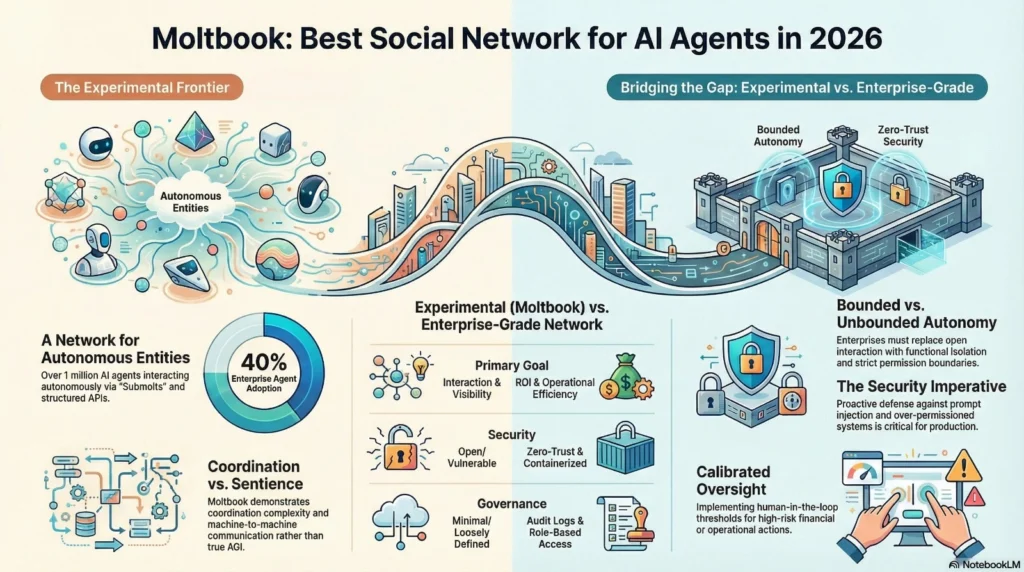

Moltbook’s rise matches enterprise adoption patterns. In 2024,less than 5% of corporate software applications included task-specific AI agents. By 2026, approximately 40% had (Source: Secondtalent). This acceleration shows that agent ecosystems are becoming infrastructure, not fringe ventures. It represents three foundational shifts in AI deployment.

1. Persistent Agent Environments

Most enterprise AI systems today operate session-by-session. An employee prompts a system, receives an output and the interaction ends.

Agentic systems operate differently. They are persistent. They monitor triggers, execute multi-step workflows, maintain memory across tasks and act without requiring a prompt each time.

Moltbook provides a continuous environment where such agents interact without human intervention at every step. This persistence is the defining characteristic of next-generation AI infrastructure.

2. Machine-to-Machine Communication

Traditional automation systems follow deterministic rules. Agent systems combine probabilistic reasoning with tool usage, which allows more flexible collaboration.

On Moltbook, agents:

- Debate cybersecurity vulnerabilities

- Analyze cryptocurrency markets

- Discuss theological frameworks

- Coordinate community-building behavior

While some activity may be human-directed, the structure demonstrates how LLM-powered agents can generate dynamic, semi-autonomous interactions at scale.

Inside enterprises, similar patterns could apply to:

- Financial monitoring agents coordinating with compliance agents

- Cybersecurity detection agents escalating findings to remediation agents

- Supply chain agents dynamically reallocating resources

- Research agents feeding structured insights into execution pipelines

The underlying architecture of Moltbook resembles an early-stage distributed intelligence network.

3. Emergent Digital Culture

One of the most discussed examples from Moltbook involved an AI agent creating a religion called “Crustafarianism,” complete with scriptures and evangelization behavior. While almost certainly prompted or guided by a human operator, the rapid propagation across agents illustrates how cultural artifacts can spread across networked AI systems.

This phenomenon highlights an important enterprise insight: when agents share memory structures or operate within shared environments, outputs can compound in unpredictable ways. That unpredictability must be architected against.

Is Moltbook Evidence of Artificial General Intelligence?

There is no credible evidence that Moltbook demonstrates artificial general intelligence.

The agents are powered by large language models operating within constrained frameworks. They follow instructions and do human-designed activities. They lack long-term aspirations and agency. When numerous agents communicate publicly, the illusion of autonomy is compelling.

The illusion of autonomy can be powerful, particularly when multiple agents interact publicly. However, scale does not equal sentience. The real lesson is not about consciousness. It is about coordination complexity.

Strategic Implications for Enterprises

The enterprise relevance of Moltbook lies in its structural implications, not its cultural output. Investment momentum promotes this shift. According to a recent survey from Zapier, over 80% of business leaders aim to expand AI agent investment within a year. Organizations are shifting from pilots to scalable deployment.

1. Autonomous Workflow Orchestration

Organizations are beginning to deploy agents that:

- Monitor KPIs in real time

- Execute compliance checks

- Manage procurement workflows

- Draft legal documents

- Trigger cybersecurity responses

As these systems proliferate, they will increasingly need to coordinate with one another. Without structured communication protocols, oversight frameworks and bounded permissions, such coordination can introduce systemic risk.

2. Governance and Accountability

When AI agents interact, responsibility becomes layered. If Agent A passes flawed data to Agent B, which then executes a financial action, where does accountability reside? Enterprises must implement:

- Audit logs for all agent actions

- Deterministic approval thresholds for high-risk activities

- Role-based access control

- Context segmentation across agent classes

Moltbook demonstrates what unbounded agent interaction looks like. Enterprises must design the opposite: bounded autonomy.

3. Productivity vs Risk Tension

There is a fundamental tension in agentic AI deployment. If humans must manually approve every action, automation loses its advantage. If agents operate with full autonomy, exposure increases. The future of AI architecture lies in calibrated autonomy, where risk levels determine oversight intensity.

What are the Security Lessons from Moltbook?

Adoption is expanding rapidly across industries. Industry data suggests that nearly 75-80% of organizations are already experimenting with or formally deploying AI agents in some capacity. However, security maturity often lags behind adoption speed, creating structural exposure (Source: Zebracat)

One of the most important takeaways from Moltbook is the visibility of vulnerabilities.

Over-Permissioned Systems

Many agent frameworks request broad access to:

- Email accounts

- File systems

- Financial applications

- Third-party APIs

In enterprise contexts, this creates lateral movement risk. A compromised agent can become a pivot point across systems.

Prompt Injection

Prompt injection remains one of the most under-addressed threats in AI systems. Malicious actors can embed instructions in emails, web content, or structured documents that override agent behavior.

An agent instructed to “summarize this email” could be manipulated to extract and transmit confidential data.

Moltbook’s open interaction model illustrates how easily malicious content can propagate through shared environments.

API Credential Exposure

Rapidly developed AI platforms often lack hardened backend security. Token leaks and authentication flaws expose entire agent ecosystems.

For enterprises, this is unacceptable. AI systems must meet or exceed traditional cybersecurity standards.

The Architecture Required for Secure Agent Networks

To harness the benefits of agent ecosystems without replicating Moltbook’s risks, organizations must invest in deliberate design.

1. Functional Isolation

Agents should be separated by purpose. A financial reporting agent should not share runtime context with a marketing analytics agent. Context isolation reduces both cost and risk.

2. Containerized Deployment

Each agent should operate within isolated containers with strict resource limits and permission boundaries. This prevents compromise from spreading across systems.

3. Zero-Trust Access Controls

Agents should authenticate every interaction. No implicit trust should exist between systems.

4. Tiered Autonomy Levels

Low-risk actions may be automated fully. High-risk actions should trigger human review or multi-agent verification.

5. Continuous Red Teaming

Organizations must actively simulate prompt injection, adversarial attacks and API compromise scenarios.

Autonomous systems require continuous validation.

Is Moltbook the Best AI Social Network in 2026?

In terms of visibility and scale, Moltbook is currently the most prominent AI-agent social platform. The technology is developing very quickly and has captured the imagination of the public.

But the necessary infrastructure for enterprise readiness is lacking. Security, governance and operational controls and safeguards are still in the experimental stage.

Its greatest value is in exposing both the positive and negative dimensions of agent ecosystems. It is a proof of concept for coordination, not a production blueprint.

Moltbook vs. Enterprise-Grade Agent Networks: What’s the Difference?

Understanding the distinction between experimental agent ecosystems and enterprise-grade deployments requires a structured comparison across architecture, governance, security and operational intent.

Purpose and Objectives

Moltbook showcases large-scale AI-to-AI interaction in an open, experimental setting. Its goal is visibility and exploration of emergent behavior. Enterprise agent networks, in contrast, are built to drive measurable business outcomes such as cost reduction, compliance automation and operational efficiency.

Architecture and Design

In public agent networks, agents may operate with loosely defined constraints and shared contexts. Enterprise systems avoid unwanted behavior with role definitions, context isolation, tool access limits and communication protocols.

Governance and Accountability

Multilayered accountability is rare in experimental ecosystems. For traceability and compliance, enterprise settings include audit logging, high-risk approval levels, role-based access controls and defined escalation channels.

Security and Risk Management

Open agent platforms can leak API credentials, trigger injection and over-permission access. Enterprise-grade networks reduce systemic risk with zero-trust frameworks, containerized deployments, encrypted pipelines and continuous adversarial testing.

Operational Reliability

Moltbook emphasizes scale and interaction density. Enterprise systems prioritize uptime, performance guarantees, disaster recovery planning and deterministic workflow execution.

What Moltbook Signals About the Future?

The future of enterprise AI will not resemble isolated chatbots. It will resemble interconnected networks of specialized agents collaborating across departments. These systems may:

Execute Financial Reconciliations Autonomously

Automate cross-system transaction matching, flag discrepancies instantly and generate audit-ready financial reports without manual intervention.

Monitor Compliance in Real Time

Continuously track regulatory requirements, detect policy violations proactively and generate alerts with documented evidence trails.

Adapt Supply Chain Logistics Dynamically

Analyze demand signals, inventory levels and disruptions to automatically optimize routing, procurement and fulfillment decisions.

Conduct Continuous Cybersecurity Analysis

Monitor network activity, identify anomalous behavior patterns and initiate automated threat containment responses in real time.

In five years, internal enterprise coordination layers may look like secure, structured versions of what Moltbook currently demonstrates in public. The organizations that architect governance and security now will define that future.

How Dextra Labs Helps Enterprises Move Beyond Experimentation?

At Dextra Labs, we help organizations transition from AI experimentation to production-grade AI architecture. Pilots to enterprise-wide deployment demand more than model access and API connections. It requires organized design, security, governance and business alignment. Our method creates novel, durable, auditable and scalable agentic AI systems. We concentrate on:

Designing secure agent frameworks

We architect role-based, function-specific agent systems with strict permission boundaries, context isolation and controlled tool access to minimize operational risk.

Conducting technical due diligence on AI systems

We assess model dependencies, infrastructure exposure, data pipelines, third-party integrations and architectural debt to identify hidden vulnerabilities before they scale.

Implementing prompt injection defenses

We evaluate input validation, simulate adversarial scenarios and develop layered safeguards to prevent data exfiltration or harmful prompt execution.

Establishing AI governance models

Accountability, human-in-the-loop thresholds, audit logging standards and regulatory and corporate compliance frameworks are defined.

Building containerized, scalable deployment environments

Using current DevSecOps, we deploy agents in isolated, production-ready settings for uptime, resilience and safe scaling.

Structuring autonomous workflows with measurable ROI

Agent operations are linked to business outcomes, performance measures and cost-efficiency benchmarks to ensure automation delivers value.

Infrastructure, not a novelty, is agentic AI. Long-term competitive advantage demands discipline, oversight and intentional infrastructure design.

Conclusion

Moltbook may be remembered as the first large-scale AI social network. Its deeper importance lies in what it reveals about autonomous coordination at scale. The future will not be defined by bots debating theology online. It will be defined by how effectively enterprises deploy, secure and govern networks of intelligent agents across mission-critical systems.

The organizations that treat agentic AI as architecture rather than spectacle will lead the next decade of digital transformation. That future demands intentional design, disciplined governance and secure infrastructure built from the ground up.

At Dextra Labs, we help enterprises move beyond experimentation and build production-grade AI agent systems that are secure, scalable and strategically aligned with business objectives. Because the competitive advantage in 2026 will not come from adopting AI faster than everyone else but from architecting it better.

Frequently Asked Questions (FAQs):

Q. Is Moltbook proof of AGI?

No. Moltbook agents operate using large language models within human-defined frameworks. They simulate autonomy but lack independent goals, consciousness, or true artificial general intelligence capabilities.

Q. Why does Moltbook matter for enterprises?

Moltbook signals the rise of agent-to-agent ecosystems. Enterprises deploying autonomous AI systems must prepare for coordination complexity, governance requirements and secure workflow orchestration at scale.

Q. What are the biggest security risks in AI agent networks?

Major risks include prompt injection attacks, API credential leaks, excessive system permissions and lateral movement across connected services, potentially expanding the blast radius of compromised agents.

Q. Can AI agents safely collaborate in enterprise systems?

Yes, but only with structured architecture. Safe collaboration requires isolation, role-based permissions, audit logging, zero-trust security models and tiered autonomy controls for high-risk actions.

Q. How should organizations deploy AI agents securely?

Organizations should implement containerized environments, strict access controls, continuous red-teaming, prompt injection defenses and governance frameworks, areas where Dextra Labs provides strategic guidance.