Artificial Intelligence (AI) has moved from academic research to mission-critical production systems across industries such as healthcare, finance, logistics, and retail. As AI-based applications multiply, the biggest challenge is no longer building models but running and scaling them effectively in production. This is where MLOps (Machine Learning Operations) becomes important.

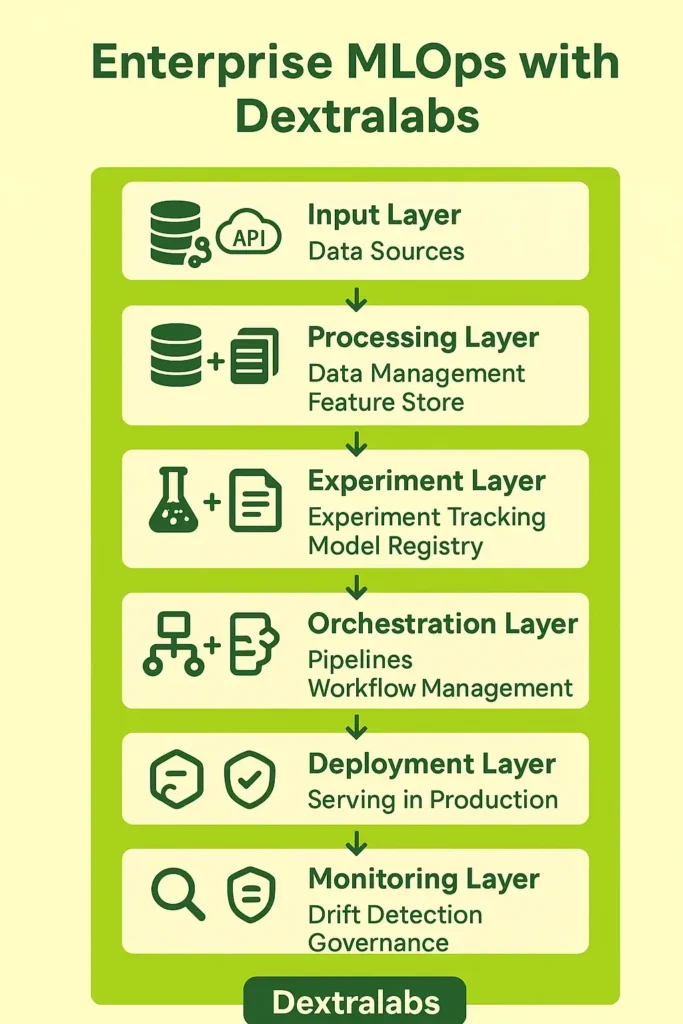

MLOps applies DevOps principles to the unique challenges of machine learning, so that you can not only train AI models but also deploy, monitor, manage, and govern them at scale. In 2025 the MLOps ecosystem has matured, and a wide range of tools and platforms is available for data version control, reproducibility, workflow orchestration, compliance, and more.

Beyond adopting individual tools, many organizations turn to AI consultancies such as Dextralabs for custom MLOps services that combine ML observability tools, ML model deployment tools, and monitoring frameworks into unified, production-grade pipelines.

In this guide, we will explore the 25 best MLOps tools in 2025, organized by category to frame how they can fit into modern AI workflows.

What is MLOps, and Why is it Important in 2025?

MLOps is best understood as the bridge between building a model in a research lab and running that same model in a live business environment. It combines DevOps principles with machine learning lifecycle management, covering every step so that AI projects can stay experimental and inventive while remaining scalable, reliable, and compliant.

MLOps solutions have never been more essential. AI projects often fail when moving from proof-of-concept to production because of some common issues, such as:

- Data drift and model decay – models lose accuracy over time as production data drifts away from the data they were trained on.

- Reproducibility – experiments are often hard to replicate exactly, even by the team that ran them.

- Governance and compliance – models must be ethical, unbiased, explainable, and compliant with applicable regulations.

- Lack of monitoring – many teams lack proper machine learning model monitoring tools to track performance.

In 2025, organizations have access to a full MLOps ecosystem: a range of MLOps tools, ML pipeline frameworks, and machine learning deployment tools that let them move fast while staying in control. But having tools available does not solve the problem on its own. The tools still need to be orchestrated and integrated, which is why AI consulting firms like Dextralabs can be strategic partners on the journey.

25 Top MLOps Tools in 2025:

MLOps has grown into a complete ecosystem, offering strong MLOps frameworks, ML pipeline tools, and model deployment platforms that support every stage of the machine learning life cycle. Here are 25 of the best MLOps tools to look out for in 2025, grouped into five key categories.

A. Data Management & Versioning

Data is the backbone of ML workflows. Active data management lets organizations keep their data traceable, reproducible, and scalable. The tools below help manage massive datasets, version data efficiently, and ensure compliance across the entire pipeline.

1. DVC (Data Version Control)

DVC is an open-source MLOps tool for dataset versioning and experiment tracking. It works like Git for machine learning projects, versioning data and models alongside code.

Key Features & Functions:

- Version Control for Data & Models – Record changes to datasets, pipelines, and ML models.

- Lightweight Storage – Share datasets without unnecessary duplication of large files.

- Reproducibility – Any experiment can be rerun with exactly the data, code, and parameters that produced it.

- Collaboration – Teams share data through common storage instead of passing files around by hand.

- Integration – Works with GitHub, GitLab, S3, Google Drive, and more.

Best For: Teams who need reproducible ML experiments with efficient collaboration without heavy infrastructure.
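
To make the "Git for data" idea concrete, here is a minimal sketch of content-addressed data versioning in plain Python. It is not DVC's implementation or API: the `add_file` and `checkout` helpers, the `.ptr.json` pointer format, and the file names are all hypothetical, chosen only to show why a small pointer file is enough to recover any earlier version of a large dataset.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def add_file(data_path: Path, cache_dir: Path) -> Path:
    """Copy a data file into a content-addressed cache and write a small pointer file."""
    content = data_path.read_bytes()
    digest = hashlib.sha256(content).hexdigest()
    cache_dir.mkdir(parents=True, exist_ok=True)
    cached = cache_dir / digest
    if not cached.exists():  # identical content is stored only once
        cached.write_bytes(content)
    pointer = data_path.parent / (data_path.name + ".ptr.json")
    pointer.write_text(json.dumps({"path": data_path.name, "sha256": digest}))
    return pointer


def checkout(pointer: Path, cache_dir: Path) -> None:
    """Restore the data file that a pointer file refers to."""
    meta = json.loads(pointer.read_text())
    restored = (cache_dir / meta["sha256"]).read_bytes()
    (pointer.parent / meta["path"]).write_bytes(restored)


workdir = Path(tempfile.mkdtemp())
cache = workdir / "cache"
dataset = workdir / "train.csv"

# Version 1 of the dataset: the pointer text is what would be committed to Git.
dataset.write_text("id,label\n1,0\n")
pointer_v1_text = add_file(dataset, cache).read_text()

# Version 2 overwrites the pointer, but both blobs remain in the cache.
dataset.write_text("id,label\n1,0\n2,1\n")
add_file(dataset, cache)

# Going back to version 1 only requires the old pointer file.
pointer = workdir / "train.csv.ptr.json"
pointer.write_text(pointer_v1_text)
checkout(pointer, cache)
restored_v1 = dataset.read_text()
```

The design choice worth noticing is that Git only ever sees the tiny pointer file, while the heavy data lives in a separate cache keyed by its hash.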

2. Pachyderm

Pachyderm is built for automated, version-controlled, event-driven data pipelines. It provides full data lineage, so companies can trace where data came from, how it was transformed, and where it is used.

Key Features:

- Data Lineage & Traceability – Track every step of a transformation from ingestion to deployment

- Pipeline Automation – Automated data wrangling and preprocessing can minimize manual errors

- Scalability – Works with large, distributed data pipelines

Best For: Companies needing compliance, regulatory traceability, and completely automated pipelines.

3. LakeFS

LakeFS brings Git-like features to object storage and data lakes. It lets data teams commit, branch, and merge datasets safely, much as software engineers manage code.

Key Features & Functions:

- Git-Like Experience – Commit, branch, and merge versions of data.

- Safe Experimentation – Test your models on new datasets without touching production.

- Data Rollback – Roll back to previous stable versions immediately if an error occurs.

- Supports Petabyte Scale – Can support enterprise-scale datasets.

- Collaboration – Teams can work in isolated branches on top of the same underlying data lake.

Best For: Larger organizations that have big data lakes and are looking for safe, versioned experimentation when testing out new data and models.

4. Delta Lake

Delta Lake is an open-source storage layer, originally developed by Databricks, that adds ACID transactions to data lakes and makes them reliable enough for production use.

Key Features & Functions:

- ACID Transactions – Keeps concurrent reads and writes consistent, so tables are never left in a partial state.

- Schema Enforcement & Evolution – Rejects writes that do not match the table schema, while allowing controlled schema changes.

- Time Travel – Allows users to query and reproduce previous versions of data.

- Scalable ML Workflows – Turns raw data lakes into a dependable foundation for ML.

- Spark and Databricks Integration – Built and optimized for distributed data processing.

Best For: Enterprises running large-scale ML pipelines with stringent requirements on reliability, compliance, and reproducibility of historical versions of the data.
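
Time travel is easier to reason about with a toy model. The sketch below assumes a table whose state is derived by replaying an append-only commit log; the `VersionedTable` class and its methods are hypothetical and are not Delta Lake's API or on-disk format. It only illustrates why keeping an ordered log makes earlier versions queryable.

```python
from dataclasses import dataclass, field


@dataclass
class VersionedTable:
    """Toy table whose state is derived by replaying an append-only commit log."""

    log: list = field(default_factory=list)  # each entry: ("add" | "remove", row)

    def commit(self, action: str, row: dict) -> int:
        if action not in ("add", "remove"):
            raise ValueError(f"unknown action: {action}")
        self.log.append((action, row))
        return len(self.log) - 1  # version number of this commit

    def read(self, version=None) -> list:
        """Return the rows as of `version` (the latest version when omitted)."""
        last = len(self.log) - 1 if version is None else version
        rows = []
        for action, row in self.log[: last + 1]:
            if action == "add":
                rows.append(row)
            else:
                rows.remove(row)
        return rows


table = VersionedTable()
v0 = table.commit("add", {"user": "a", "clicks": 3})
v1 = table.commit("add", {"user": "b", "clicks": 7})
v2 = table.commit("remove", {"user": "a", "clicks": 3})

latest = table.read()              # state after all three commits
as_of_v1 = table.read(version=v1)  # state before the removal
```

Reading `as_of_v1` reproduces the exact rows a model would have seen at that point, which is the property that matters for reproducible training.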

5. Feast (Feature Store)

Feast is an open-source feature store that is central to any modern ML pipeline. It is designed to make sure features are standardized, consistently used, and reusable across training and inference.

Top Features & Functions:

- Centralized Features – Keeps all ML features in one place.

- Consistency Across Environments – The feature definitions used for training are the same ones served in production.

- Reusability – Teams can share features, which removes duplicated work and speeds up development.

- Real-Time Serving – Handles online use cases as well as batch inference.

- Pipeline Integration – Works alongside common ML tooling such as TensorFlow, Spark, and PyTorch.

Best For: Companies wanting to standardize ML features and reduce drift between training and production models.
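
The consistency guarantee of a feature store comes down to one rule: training and serving must compute features from the same definitions. The following sketch shows that rule in plain Python. It does not use Feast, and the `FEATURES` registry, the feature functions, and the sample users are made up for illustration.

```python
from datetime import datetime


def days_since_signup(user: dict, as_of: datetime) -> int:
    return (as_of - user["signup"]).days


def orders_per_day(user: dict, as_of: datetime) -> float:
    days = max(days_since_signup(user, as_of), 1)  # avoid division by zero
    return user["orders"] / days


# Single registry of feature definitions shared by training and serving.
FEATURES = {
    "days_since_signup": days_since_signup,
    "orders_per_day": orders_per_day,
}


def feature_vector(user: dict, as_of: datetime) -> dict:
    return {name: fn(user, as_of) for name, fn in FEATURES.items()}


# Made-up users for illustration only.
users = [
    {"id": 1, "signup": datetime(2025, 1, 1), "orders": 10},
    {"id": 2, "signup": datetime(2025, 1, 11), "orders": 5},
]
as_of = datetime(2025, 1, 21)

training_rows = [feature_vector(u, as_of) for u in users]  # batch / offline path
online_row = feature_vector(users[0], as_of)               # single-request / online path
```

Because both paths call `feature_vector`, a change to a feature definition reaches training and production together, which is what keeps training-serving skew out.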

B. Experiment Tracking & Model Management

Experiment tracking prevents wasted effort and lost progress. These tools record parameters, metrics, and results to support reproducibility, collaboration, and centralized management, which eases the transition from research to deployment. They also integrate with Python-based MLOps workflows and with broader MLOps platforms.

6. MLflow

MLflow has become one of the most popular MLOps frameworks because it covers experiment tracking, a model registry, and deployment. With MLflow, teams can log experiments, compare results, and push models into production. Because it is open source and integrates with much of the ML ecosystem, it has become a de facto standard for enterprises scaling AI.

Key Features & Functions:

- Experiment Tracking – Log parameters, metrics, and artifacts

- Model Registry – Manage versions of models, including staging/production

- Deployment – APIs to support pushing models into production

- Integrations – TensorFlow, PyTorch, Scikit-learn, and all three major cloud platforms

- Open-source flexibility – Suits teams of any size, from start-ups to multinationals

Best For: Teams looking for a complete open-source package of experiment tracking, model registry, and deployment.
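
Stripped of its UI and storage backends, experiment tracking is a small data model: each run records its parameters and metrics so that runs can be compared later. The sketch below illustrates that model with a hypothetical `Tracker` class. It is not the MLflow API, and the accuracy numbers are placeholders rather than real results.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field


@dataclass
class Run:
    params: dict
    metrics: dict = field(default_factory=dict)
    run_id: str = field(default_factory=lambda: uuid.uuid4().hex[:8])
    started_at: float = field(default_factory=time.time)


class Tracker:
    """Keeps every run so results can be compared and exported later."""

    def __init__(self):
        self.runs = []

    def start_run(self, **params) -> Run:
        run = Run(params=params)
        self.runs.append(run)
        return run

    def best(self, metric: str, higher_is_better: bool = True) -> Run:
        scored = [r for r in self.runs if metric in r.metrics]
        pick = max if higher_is_better else min
        return pick(scored, key=lambda r: r.metrics[metric])

    def export(self) -> str:
        return json.dumps([asdict(r) for r in self.runs], indent=2)


tracker = Tracker()
# Placeholder scores standing in for real evaluation results.
for lr, acc in [(0.1, 0.81), (0.01, 0.88), (0.001, 0.84)]:
    run = tracker.start_run(learning_rate=lr)
    run.metrics["val_accuracy"] = acc

best_run = tracker.best("val_accuracy")
```

A real tracking server adds persistence, artifact storage, and a registry on top, but the core question it answers is the same as `tracker.best(...)`: which configuration produced the best result, and can we find it again?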

7. Weights & Biases (W&B)

W&B is fast becoming an industry-standard MLOps platform for experiment tracking, visualization, and collaboration. It gives teams real-time experiment logging, hyperparameter tuning, and dashboards that are easy to share with stakeholders, which suits organizations that want to speed up research while keeping it transparent. It is also easy to use and scalable, making it a good option for teams that want to get started quickly.

Key features & functions:

- Real-Time Tracking – Watch your metrics while you train.

- Hyperparameter Optimization – Automatically tune your experiments.

- Rich Visualizations – Interactive dashboards that can be easily shared with stakeholders.

- Collaboration Tools – Results can be shared with team members instantly and easily.

- Seamless Integrations – Works with PyTorch, Keras, TensorFlow, and Hugging Face.

Best for: Research teams or organizations that want high-quality visualizations and the ability to collaborate.
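
Automated hyperparameter tuning can be pictured as a loop that tries configurations and keeps the best one. The sketch below uses an exhaustive grid search, which is the simplest strategy and not necessarily what any given platform runs. The `validation_loss` function is a hypothetical stand-in for training and evaluating a model.

```python
import itertools


def validation_loss(learning_rate: float, batch_size: int) -> float:
    """Hypothetical stand-in for training a model and returning its validation loss."""
    return (learning_rate - 0.01) ** 2 * 1e4 + abs(batch_size - 64) / 64


search_space = {
    "learning_rate": [0.001, 0.01, 0.1],
    "batch_size": [32, 64, 128],
}

# Try every combination in the grid and record its loss.
trials = []
for values in itertools.product(*search_space.values()):
    config = dict(zip(search_space.keys(), values))
    trials.append((validation_loss(**config), config))

best_loss, best_config = min(trials, key=lambda trial: trial[0])
```

Grid search scales poorly as the number of hyperparameters grows, which is why tuning services usually also offer random or Bayesian strategies. The bookkeeping of trials and the final comparison stay the same.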

8. Neptune.ai

Neptune.ai is a simple yet powerful platform for managing model metadata and results. It gives teams a centralized hub to log experiments, monitor progress, and collaborate. Its flexibility lets organizations structure metadata around their own models without significant configuration overhead.

Key Features and Functions:

- Central Metadata Store – Track experiments, metrics, and other artifacts.

- Customizable Dashboards – Select, customize, and create views for many workflows.

- Versioning – Keep track of datasets, code, and models.

- Collaboration – Share experiments across many departments.

- Lightweight Integrations – Easily integrated with lots of ML libraries.

Best suited for: Small and medium-sized teams that want a simple, flexible, and lightweight hub for model metadata.

9. Comet ML

Comet ML is an all-in-one platform for experiment tracking, model management, and visualization. It streamlines workflows through integrations with popular ML libraries, so data scientists do not have to wire things up themselves. Real-time dashboards let them inspect models and make decisions quickly.

Key Features & Functions:

- End-to-End Tracking – Parameters, metrics, predictions.

- Model Management – Registry with versioning.

- Visualization Tools – Custom charts & analytics.

- Team Collaboration – Share projects and compare runs.

Best For: Teams wanting a single platform that covers tracking, model registry, and visualization.

10. AimStack

AimStack is an open-source alternative for experiment tracking that is gaining momentum. It provides a clean, developer-friendly interface for logging and visualizing experiments. Startups and research teams often prefer it over enterprise-grade platforms for its simplicity, open-source model, and low cost.

Key Features & Functions:

- Developer-friendly UI – Simple, fast, and clean.

- Fast, easy experiment logging – Record hyperparameters, metrics, and outputs.

- Visual Analytics – Compare experiments side by side.

- Lightweight installation – Needs very little infrastructure, which suits small-scale use and trials.

Best For: Small companies, research enthusiasts, and developers wanting a lean, open-source, and budget-conscious platform.

C. Workflow Orchestration & Pipelines

Orchestration platforms automate the steps of a machine learning workflow. They cut repetitive manual tasks, reduce errors, and let organizations scale their AI solutions across teams and systems.

11. Kubeflow

Kubeflow is an open-source MLOps platform purpose-built for Kubernetes. It enables teams to build, deploy, and manage machine learning workflows at scale. Kubeflow covers hyperparameter tuning, distributed model training, real-time serving, and monitoring, so ML projects can be made production-ready entirely within a Kubernetes environment.

Features and Functions:

- Natively built on Kubernetes with containerized ML pipelines.

- Supports distributed training jobs such as TFJob, PyTorchJob, and MPIJob.

- Provides Katib, a sophisticated hyperparameter tuning and optimization tool.

- Covers the entire machine learning lifecycle from training to serving and monitoring

- Highly scalable and good for enterprise workloads.

Best For: Teams using Kubernetes or cloud-native environments that need an enterprise-grade, scalable, production-level ML orchestration tool.

12. Apache Airflow

Airflow is a workflow automation and scheduling tool that models each workflow as a Directed Acyclic Graph (DAG) of tasks. Although Airflow is not specific to ML, it is commonly used in MLOps pipelines to schedule and orchestrate the individual steps.

Features & Functions:

- DAG-based architecture allows for flexible pipeline design.

- Strong scheduling & retries.

- Plugin ecosystem with connectors to databases, cloud storage, and ML libraries.

- Extensible with custom Python operators for tailored workflows.

- Strong community and enterprise adoption.

Best For: Organizations that need general-purpose orchestration of workflows for ML and data engineering pipelines.
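
The core job of a DAG-based orchestrator is to run each task only after everything it depends on has finished. The sketch below shows that ordering step with Python's standard-library `graphlib` (Python 3.9+). It does not use Airflow, and the task names and the `run_task` helper are hypothetical.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks that must finish before it can start.
dag = {
    "extract": set(),
    "validate": {"extract"},
    "featurize": {"validate"},
    "train": {"featurize"},
    "evaluate": {"train"},
    "report": {"validate", "evaluate"},
}


def run_task(name: str, log: list) -> None:
    """Stand-in for real work; here we only record that the task ran."""
    log.append(name)


execution_log = []
for task in TopologicalSorter(dag).static_order():
    run_task(task, execution_log)
```

`TopologicalSorter` raises `graphlib.CycleError` if the dependencies contain a cycle, which is the "acyclic" part of a DAG being enforced. A production scheduler layers retries, schedules, and distributed workers on top of this ordering.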

13. Metaflow

Metaflow, originally developed at Netflix, abstracts away the underlying infrastructure so you can focus on designing ML workflows. It has a human-centric API that lets scientists and engineers create scalable workflows without extensive DevOps experience.

Features & Functions:

- Easy-to-use Python API to define workflows.

- Built-in versioning for code, data, and models.

- Integration with AWS services such as AWS Batch and Step Functions.

- Built-in scaling and resource management.

- Local-to-cloud portability with very little configuration.

Best For: Data scientists who prioritize ease of use and productivity and want to spend less time on DevOps work.

14. Flyte

Flyte is a production-grade orchestration platform for ML workflows. It supports reproducibility and parallelism, and facilitates collaboration across ML teams.

Features & Functions:

- Excellent support for parallel executions and DAGs.

- Versioned, reproducible workflows with strict type-safety.

- Native Kubernetes integration for scalability.

- Efficient handling of massive workloads.

- Open-source, with enterprise adoption across fintech, healthcare, and tech.

Best For: Large enterprises managing complex, large-scale ML workflows that demand high reliability and reproducibility.

15. Prefect

Prefect is a relatively new orchestration solution that is flexible, fault-tolerant, and observable. It enables teams to define ML workflows in code and offers granular observability features.

Features & Functions:

- Easily define workflows through a Python-first API.

- Cloud and open-source deployments available.

- Built-in functionality for handling failures, retries, and timeouts.

- Strong observability through logs, alerts, and dashboards.

- Lightweight enough for startups and mid-size teams.

Best For: Teams looking for a lightweight but powerful orchestration solution that comes with great observability and flexibility.
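
Built-in failure handling mostly means retrying a flaky step a bounded number of times before giving up. Here is a minimal sketch of that behavior as a plain Python decorator. The `with_retries` decorator and the `flaky_download` task are hypothetical and are not Prefect's API.

```python
import functools
import time


def with_retries(max_attempts: int = 3, base_delay: float = 0.0):
    """Retry the wrapped function, doubling the wait after each failed attempt."""

    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            for attempt in range(1, max_attempts + 1):
                try:
                    return fn(*args, **kwargs)
                except Exception:
                    if attempt == max_attempts:
                        raise  # out of attempts: surface the original error
                    time.sleep(base_delay * 2 ** (attempt - 1))  # exponential backoff

        return wrapper

    return decorator


calls = {"count": 0}


@with_retries(max_attempts=3, base_delay=0.0)
def flaky_download() -> str:
    """Simulated task that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("transient failure")
    return "dataset.csv"


result = flaky_download()
```

The delay is set to zero here so the example runs instantly. In a real pipeline a non-zero `base_delay` gives a struggling upstream service time to recover.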

D. Deploying and Serving

Deployment is where trained models start to deliver business value. The tools in this category make it easy to package, scale, and serve models in real-world environments, with support for low-latency inference, Kubernetes integration, and production-ready AI across sectors.

16. Seldon Core

Seldon Core is an open-source ML model deployment tool built on Kubernetes, which lets it scale to and manage thousands of models in live production environments.

Key Features & Capabilities:

- Kubernetes-native tooling for easy scaling.

- Advanced monitoring and logging for observability.

- Supports A/B testing, canary, and shadow deployments.

- Compatible with a variety of machine learning frameworks (TensorFlow, PyTorch, XGBoost, etc.).

- Strong governance and compliance features built in.

Best for: Large organizations and regulated industries looking for scalable, reliable, compliant deployments.
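
A canary deployment sends a small, stable share of traffic to a new model version while the rest stays on the current one. The sketch below shows one common way to split traffic deterministically, by hashing a request or user ID. The `route` function and the ID format are assumptions for illustration and do not reflect how Seldon Core implements routing.

```python
import hashlib


def route(request_id: str, canary_percent: int) -> str:
    """Send a stable slice of traffic to the canary based on a hash of the ID."""
    bucket = int(hashlib.sha256(request_id.encode()).hexdigest(), 16) % 100
    return "canary" if bucket < canary_percent else "stable"


# Simulate 10,000 requests with a 10% canary share.
assignments = [route(f"user-{i}", canary_percent=10) for i in range(10_000)]
canary_share = assignments.count("canary") / len(assignments)
```

Hashing rather than random sampling means the same user always lands on the same model version, which keeps their experience consistent and makes the two groups easier to compare.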

17. KFServing / KServe

KServe (formerly KFServing) is a Kubernetes-native model serving framework designed to serve models from multiple ML frameworks.

Highlights & Features:

- Unified API for serving models from various frameworks, including TensorFlow, PyTorch, XGBoost, and ONNX.

- Autoscaling capabilities, including scale-to-zero for cost savings.

- Multi-model serving with GPU sharing.

- Out-of-the-box model explainability and monitoring.

Best For: Enterprises invested in Kubernetes ecosystems that need flexible, framework-agnostic deployment at scale.

18. BentoML

BentoML makes it easy to deploy your machine learning models by converting them into deployable APIs and microservices.

Key features & functions:

- Package models into containers with very little effort, using familiar DevOps tools.

- Native integration with Docker, Kubernetes, and cloud providers.

- Manage and version models in the Model Store.

- Command Line Interface and Python SDK to iterate quickly.

- Allows for both batch and online inference.

Best for: Startups and mid-size teams looking for fast, developer-friendly deployments with minimal infrastructure overhead.

19. TorchServe

TorchServe is a model serving framework for PyTorch, developed by AWS in collaboration with the PyTorch team at Meta.

Key Features & Functions:

- Multi-model serving with dynamic model management.

- Metrics, logging, and versioning are natively supported.

- Inference optimized for low-latency serving on both CPU and GPU.

- Inference handlers can be customized.

- Can easily scale on AWS or Kubernetes environments.

Best For: Teams that use PyTorch in both research and production and need seamless deployment workflows.

20. TFX (TensorFlow Extended)

TFX is Google’s production-grade ML framework for building and deploying TensorFlow pipelines end to end.

Key Features & Capabilities:

- End-to-end pipeline: data ingestion, data validation, model training, model deployment, and model monitoring.

- Tight integration with the complete TensorFlow ecosystem.

- Model validation in the pre-production stage.

- Horizontal scaling with TensorFlow Serving and Kubernetes.

- Native integrations with Google Cloud AI services.

Best For: Enterprises primarily using TensorFlow as their ML stack, looking for enterprise-grade reliability and monitoring.

E. Monitoring, Governance & Compliance

No deployment is complete without ML model monitoring tools and ML observability tools. Platforms like Arize AI, Fiddler AI, Evidently AI, WhyLabs, and Truera offer machine learning monitoring tools that detect anomalies, bias, and drift. These solutions represent the future of responsible AI, ensuring fairness and compliance.

21. Arize AI

Arize AI is an enterprise-grade monitoring platform that gives real-time visibility into ML models. It automatically detects and surfaces data drift, concept drift, and model decay, so you can troubleshoot quickly.

Features & Functions:

- Real-time drift detection (data and model).

- Dashboards for bias and fairness differences across demographic groups.

- Interactive dashboards for performing root-cause analysis of your models.

- Integration with leading ML frameworks.

- Automatically alerts you when model performance deteriorates.

Best For: Enterprises needing real-time monitoring and root cause analysis of their production models in industries such as e-commerce, finance, and logistics.

22. Fiddler AI

Fiddler AI focuses on explainability, governance, and compliance, all of which are critical needs in regulated industries. It provides insight into model behavior and simplifies the auditing process.

Features & Functions:

- Model explainability (SHAP, LIME, custom methods).

- Bias detection and fairness analysis.

- Governance reports for regulatory audits.

- Decision provenance for risk management.

- Enterprise-ready compliance workflows.

Best For: Regulated industries (healthcare, BFSI, insurance) needing explainability and compliance for strict auditing requirements.

23. Evidently AI

Evidently AI is an open-source monitoring tool for identifying data and model drift. It produces interactive visual reports that help teams debug problems earlier.

Features & Functions:

- Drift-detection dashboards that work out of the box.

- Metrics for data quality, data stability, and prediction drift.

- Monitoring with customizable templates.

- Lightweight, and integrates easily with Jupyter and CI/CD.

- Open source allows flexibility and community support.

Best For: Start-ups and mid-sized teams looking for an economical open-source monitoring solution.
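
To show what a drift metric actually computes, here is a minimal sketch of the Population Stability Index (PSI), one widely used measure of how far a feature's production distribution has moved from its training distribution. This is a generic illustration rather than Evidently's implementation; the `psi` function, the bin count, and the synthetic data are assumptions.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a reference sample and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data: a reference sample and the same sample shifted by +3.
reference = [i / 100 for i in range(1000)]
shifted = [x + 3 for x in reference]

psi_same = psi(reference, reference)
psi_shifted = psi(reference, shifted)
```

A commonly quoted rule of thumb treats PSI above roughly 0.25 as a significant shift. That threshold is a convention rather than a guarantee, so it is worth calibrating against your own data.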

24. WhyLabs

WhyLabs offers scalable, continuous observability for ML models. Its proactive alerts and anomaly detection help enterprises stay ahead of ML failures.

Features & Functions:

- Enterprise-grade monitoring at scale with support for hundreds of ML models.

- Automated anomaly detection with alerts to notify in real time.

- End-to-end observability (inputs, predictions, outcomes).

- Strong support for cloud and hybrid environments.

- Developer integrations with the existing ML stack (Python SDK, APIs).

Best for: Enterprises managing AI at scale (dozens to hundreds of models) that require always-on monitoring with proactive alerts.
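
Automated anomaly detection on a monitored metric can be as simple as comparing each new value with its recent history. The sketch below flags points that sit far from a trailing-window mean. It is a generic z-score check, not WhyLabs' method, and the `find_anomalies` function, window size, threshold, and sample data are all made up for illustration.

```python
import statistics


def find_anomalies(values, window=7, threshold=3.0):
    """Flag indices whose value is far from the mean of the preceding window."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window : i]
        mean = statistics.fmean(history)
        stdev = statistics.pstdev(history)
        if stdev == 0:
            continue  # flat history: the z-score is undefined, so skip it
        if abs(values[i] - mean) / stdev > threshold:
            anomalies.append(i)
    return anomalies


# Made-up daily null rate (%) for one input feature, with a spike on day 10.
null_rate = [1.0, 1.2, 0.9, 1.1, 1.0, 1.3, 0.8, 1.1, 1.0, 1.2, 9.5, 1.1, 1.0]
anomalous_days = find_anomalies(null_rate)
```

A trailing window lets the baseline adapt to slow, legitimate changes while still catching sudden jumps. The trade-off is that a spike temporarily inflates the baseline for the days that follow.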

25. Truera

Truera offers model observability, bias detection, and explainability. It allows teams to build trustworthy AI systems that are fair and compliant with regulations.

Features & Functions:

- Bias and fairness testing before deployment.

- Post-deployment monitoring to promote ethical AI.

- Explainability dashboards to make decisions transparent.

- Governance and compliance reporting.

- Root-cause analysis of drift and errors.

Best For: Enterprises developing a trustworthy, ethical AI system with high fairness and compliance requirements, especially for customer-facing applications.
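
Bias testing usually starts with a simple group-level comparison. The sketch below computes the demographic parity gap, which is the difference in positive-prediction rates between groups. It is one of several fairness metrics and is shown here as a generic example, not as Truera's method; the function names and the sample predictions are hypothetical.

```python
from collections import defaultdict


def approval_rates(records):
    """Share of positive predictions per group, from (group, prediction) pairs."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, prediction in records:
        totals[group] += 1
        approved[group] += prediction
    return {group: approved[group] / totals[group] for group in totals}


def demographic_parity_gap(records) -> float:
    """Difference between the highest and lowest group approval rates."""
    rates = approval_rates(records)
    return max(rates.values()) - min(rates.values())


# Made-up (group, predicted_approval) pairs for illustration only.
predictions = (
    [("A", 1)] * 60 + [("A", 0)] * 40 + [("B", 1)] * 45 + [("B", 0)] * 55
)
rates = approval_rates(predictions)
gap = demographic_parity_gap(predictions)
```

A gap of zero means every group receives positive predictions at the same rate. What counts as an acceptable gap depends on the use case and the applicable regulation, so the metric informs a review rather than replacing it.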

This list of MLOps tools covers the full AI lifecycle, from data management and versioning to model deployment, monitoring, and governance. These tools are the essential building blocks of modern MLOps platforms and services, allowing organizations to scale AI responsibly.

It’s worth noting that these tools are only building blocks for your AI ecosystem. Integrating them into a cohesive production system remains a struggle for most organizations. This is where MLOps consultancies like Dextralabs can make a real difference by helping organizations coordinate and scale these tools into a sustainable, compliant AI ecosystem.

How Dextralabs Helps Enterprises Operationalize AI

Even the best MLOps platforms and vendors do not, by themselves, guarantee enterprise-wide success. When moving from POC to production, organizations consistently face significant challenges with integration, governance, scalability, and ongoing health monitoring.

Selecting the right tools is just the beginning. Operationalizing a tool, including any necessary configuration, requires strategic, hands-on expertise and an enterprise-grade framework of its own. Without that expertise, even the most advanced platforms for deploying machine learning models can fall short in real-world enterprise environments.

This is where Dextralabs makes significant contributions.

Dextralabs’ Role in MLOps & LLMOps:

Dextralabs not only provides tools but also hosts models. We offer customers true end-to-end operationalization of AI and LLMs. Our services bridge the gap between research prototypes and production-ready systems:

- LLM Deployment – Hosting large language models as highly scalable, secure, and highly available enterprise-grade systems.

- LLM Evaluation – Rigorous testing for accuracy, fairness, compliance, and safety to ensure models are enterprise-ready.

- Prompt Consulting & Optimization – Strategic tuning of prompts for greater efficiency, lower cost, and more reliable outputs in real-world use.

- AI Agent Development – Building and deploying tailored AI agents that automate workflows, support decisions, and assist with business operations.

- Custom AI Pipelines – Integrated custom pipelines that combine MLOps solutions and LLMOps frameworks into low-latency, globally available, enterprise-grade data, training, deployment, and monitoring processes.

Why Enterprises Rely on Dextralabs

Enterprises trust Dextralabs because we offer more than just tools: we deliver outcomes. They rely on us for:

- Fast implementation, backed by proven expertise across industries including finance, healthcare, retail, and SaaS.

- End-to-end support, from research and implementation through monitoring and scaling.

- Governance, security, and compliance, so that our AI solutions hold up from both a regulatory and an ethical perspective.

- Future-ready strategies that integrate MLOps with AI and LLMOps, forming a reliable long-term plan for a scalable enterprise.

If you’re considering implementing MLOps with enterprise LLMs, Dextralabs simplifies the transition from experimentation to production, ensuring your AI solutions are truly transformative.

Conclusion

AI is changing rapidly. MLOps frameworks and ML pipeline tools are the foundation for scaling machine learning from the lab to production. The 25 MLOps tools highlighted here are among the strongest options for data management, experiment tracking, orchestration, deployment, and monitoring in 2025.

Choosing the tools is just part of the process. Even the best MLOps tools deliver little without proper integration, governance, and strategy.

If you are an enterprise looking to move from individual tools to true production-grade AI, Dextralabs offers end-to-end consultancy in LLMOps, AI agents, and enterprise AI deployment, working with organisations to turn ML into real business value.