| Industry | Region | Client Type |
| --- | --- | --- |
| Platform Engineering / SaaS | San Francisco, USA | Growth-Stage SaaS (400 engineers) |

About the Client

The client is a Series D SaaS company in San Francisco building enterprise collaboration software. It has 400 engineers across 35 teams and ships to a customer base of 4,200+ companies. The product lives in a monorepo (TypeScript and Go backend, React frontend) and is deployed on Kubernetes.

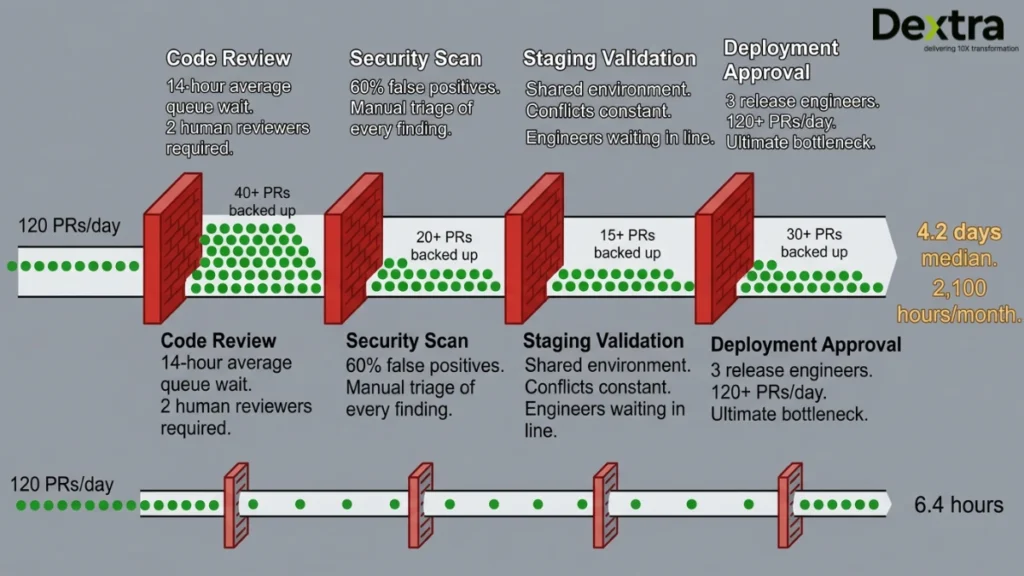

They were shipping 120+ pull requests per day. Growth was creating a paradox: the more engineers they hired, the slower the release process became, because every additional PR added to the review queue, the security scanning backlog and the deployment coordination overhead.

The Challenge: 2,100 Engineering Hours Per Month on Manual Gates

The release pipeline had four manual gates that every PR had to pass through:

- Code Review: 2 human reviewers required per PR. Average wait time: 14 hours. The review itself took 30–45 minutes, but the queue wait was killing velocity.

- Security Scan: Manual review of Snyk findings. 60% of flagged issues were false positives or accepted risks. But someone had to triage each one.

- Staging Validation: Each PR deployed to a shared staging environment. Engineers waited in line. Environment conflicts were constant.

- Deployment Approval: A release engineer manually approved and monitored each deployment. With 120+ PRs/day, the 3 release engineers were the ultimate bottleneck.

The median time from PR creation to production was 4.2 days. The engineering team was spending 2,100 hours per month on review, scanning, validation and deployment: roughly 13 full-time engineers' worth of effort (at about 160 working hours per engineer per month) that wasn’t going into building the product.

AI Agent Architecture: Four Claude Code Subagents with MCP Integrations

SYSTEM ARCHITECTURE: Claude Code Multi-Agent DevOps Pipeline

                    Developer opens PR on GitHub
                                  │
                                  ▼
    ┌───────────────────────────────────────────────────────────┐
    │ CLAUDE CODE COORDINATOR (Lead Agent)                      │
    │ Reads PR diff + CLAUDE.md project rules + Jira context    │
    │ Spawns 3 parallel subagents with scoped permissions       │
    └──────────┬──────────────────┬──────────────────┬──────────┘
               │                  │                  │
       ┌───────┴──────┐   ┌───────┴──────┐   ┌───────┴──────┐
       │ SUBAGENT 1   │   │ SUBAGENT 2   │   │ SUBAGENT 3   │
       │ Code Review  │   │ Security     │   │ Staging      │
       │              │   │ Scan         │   │ Validator    │
       │ Opus 4.6     │   │ Sonnet 4.6   │   │ Haiku 4.5    │
       │ MCP: GitHub  │   │ MCP: Snyk    │   │ MCP:         │
       │ MCP: Jira    │   │ MCP: GitHub  │   │ Playwright,  │
       │ Read + Write │   │ Read only    │   │ Datadog      │
       └───────┬──────┘   └───────┬──────┘   └───────┬──────┘
               │                  │                  │
               └──────────────────┼──────────────────┘
                                  │ All pass?
                                  ▼
    ┌───────────────────────────────────────────────────────────┐
    │ SUBAGENT 4: RELEASE AGENT                                 │
    │ Canary deployment (5% → 25% → 100% traffic)               │
    │ MCP: Kubernetes + Datadog + PagerDuty + Slack             │
    │ Auto-rollback if error rate > baseline + 2 std devs       │
    └───────────────────────────────────────────────────────────┘

MODEL ROUTING: Opus 4.6 for deep code reasoning, Sonnet 4.6 for security triage, Haiku 4.5 for fast validation

CLAUDE.md: Project-specific rules, patterns, banned patterns

MCP Connectors: GitHub, Jira, Snyk, Playwright, Datadog, Kubernetes, PagerDuty, Slack (8 total)

Subagent 1: Code Review AI Agent (Claude Opus 4.6)

This is the deep thinker. It uses Claude Opus 4.6, the most capable of the three models in the pipeline, because code review requires understanding the full codebase context, architectural patterns and business logic. The agent reads the PR diff, loads relevant context from the CLAUDE.md project rules (the team’s architectural decisions, coding patterns and banned anti-patterns) and pulls the linked Jira ticket via MCP for requirement context.

It reviews for: logic errors, architectural violations, performance regressions, test coverage gaps and consistency with the codebase’s established patterns. It posts inline comments on the PR exactly like a human reviewer would. For high-confidence issues (clear bugs, pattern violations), it requests changes. For ambiguous items, it flags for human attention.

Scoped permissions: GitHub MCP (read + write comments + request changes), Jira MCP (read tickets + update status). Cannot merge or delete.

Subagent 2: Security Scan AI Agent (Claude Sonnet 4.6)

The Security AI Agent runs SAST and dependency scanning via the Snyk MCP connector, then applies AI reasoning to triage the results. This is the agent that eliminated 60% of the manual triage burden. Most Snyk findings are either false positives (the vulnerable code path isn’t reachable in this specific application) or accepted risks (the team knowingly uses a library with a low-severity CVE because the fix hasn’t been released yet). The agent reads the Snyk results, cross-references against the team’s .snyk exception file, checks whether the vulnerable code path is actually reachable in the changed code and triages findings into: “block PR”, “notify team (non-blocking)”, or “auto-suppress (known exception).”

Scoped permissions: Snyk MCP (read only), GitHub MCP (read access, plus adding labels). Cannot modify code.
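The three-way triage described above can be sketched as a small decision function. This is a minimal illustration, not the client's implementation: the `Finding` fields, the `known_exceptions` set (standing in for the team's exception file) and the rule that only reachable high or critical findings block a PR are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Triage(Enum):
    BLOCK = "block PR"
    NOTIFY = "notify team (non-blocking)"
    SUPPRESS = "auto-suppress (known exception)"


@dataclass(frozen=True)
class Finding:
    issue_id: str    # hypothetical identifier for a scanner finding
    severity: str    # "low", "medium", "high" or "critical"
    reachable: bool  # is the vulnerable path reachable from the changed code?


def triage(finding: Finding, known_exceptions: set[str]) -> Triage:
    """Classify one finding into the three outcomes named in the text."""
    if finding.issue_id in known_exceptions:
        return Triage.SUPPRESS  # already accepted by the team
    if not finding.reachable:
        return Triage.NOTIFY    # likely false positive: surface it, do not block
    if finding.severity in {"high", "critical"}:
        return Triage.BLOCK     # assumed cutoff for blocking
    return Triage.NOTIFY
```

Checking the exception list first means an accepted risk never blocks a PR, whatever its severity.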

Subagent 3: Staging Validator AI Agent (Claude Haiku 4.5)

The Staging Validator deploys the PR to an ephemeral staging environment (not the shared staging that caused all the conflicts), runs the E2E test suite via Playwright MCP and validates key metrics against Datadog baselines. If page load time increases by more than 200ms, or if any E2E test fails, the PR is flagged. Haiku 4.5 is used here because the task is fast validation rather than deep reasoning, and at $0.25/1M tokens, running it on every PR is cost-effective.

Scoped permissions: Playwright MCP (execute tests), Datadog MCP (read metrics), Kubernetes MCP (deploy ephemeral env, destroy on completion). Cannot touch production.
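The flagging rule for staging (any failed E2E test, or a page load regression above 200ms) could look roughly like the sketch below. The `StagingResult` structure and its field names are hypothetical. Only the 200ms threshold comes from the text.

```python
from dataclasses import dataclass

MAX_PAGE_LOAD_REGRESSION_MS = 200  # threshold stated in the text


@dataclass(frozen=True)
class StagingResult:
    failed_tests: int             # number of failed E2E tests
    page_load_ms: float           # measured in the ephemeral environment
    baseline_page_load_ms: float  # baseline value read from monitoring


def staging_flags(result: StagingResult) -> list[str]:
    """Return the reasons a PR should be flagged; an empty list means it passes."""
    reasons = []
    if result.failed_tests > 0:
        reasons.append(f"{result.failed_tests} E2E test(s) failed")
    regression = result.page_load_ms - result.baseline_page_load_ms
    if regression > MAX_PAGE_LOAD_REGRESSION_MS:
        reasons.append(f"page load regressed by {regression:.0f} ms")
    return reasons
```

Returning reasons rather than a bare boolean lets the pipeline quote them directly in a PR comment.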

Subagent 4: Release AI Agent

When all three subagents pass, the Release AI Agent takes over. It executes a canary deployment: routes 5% of production traffic to the new version, monitors error rates and latency via Datadog for 15 minutes, then progressively rolls to 25%, then 100%. If error rate exceeds the baseline by more than 2 standard deviations at any stage, the agent auto-rolls back and pages the on-call engineer via PagerDuty.
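The rollback rule amounts to comparing the canary's error rate against a threshold derived from baseline data. A minimal sketch follows. It assumes the baseline is the mean of a list of recent error-rate samples and that the sample standard deviation is used; the text does not say how the baseline is actually computed.

```python
from statistics import mean, stdev


def rollback_threshold(baseline_error_rates: list[float], k: float = 2.0) -> float:
    """Error rate above which the canary is rolled back: mean + k * sample std dev."""
    return mean(baseline_error_rates) + k * stdev(baseline_error_rates)


def should_roll_back(canary_error_rate: float, baseline_error_rates: list[float]) -> bool:
    """True when the canary error rate exceeds the baseline by more than 2 std devs."""
    return canary_error_rate > rollback_threshold(baseline_error_rates)
```

For example, with baseline samples of 0.9% to 1.3% centred on 1.1%, a canary error rate of 2% would trigger a rollback while 1.4% would not.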

Scoped permissions: Kubernetes MCP (deploy/rollback), Datadog MCP (read), PagerDuty MCP (create incident), Slack MCP (post to #releases). Full production access with auto-rollback as the safety net.
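The per-agent scopes listed for the four subagents can be pictured as a deny-by-default lookup table. The sketch below is only an illustration of that idea: the dictionary layout and the action names are invented for this example and are not Claude Code's or MCP's configuration format.

```python
# Tool scopes per agent as listed in the text; action names are illustrative.
AGENT_SCOPES: dict[str, dict[str, set[str]]] = {
    "code_review": {
        "github": {"read", "comment", "request_changes"},
        "jira": {"read", "update_status"},
    },
    "security_scan": {
        "snyk": {"read"},
        "github": {"read", "add_label"},
    },
    "staging_validator": {
        "playwright": {"run_tests"},
        "datadog": {"read"},
        "kubernetes": {"deploy_ephemeral", "destroy_ephemeral"},
    },
    "release": {
        "kubernetes": {"deploy", "rollback"},
        "datadog": {"read"},
        "pagerduty": {"create_incident"},
        "slack": {"post"},
    },
}


def is_allowed(agent: str, connector: str, action: str) -> bool:
    """Deny by default: an action is permitted only if it is explicitly listed."""
    return action in AGENT_SCOPES.get(agent, {}).get(connector, set())
```

Under this table, `is_allowed("code_review", "github", "merge")` is false, which matches the statement that the review agent cannot merge.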

Workflow: From PR to Production

Step 1: PR Created (T+0)

Developer pushes code and opens a PR on GitHub. The GitHub webhook triggers the Claude Code Coordinator.

Step 2: Context Loading (T+30s)

The Coordinator reads the PR diff, loads the CLAUDE.md project rules, fetches the linked Jira ticket and determines the PR’s risk profile (does it touch auth? payments? database schema?). High-risk PRs get an Opus 4.6 review; low-risk PRs get Sonnet 4.6.
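One simple way to picture the risk profiling step is a path-based check. This is an assumption for illustration only: the text does not say how the Coordinator decides risk, and the marker strings below are hypothetical.

```python
# Hypothetical path fragments standing in for auth, payments and schema changes.
HIGH_RISK_MARKERS = ("auth", "payments", "migrations")


def is_high_risk(changed_paths: list[str]) -> bool:
    """A PR is treated as high risk if any changed path contains a sensitive marker."""
    return any(
        marker in path.lower()
        for path in changed_paths
        for marker in HIGH_RISK_MARKERS
    )


def review_model(changed_paths: list[str]) -> str:
    """Pick the review tier; the strings are labels, not API model identifiers."""
    return "Opus 4.6" if is_high_risk(changed_paths) else "Sonnet 4.6"
```

Substring matching is deliberately crude (it would also match a path containing "author"), so a real classifier would more plausibly look at diff content or code ownership.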

Step 3: Parallel Review (T+2–12 min)

The three review subagents run in parallel. The Code Review Agent posts inline comments, the Security Agent triages Snyk findings, and the Staging Validator deploys to an ephemeral environment and runs E2E tests. Total parallel execution: 2–12 minutes depending on test suite size.

Step 4: Consolidation (T+15 min)

The Coordinator collects all subagent results. If all pass, it auto-approves the PR and merges. If any agent flagged issues, the Coordinator summarizes findings in a single consolidated PR comment and requests changes.
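The consolidation step can be sketched as a function that folds the subagent results into one decision and one comment. The `AgentResult` shape and the decision labels are assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class AgentResult:
    agent: str
    passed: bool
    findings: list[str] = field(default_factory=list)


def consolidate(results: list[AgentResult]) -> tuple[str, str]:
    """Return (decision, comment) for the PR based on all subagent results."""
    if not results:
        raise ValueError("no subagent results to consolidate")
    failed = [r for r in results if not r.passed]
    if not failed:
        return "approve_and_merge", "All checks passed."
    lines = ["Changes requested:"]
    for result in failed:
        for finding in result.findings:
            lines.append(f"- [{result.agent}] {finding}")
    return "request_changes", "\n".join(lines)
```

Raising on an empty result list avoids treating a run where no agent reported back as an approval.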

Step 5: Canary Deploy (T+20 min)

The Release Agent deploys to production via canary. 5% traffic for 15 minutes, monitored against Datadog baselines. If clean: 25% for 10 minutes, then 100%.
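The staged rollout can be expressed as a loop over traffic stages that stops at the first unhealthy one. In this sketch, `is_healthy` is a hypothetical callback that would shift traffic, watch metrics for the given window and report the outcome. The text states no observation window for the 100% stage, so 0 is used as a placeholder.

```python
from typing import Callable

# (traffic percent, minutes to observe); the last window is a placeholder.
CANARY_STAGES = [(5, 15), (25, 10), (100, 0)]


def run_canary(is_healthy: Callable[[int, int], bool]) -> tuple[str, int]:
    """Walk the stages; return ("rolled_back", percent) at the first unhealthy stage."""
    for percent, minutes in CANARY_STAGES:
        if not is_healthy(percent, minutes):
            return "rolled_back", percent
    return "promoted", 100
```

A callback that reports trouble once traffic reaches 25%, for instance `lambda percent, minutes: percent < 25`, yields `("rolled_back", 25)`.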

Step 6: Production (T+45 min – 6.4 hours)

Simple PRs (no issues flagged) go from creation to production in under 45 minutes. PRs that need developer fixes average 6.4 hours end-to-end, down from 4.2 days. The Release Agent posts to #releases on Slack with a deployment summary.

Implementation Decisions

| Decision Area | What We Chose | Why / Trade-Offs |
| --- | --- | --- |
| Model routing | Opus 4.6 for review, Sonnet 4.6 for security, Haiku 4.5 for validation | Model-mixing strategy per Anthropic’s enterprise guidance. Opus ($15/1M) for deep reasoning (code review). Sonnet ($3/1M) for security triage. Haiku ($0.25/1M) for fast, high-volume validation. Monthly model cost: ~$12,000 for 120 PRs/day instead of $45,000 if all-Opus. |
| Agent framework | Claude Code subagents with CLAUDE.md project memory | Claude Code’s subagent architecture provides scoped tool permissions per agent (critical for security: review agent can’t access production). CLAUDE.md stores architectural rules that persist across all agent sessions. |
| MCP integrations | 8 MCP connectors (GitHub, Jira, Snyk, Playwright, Datadog, Kubernetes, PagerDuty, Slack) | MCP provides standardized tool access with audit logging. Critical: every agent action is traceable in the MCP audit log for SOC 2 compliance. |
| Staging strategy | Ephemeral environments per PR over shared staging | Shared staging caused 40% of validation delays (environment conflicts). Ephemeral envs spin up in 90 seconds on Kubernetes, run tests and destroy. Cost: ~$0.12 per PR for compute. Time saved: 14+ hours of queue wait eliminated. |
| Rollback trigger | Error rate > baseline + 2 std deviations | 1 std dev caused too many false rollbacks. 3 std dev caught issues too late. 2 std dev (validated on 3 months of production data) catches real regressions within 3–5 minutes while maintaining 99.1% precision. |
| Human override | Any engineer can override agent decisions via /override command | Non-negotiable. The agents augment human judgment, not replace it. Override events are logged and reviewed weekly for agent improvement. |

Tech Stack

Claude Code (Opus 4.6 / Sonnet 4.6 / Haiku 4.5) · CLAUDE.md (project memory) · MCP connectors (8) · GitHub Actions · Kubernetes (EKS) · Playwright · Datadog · Snyk · PagerDuty · Slack

Results

| Metric | Result |
| --- | --- |
| Median PR-to-production time | 4.2 days → 6.4 hours |
| Monthly engineering hours on reviews/deploys | 2,100 → 380 |
| Production incidents | 42% reduction |
| Developer shipping velocity | 23% increase |

Median PR-to-production time dropped from 4.2 days to 6.4 hours. For zero-issue PRs (roughly 45% of all PRs), the pipeline runs from creation to production in under 45 minutes. The engineering team reclaimed 1,720 hours per month, equivalent to roughly 10 full-time engineers redirected from review overhead to product development.
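The reclaimed-hours figure follows from simple arithmetic, worked through below. The 160 hours per engineer per month is an assumption (40 hours a week over four weeks); the text does not state the conversion it used.

```python
HOURS_BEFORE = 2100        # monthly hours on manual gates, from the text
HOURS_AFTER = 380          # monthly hours after the pipeline, from the text
HOURS_PER_FTE_MONTH = 160  # assumption: 40 hours/week over 4 weeks

reclaimed = HOURS_BEFORE - HOURS_AFTER                # hours freed per month
fte_equivalent = reclaimed / HOURS_PER_FTE_MONTH      # full-time engineers
reduction_pct = 100 * reclaimed / HOURS_BEFORE        # share of effort removed

print(f"{reclaimed} hours reclaimed, about {fte_equivalent:.1f} FTEs, "
      f"{reduction_pct:.0f}% reduction")
```

Under that assumption the 1,720 reclaimed hours come to between 10 and 11 full-time engineers, in line with the "roughly 10" quoted above.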

Production incidents dropped by 42%. The combination of AI code review (catching logic errors humans missed at volume), automated security triage (no more “we’ll fix that Snyk finding later”) and canary deployments with auto-rollback means fewer bugs reach production and the ones that do get caught faster.

The release engineering team went from 3 full-time roles to 1 person who monitors the system and handles the override/escalation cases. The other 2 moved into platform engineering, building the infrastructure that makes the agent pipeline better.

“We went from release engineers being the bottleneck to releases being the fastest part of our process. Engineers now complain that the agent reviews too quickly, they don’t have time to grab coffee.” – VP of Engineering, SaaS Company, San Francisco

Engagement Timeline: 12 Weeks

Weeks 1–2: discovery, codebase analysis, CLAUDE.md configuration, MCP connector setup (GitHub, Jira).

Weeks 3–5: Code Review AI Agent (Opus 4.6 with project-specific tuning via CLAUDE.md rules; this is prompt-level configuration, not model fine-tuning).

Weeks 4–7: Security Agent (Snyk MCP, triage logic, exception handling).

Weeks 6–9: Staging Validator (Playwright MCP, ephemeral environment automation, Datadog baseline configuration).

Weeks 8–11: Release AI Agent (canary deployment logic, rollback thresholds, PagerDuty/Slack integration).

Week 12: full pipeline run in parallel with the existing manual process, then cut-over.
