| Industry | Region | Client Type |
| --- | --- | --- |
| E-Commerce / Direct-to-Consumer | Bengaluru, India | High-Growth D2C Startup (₹250Cr ARR) |

About the Client

The client is one of India’s fastest-growing D2C brands, operating in the personal care and wellness category. They sell across their own website, a mobile app and major marketplaces, with 8 million monthly active users and annual recurring revenue of approximately ₹250 crore. Their customer base spans metros and Tier 2–3 cities, communicating in Hindi, English, Tamil and Bengali, often mixing languages within the same message.

The company had recently raised a Series D round and was under pressure to improve unit economics. Customer support was one of the largest line items: a 120-person BPO team costing ₹4.8 crore per year.

The Problem

68% of the company’s support tickets were repetitive. Order tracking, return and refund requests, size guide questions and COD-to-prepaid conversions all followed predictable patterns and had clear resolutions. But the company’s existing chatbot was failing badly at handling them.

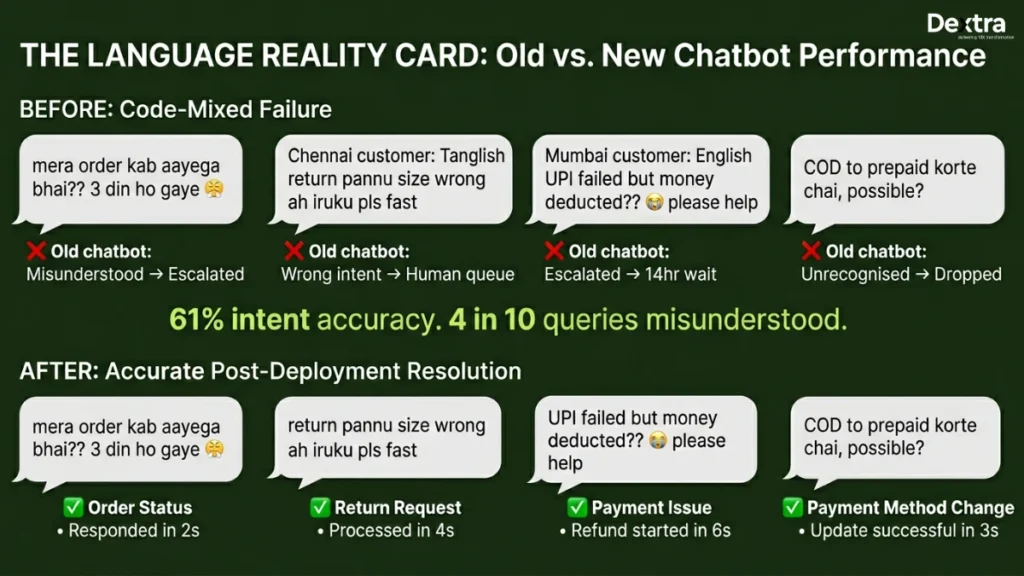

The core issue was language. India’s customer communication doesn’t fit neatly into single-language categories. A customer in Delhi might type “mera order kab aayega, bhai?” (Hinglish: Hindi written in Latin script and mixed with English). A customer in Chennai might write “return pannu, size wrong ah iruku” (Tanglish: Tamil mixed with English). Off-the-shelf NLP models, trained primarily on clean English or clean Hindi, scored around 61% intent accuracy on this kind of code-mixed input. That meant roughly four out of ten queries were misunderstood, escalated to a human, or answered incorrectly.

Beyond language, there were India-specific payment edge cases the chatbot couldn’t handle: UPI payment failures (common during high-traffic sales), COD verification calls, wallet refund routing (the customer paid via Paytm but wants the refund in their bank account) and partial refunds on bundled orders. Each of these required connecting to the payment gateway and order management system to actually resolve the issue, not just acknowledge it.

Customer satisfaction had dropped to 3.1 out of 5. During sale events, first-response time averaged 14 hours. Customers were publicly complaining on social media, which was starting to affect acquisition costs.

What Dextra Labs Built

At Dextra Labs, we built a multi-agent support system with three specialized agents, deployed primarily on the WhatsApp Business API, the channel that accounted for 72% of the client’s customer interactions.

Agent 1: Triage AI Agent

The Triage AI Agent classifies incoming queries into 32 intent categories. The key innovation here was the language model. We fine-tuned an IndicBERT-based classifier on 45,000 labeled code-mixed queries from the client’s historical support data. This isn’t the kind of data you can download from a benchmark. It’s real messages from real customers in Bengaluru, Patna, Chennai and Kolkata, full of abbreviations, transliterations, emojis and voice-note transcriptions. The fine-tuned model achieves 93.2% intent accuracy on code-mixed input, compared to 61% for off-the-shelf multilingual models.
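To make the classification step concrete, here is a minimal sketch of how a classifier head's raw scores could be turned into a single intent and a confidence value. It is not our production code: the intent names, the three-label list and the `top_intent` helper are illustrative assumptions, and a real deployment would feed in the 32 scores produced by the fine-tuned model.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_intent(logits: Sequence[float], labels: Sequence[str]) -> Tuple[str, float]:
    """Return the most likely intent label and its probability."""
    if len(logits) != len(labels):
        raise ValueError("one logit is required per intent label")
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best], probs[best]


# Hypothetical labels; the real taxonomy has 32 intents.
LABELS = ["order_tracking", "refund_request", "size_guide"]

if __name__ == "__main__":
    intent, confidence = top_intent([2.0, 0.5, -1.0], LABELS)
    print(intent, round(confidence, 3))
```

The confidence value is useful downstream: a low score is one possible signal for handing the conversation to a human rather than guessing.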

Agent 2: Resolution AI Agent

This is where the system goes beyond answering questions to actually solving problems. The Resolution AI Agent has direct API integrations with the client’s order management system (OMS), Shiprocket (logistics) and Razorpay (payments). When a customer asks for a refund, the agent doesn’t say “your refund is being processed” and leave the customer hanging. It initiates the refund through the Razorpay API, confirms the amount and destination and sends the customer a confirmation with an expected timeline. When a customer wants to reroute a shipment, the agent calls the Shiprocket API and makes it happen.

This required careful state management. A refund request might involve checking the order status, verifying the return window, initiating the refund and confirming success: four API calls in sequence, any of which could fail. We built retry logic and graceful degradation for each integration: if Razorpay is slow, the agent tells the customer it’s processing and follows up when the confirmation arrives, rather than timing out.
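The four-step refund flow with retries and graceful degradation can be sketched roughly as follows. This is an illustration under stated assumptions, not the production implementation: `StubApi`, its method names, `TransientError` and the retry parameters are all hypothetical stand-ins for the real OMS and payment clients.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying (timeouts, 5xx)."""


def with_retry(step: Callable[[], T], attempts: int = 3, base_delay: float = 0.5,
               sleep: Callable[[float], None] = time.sleep) -> T:
    """Run one step, retrying transient failures with exponential backoff."""
    for attempt in range(attempts):
        try:
            return step()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: let the caller decide what to do
            sleep(base_delay * 2 ** attempt)
    raise AssertionError("unreachable")


class StubApi:
    """Hypothetical stand-in for the OMS and payment gateway clients."""

    def __init__(self, confirm_failures: int = 0):
        self.confirm_failures = confirm_failures

    def get_order(self, order_id):
        return {"id": order_id, "amount": 499}

    def within_return_window(self, order):
        return True

    def initiate_refund(self, order):
        return f"rfnd_{order['id']}"

    def confirm_refund(self, refund_id):
        if self.confirm_failures > 0:
            self.confirm_failures -= 1
            raise TransientError("gateway slow")
        return True


def process_refund(order_id: str, api, sleep=time.sleep) -> str:
    """Check order, verify window, initiate refund, confirm success."""
    order = with_retry(lambda: api.get_order(order_id), sleep=sleep)
    if not with_retry(lambda: api.within_return_window(order), sleep=sleep):
        return "rejected: return window closed"
    refund_id = with_retry(lambda: api.initiate_refund(order), sleep=sleep)
    try:
        with_retry(lambda: api.confirm_refund(refund_id), sleep=sleep)
    except TransientError:
        # Graceful degradation: tell the customer it is processing, follow up later.
        return "pending: refund initiated, confirmation to follow"
    return "refunded"
```

In this sketch only the confirmation step degrades to a "pending" reply, because the refund has already been initiated by then. Failures in the earlier steps propagate to the caller, which could hand the conversation to a human.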

Agent 3: Escalation AI Agent

Not everything should be automated. The Escalation AI Agent monitors conversation sentiment in real time. It detects frustration patterns: repeated queries about the same issue, negative sentiment shift, profanity in any of the four supported languages, or requests that fall outside the Resolution AI Agent’s scope. When triggered, it routes the conversation to a human agent within 30 seconds, along with the full conversation history, extracted customer details and a suggested resolution path. The human agent doesn’t start from zero.
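The four escalation triggers can be expressed as a small rule check. The sketch below is a simplified illustration: the `Turn` structure, the thresholds, the supported-intent set and the one-word profanity list are all assumptions made for the example, and sentiment scores are assumed to come from a separate model on a scale of -1 to 1.

```python
from dataclasses import dataclass
from typing import List, Optional

# Placeholder values; a real list would cover all four supported languages.
PROFANITY = {"damn"}
# Hypothetical subset of intents the Resolution Agent can handle.
SUPPORTED_INTENTS = {"order_tracking", "refund_request"}


@dataclass
class Turn:
    intent: str       # label from the triage step
    sentiment: float  # assumed score in [-1, 1], negative = unhappy
    text: str


def should_escalate(turns: List[Turn], repeat_limit: int = 3,
                    sentiment_drop: float = 0.5) -> Optional[str]:
    """Return the reason for escalation, or None to keep the agent in charge."""
    if not turns:
        return None
    latest = turns[-1]
    if latest.intent not in SUPPORTED_INTENTS:
        return "out_of_scope"
    if any(word in PROFANITY for word in latest.text.lower().split()):
        return "profanity"
    repeats = sum(1 for t in turns if t.intent == latest.intent)
    if repeats >= repeat_limit:
        return "repeated_query"
    if turns[0].sentiment - latest.sentiment >= sentiment_drop:
        return "sentiment_drop"
    return None
```

Returning the reason rather than a bare boolean lets the handoff include a short explanation alongside the conversation history.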

Architecture and Technical Decisions

Scale was the defining constraint. The system needed to handle 1,500 concurrent conversations during flash sales without degradation. We built the message ingestion layer on Kafka, with auto-scaling consumer pods on Amazon EKS in the Mumbai region. Each conversation maintains state in Redis with a 24-hour TTL.
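To illustrate what a 24-hour TTL means for conversation state, here is an in-memory stand-in that mimics the expiry behaviour. It is not a Redis client: the `ConversationStore` class and its methods are hypothetical, and the sketch assumes the TTL is refreshed on every write, which matches how a Redis `SET` with an expiry behaves.

```python
import time
from typing import Callable, Dict, Optional, Tuple

DAY_SECONDS = 24 * 60 * 60  # the 24-hour TTL


class ConversationStore:
    """In-memory illustration of per-conversation state with a TTL."""

    def __init__(self, ttl: float = DAY_SECONDS,
                 clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl
        self._clock = clock  # injectable so expiry can be tested without waiting
        self._data: Dict[str, Tuple[float, dict]] = {}

    def save(self, conversation_id: str, state: dict) -> None:
        """Store state and (re)start the expiry countdown."""
        self._data[conversation_id] = (self._clock() + self._ttl, state)

    def load(self, conversation_id: str) -> Optional[dict]:
        """Return the state, or None if it is missing or has expired."""
        entry = self._data.get(conversation_id)
        if entry is None:
            return None
        expires_at, state = entry
        if self._clock() >= expires_at:
            del self._data[conversation_id]
            return None
        return state
```

With expiry handled by the store, a conversation that goes quiet for a day simply starts fresh, and no separate cleanup job is needed.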

We chose GPT-4o Mini as the primary response generation model for its speed and cost efficiency at the volume this client operates at. The IndicBERT classifier handles intent detection, so the LLM only needs to generate contextually appropriate responses rather than understanding the query from scratch. This split architecture keeps per-query costs under ₹0.30, which was essential for the unit economics to work at 1.2 million queries per month.

Tech Stack

GPT-4o Mini · IndicBERT (fine-tuned) · WhatsApp Business API · Redis · AWS Mumbai (ap-south-1) · Kafka · MongoDB · Amazon EKS · Shiprocket API · Razorpay API

Results

| Queries resolved without human touch | Avg. first response time | BPO cost reduction | CSAT score improvement (/5) |
| --- | --- | --- | --- |
| 71% | 14h → 90s | ₹3.1Cr/yr | 3.1 → 4.4 |

Within 90 days, 71% of all support queries were being fully resolved by the agent system without any human involvement. First-response time dropped from 14 hours to 90 seconds. During the Republic Day sale, the system handled 89,000 queries in a single day without any outage or degradation in response quality.

The BPO team went from 120 people to 35. The retained team handles genuinely complex cases: product defect complaints, escalated disputes and high-value customer retention. Annual BPO costs dropped from ₹4.8 crore to approximately ₹1.7 crore, a saving of ₹3.1 crore per year. Customer satisfaction improved from 3.1 to 4.4 out of 5 and negative social media mentions about support responsiveness dropped by over 60%.

The metric the CTO cares about most: per-query cost. With the BPO team, the average cost per resolved query was approximately ₹40. With the agent system, it’s ₹4.70 for agent-resolved queries and ₹52 for human-escalated queries. Blended cost per query is now ₹18.40, less than half of what it was.
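The blended figure can be checked with a weighted average. The sketch below assumes the 71% automation rate applies to query volume and that the remaining 29% are human-escalated; the function name is ours, introduced only for illustration.

```python
def blended_cost(automated_share: float, automated_cost: float,
                 escalated_cost: float) -> float:
    """Weighted average cost per query across automated and escalated paths."""
    return automated_share * automated_cost + (1 - automated_share) * escalated_cost


# Figures from the results above: 71% automated at ₹4.70, the rest at ₹52.
blended = blended_cost(0.71, 4.70, 52.0)
print(round(blended, 2))  # 18.42
```

The result lands within a couple of paise of the ₹18.40 quoted above, with the small gap presumably down to rounding in the inputs, and is roughly 46% of the earlier ₹40 per query.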

“During our Republic Day sale, the agent handled 89,000 queries in a single day without a single outage. Our BPO team, now 35 people instead of 120, only sees the cases that genuinely need human judgment.” – CTO & Co-Founder, D2C E-Commerce Brand, Bengaluru

Engagement Timeline

10 weeks total. We shipped a working MVP in 5 weeks covering the top 12 intents (representing roughly 55% of ticket volume), then expanded to all 32 intents and full API integrations over the next 5 weeks.

Weeks 1–2: discovery, intent taxonomy design and data labeling pipeline setup.

Weeks 3–5: IndicBERT fine-tuning, Triage Agent and basic Resolution Agent with Razorpay integration.

Weeks 6–8: full Resolution Agent with Shiprocket and OMS integrations, Escalation Agent development.

Weeks 9–10: load testing, Republic Day sale simulation (synthetic traffic at 2x expected volume) and production rollout.

Running a consumer brand with support costs that scale linearly with growth?

Let’s build an agent system that changes that equation. Connect with Dextra Labs to get AI agents for your ecommerce brand.

👉 Book Free AI Agent Development Services