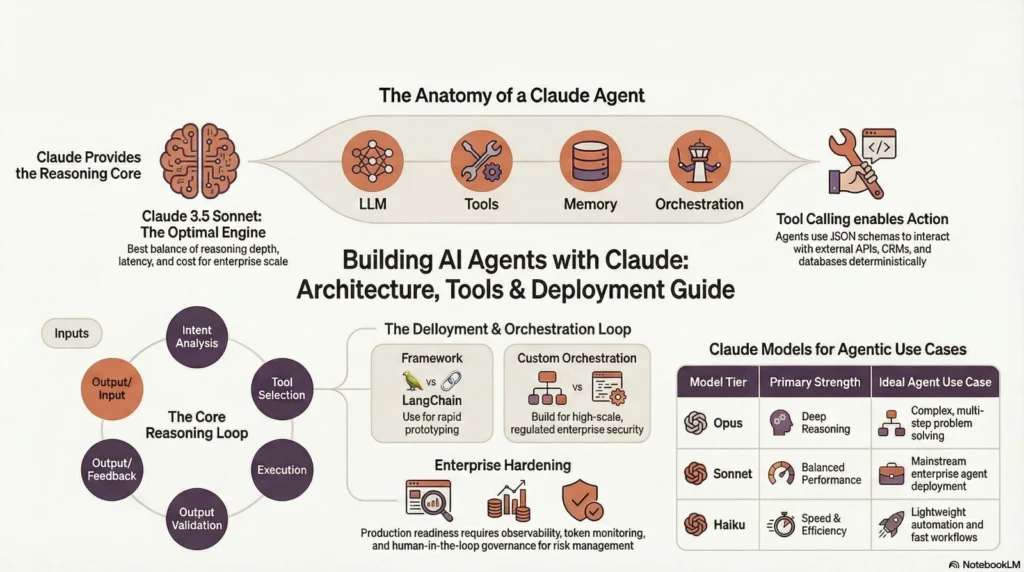

Claude is a large language model developed by Anthropic. While Claude itself is not an autonomous AI agent, developers can use the Claude API to build Claude AI agents that perform tool calling, multi-step reasoning, and structured autonomous workflows for enterprise-grade applications.

Claude on its own is a reasoning engine; it predicts and generates text based on patterns and instructions. It does not inherently take actions, maintain persistent memory, or independently operate external systems. However, when combined with orchestration logic, tool definitions, and memory layers, Claude becomes the cognitive core of a powerful agentic AI system.

So to answer directly:

- Is Claude an AI agent? No. Claude is a large language model (LLM), not an autonomous system.

- Is Claude agentic AI? Not by itself. It becomes agentic when embedded inside a structured orchestration architecture that enables decision loops, tool execution, and memory persistence.

This distinction is critical. Many organizations confuse “advanced LLM” with “AI agent.” In reality, an AI agent is an engineered system built around the model, not the model itself.

This guide explains:

- How to build Claude AI agents

- How to design scalable agent architectures

- How to implement tool calling correctly

- How to deploy production-ready agentic AI systems

- What infrastructure enterprise environments require

What Is a Claude AI Agent?

A Claude AI agent is an application that combines Claude’s reasoning capabilities with tools, memory systems, and orchestration logic to perform semi-autonomous or autonomous tasks. These tasks can include retrieving data, analyzing information, making structured decisions, generating outputs, and interacting with external systems via APIs.

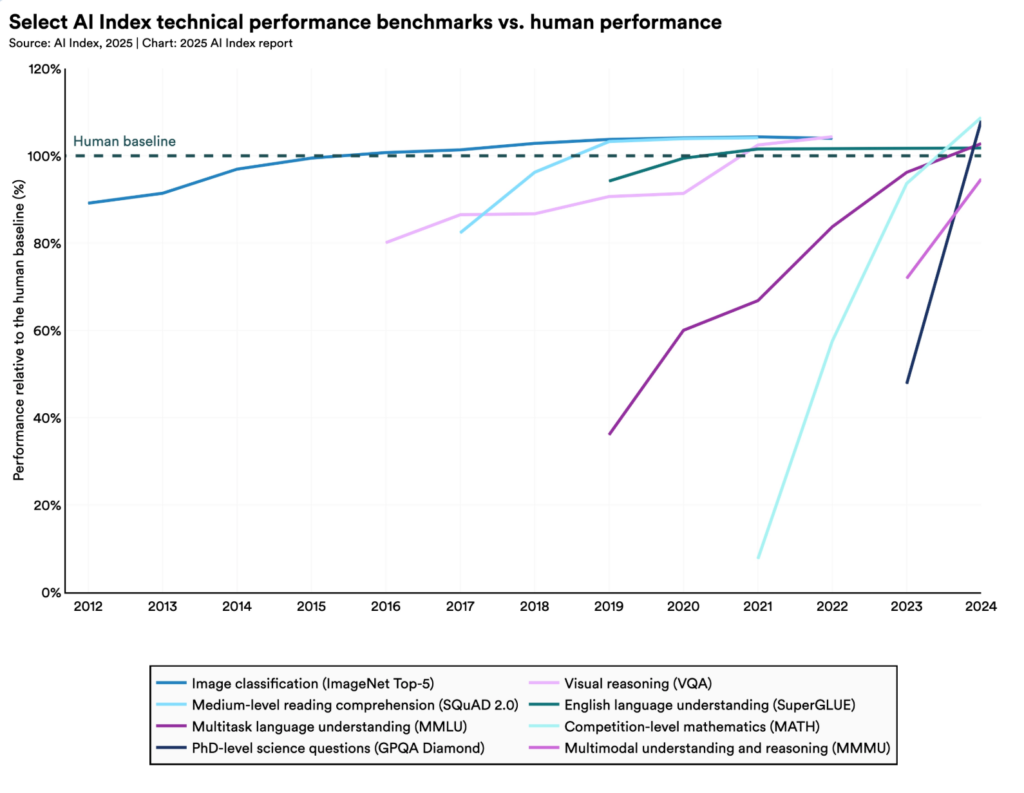

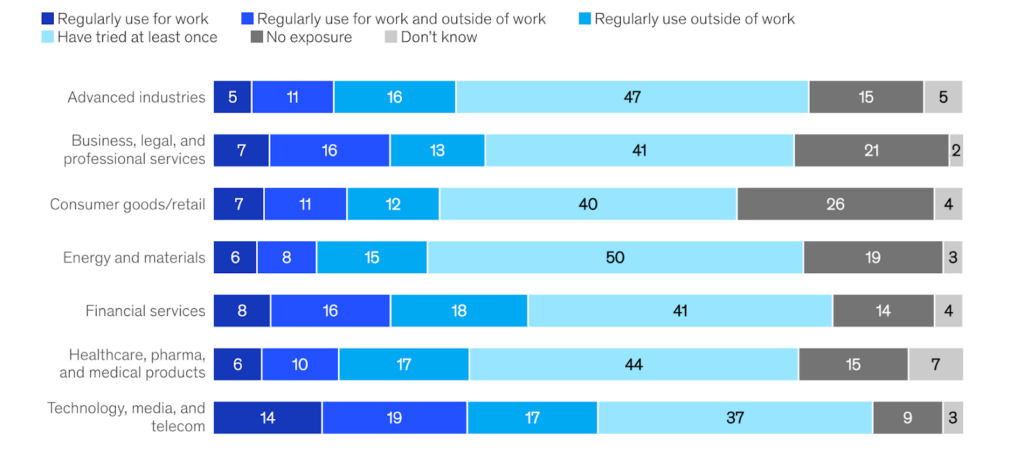

Enterprise momentum behind AI agents is accelerating. The Stanford AI Index Report 2024 notes a sharp increase in generative AI deployment across enterprise workflows, with widespread investment in production-grade AI systems rather than experimental pilots.

Unlike a simple chatbot that only responds to prompts, an agent:

- Breaks down complex tasks

- Selects appropriate tools

- Executes actions

- Evaluates outputs

- Updates memory

- Produces structured final responses

In other words, the agent turns Claude from a text generator into a workflow engine.

Clarification

- Claude = LLM (Language Model)

- Claude Agent = LLM + Tools + Memory + Orchestration Layer

Without tools and orchestration, Claude remains reactive. With them, it becomes operational.

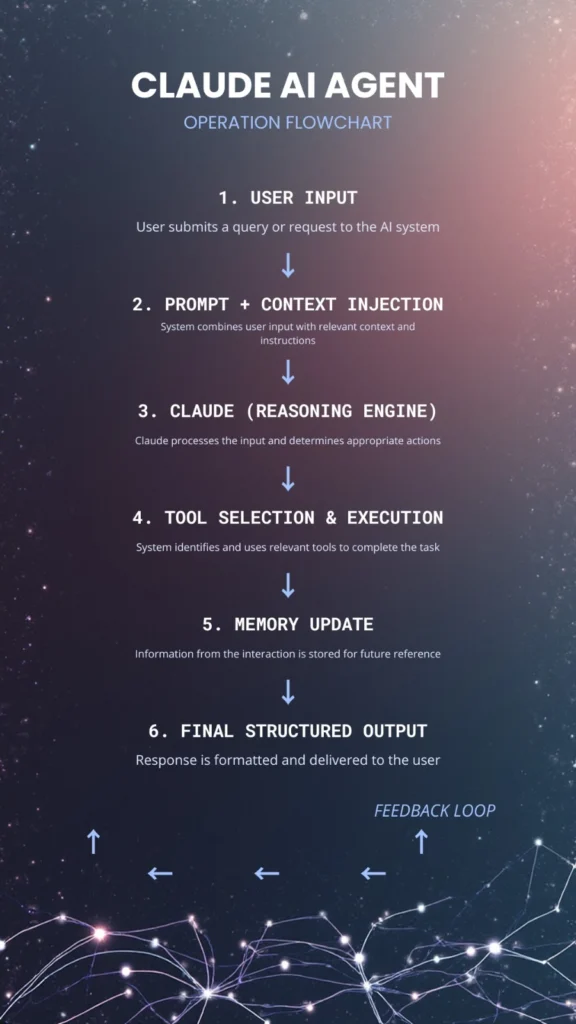

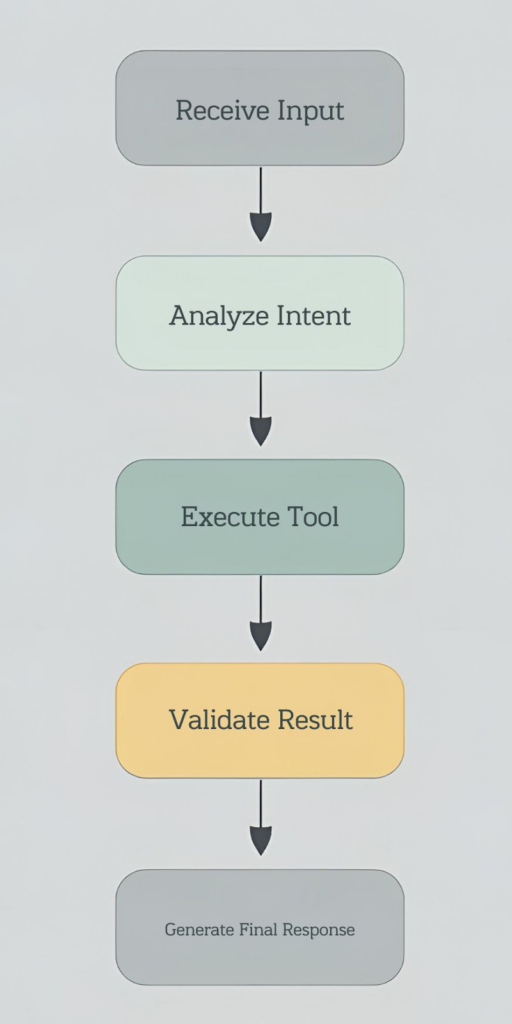

Textual Architecture Diagram

Below is a simplified flow of how a Claude-powered AI agent operates:

Each arrow represents a control loop. The system may iterate multiple times before producing a final result.

A Claude agent framework wraps the model inside a decision loop that enables:

- Intent detection

- Tool invocation

- Multi-step reasoning

- Output validation

- Memory updates

This continuous loop is what creates agentic workflows.

Is Claude Agentic AI?

Claude supports agentic AI workflows when combined with tool calling and orchestration layers. However, Claude itself is not an agent. It becomes agentic when embedded within a structured decision-loop architecture that enables action-taking and state tracking.

Claude supports the foundational capabilities needed for agents:

- Tool use

- Long-context reasoning

- Structured outputs

- Multi-step plan execution

- Instruction-following reliability

However, autonomy requires additional layers:

- Persistent memory

- Execution systems

- Monitoring

- Governance controls

When properly engineered, these components transform Claude into the reasoning core of autonomous AI systems. But without those layers, it remains a powerful yet passive language model.

Claude 3.5 Sonnet for Agentic AI Development

Among the available Claude model variants, Claude 3.5 Sonnet is widely considered the most balanced option for enterprise Claude AI agent deployment. When building production-grade agentic systems, model selection is not just about intelligence; it’s about operational trade-offs.

Organizations developing Claude AI agents must carefully balance:

- Reasoning depth: Can the model break down complex, multi-step tasks?

- Latency: How quickly does it respond under real-world traffic?

- Cost per token: Is it financially sustainable at scale?

- Reliability in tool invocation: Does it consistently follow structured schemas?

- Context capacity: Can it handle long documents and historical memory?

Claude 3.5 Sonnet provides a strong middle ground across these dimensions, making it highly suitable for agentic AI systems that require both reasoning capability and predictable operational performance.

Why Claude 3.5 Sonnet?

Compared to lighter-weight models, Sonnet offers:

- Improved reasoning depth for multi-step workflows and structured planning

- Strong speed-to-cost efficiency, making it viable for large-scale deployment

- Large context window, enabling document-heavy use cases such as legal review, compliance audits, or knowledge-base agents

- Reliable and structured tool invocation, reducing parsing errors and malformed outputs

- Stable performance under enterprise workloads, including concurrent API requests

For most production environments, Sonnet delivers sufficient intelligence to handle:

- Tool-based decision loops

- Multi-turn conversational agents

- Workflow automation

- Document reasoning pipelines

It handles these workloads without the significantly higher cost typically associated with top-tier reasoning models.

In other words, it hits the optimal performance curve for organizations that need scalable agentic AI without overpaying for marginal reasoning improvements.

In summary, Claude 3.5 Sonnet is currently the most balanced model for building enterprise AI agents due to its speed, reasoning depth, and cost efficiency.

What Is the Core Architecture of a Claude AI Agent?

Enterprise-grade Claude agentic AI systems follow a layered, modular architecture. This modularity improves scalability, maintainability, observability, and security. Rather than treating the agent as a single prompt-response pipeline, mature systems separate responsibilities into well-defined layers.

Each layer performs a distinct function in the agent lifecycle, ensuring that reasoning, execution, and memory remain decoupled yet coordinated.

Layer 1: Input & Context Management

This layer prepares the reasoning environment before Claude processes the request. Poorly structured inputs can degrade performance, increase token costs, and cause tool invocation errors. Components include:

- User query (natural language input)

- System instructions (behavioral constraints and role definitions)

- Memory injection (previous context or retrieved knowledge)

- Guardrails and validation rules

- Compliance filters and redaction pipelines

- Prompt templates for consistency

Effective context management determines:

- How much information Claude sees

- How accurately it interprets intent

- Whether it adheres to enterprise policies

Overloading context increases cost and latency. Under-injecting context reduces accuracy. Proper context optimization directly impacts both performance and cost efficiency.
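One way to make this trade-off concrete is a context builder that always keeps the system instructions and the user query, then adds memory snippets only while a token budget allows. The sketch below is illustrative only: `build_context`, the word-count token estimate, and the budget value are assumptions made for this example, not part of any Claude SDK. A real system would use the provider's own token counting.

```python
def estimate_tokens(text: str) -> int:
    """Very rough token estimate (assumption): one token per whitespace-separated word."""
    return len(text.split())


def build_context(system: str, query: str, memories: list[str], budget: int) -> dict:
    """Assemble a prompt, adding memory snippets in priority order until the budget is hit."""
    used = estimate_tokens(system) + estimate_tokens(query)
    kept = []
    for snippet in memories:  # assumed to be sorted most relevant first
        cost = estimate_tokens(snippet)
        if used + cost > budget:
            break  # stop here so lower-priority snippets never displace higher ones
        kept.append(snippet)
        used += cost
    return {"system": system, "memory": kept, "query": query, "estimated_tokens": used}


context = build_context(
    system="You are a support assistant. Follow company policy.",
    query="What is the refund window?",
    memories=[
        "Refunds are allowed within 30 days.",
        "Shipping takes 5 business days.",
        "Gift cards never expire.",
    ],
    budget=25,
)
print(context["memory"], context["estimated_tokens"])
```

With a budget of 25 estimated tokens, the first two snippets fit and the third is dropped, which is the kind of explicit, inspectable decision that keeps cost and latency predictable.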

Layer 2: Reasoning Engine

In this layer, Claude functions as the cognitive core of the agent. Its responsibilities include:

- Task decomposition: Breaking complex instructions into manageable steps

- Intent analysis: Determining user goals

- Tool selection logic: Deciding whether to call an external function

- Plan generation: Creating structured execution paths

- Decision validation: Evaluating whether outputs meet criteria

The reasoning engine determines whether a task requires:

- Direct natural language response

- One or more tool calls

- Multi-step workflow execution

- Escalation to human oversight

This is where agentic behavior emerges. Instead of generating a single response, Claude may generate a structured plan, request tool execution, then refine outputs iteratively.

Layer 3: Tool Calling System

This layer enables action-taking and transforms Claude from a passive model into an operational system.

The tool layer may integrate with:

- API integrations (CRM, ERP, analytics platforms)

- Database queries (SQL or NoSQL systems)

- Webhooks

- Internal microservices

- CRM platforms

- Search engines

- Email or notification services

Tool definitions are typically described using structured JSON schemas. When Claude identifies that a tool is required, it outputs a structured function call rather than free-form text.

This separation ensures:

- Deterministic execution

- Reduced hallucination risk

- Auditable workflows

- Controlled system interaction

Tool calling is what allows a Claude AI agent to do more than generate text; it enables it to take measurable action.
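As an illustration, a tool definition can be written as a name, a description, and a JSON Schema for its inputs, which is the general shape Claude's tool use expects. The tool `get_order_status` and the hand-rolled `validate_input` helper below are hypothetical; a production system would more likely rely on a full JSON Schema validator and should confirm field names against the current API documentation.

```python
# Hypothetical tool, written in the name / description / input_schema shape.
ORDER_STATUS_TOOL = {
    "name": "get_order_status",
    "description": "Look up the current status of a customer order by its ID.",
    "input_schema": {
        "type": "object",
        "properties": {
            "order_id": {"type": "string"},
            "include_history": {"type": "boolean"},
        },
        "required": ["order_id"],
    },
}

# Minimal mapping from JSON Schema type names to Python types (deliberately incomplete).
_JSON_TYPES = {"string": str, "boolean": bool, "object": dict, "array": list}


def validate_input(tool: dict, args: dict) -> list[str]:
    """Return a list of problems; an empty list means the arguments pass this minimal check."""
    schema = tool["input_schema"]
    problems = [
        f"missing required field: {name}"
        for name in schema.get("required", [])
        if name not in args
    ]
    for name, value in args.items():
        spec = schema["properties"].get(name)
        if spec is None:
            problems.append(f"unexpected field: {name}")
            continue
        expected = _JSON_TYPES.get(spec.get("type"))
        if expected is not None and not isinstance(value, expected):
            problems.append(f"wrong type for {name}: expected {spec['type']}")
    return problems


print(validate_input(ORDER_STATUS_TOOL, {"order_id": "A-1"}))
print(validate_input(ORDER_STATUS_TOOL, {"include_history": True}))
```

Validating arguments before execution is what turns the schema into an enforced boundary rather than a hint to the model.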

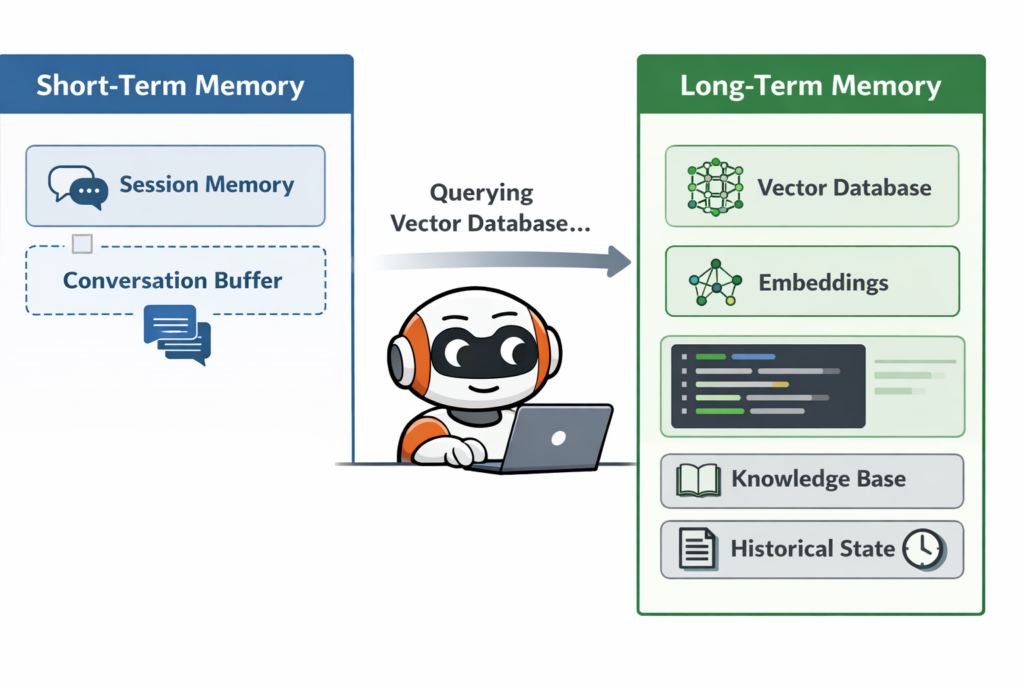

Layer 4: Memory & State

Memory is essential for continuity and contextual awareness. Without memory, every request becomes stateless and isolated. A McKinsey Global Institute study found that knowledge workers spend nearly 20% of their workweek searching for internal information, highlighting the need for persistent memory systems and retrieval architectures in AI agents.

With memory, agents can:

- Track conversations over time

- Store user preferences

- Maintain workflow states

- Reference historical decisions

Types of Memory:

- Short-term session memory (conversation context)

- Long-term memory via vector databases

- State tracking variables

- Audit logs

- Prompt version histories

Long-term memory often uses embeddings stored in vector databases to retrieve relevant context dynamically. This is critical for knowledge assistants, compliance agents, and research systems. Without memory architecture, agents cannot operate reliably across multi-step or multi-session workflows. Information fragmentation is a well-documented enterprise challenge.
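The retrieval idea can be sketched with a toy in-memory store. Everything here is a stand-in: `embed` uses word counts instead of a real embedding model, and `MemoryStore` is a hypothetical class that replaces an actual vector database. Only the pattern (embed, store, rank by similarity, inject the top results) carries over.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class MemoryStore:
    """In-memory stand-in for a vector database."""

    def __init__(self) -> None:
        self._items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> list[str]:
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


store = MemoryStore()
store.add("customer prefers email contact")
store.add("invoice 42 was paid in march")
store.add("customer asked about refund policy")
print(store.search("refund policy question", k=1))
```

The retrieved snippets would then be injected into the context for the next model call, which is how long-term memory reaches a model that is otherwise stateless.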

Layer 5: Output & Action Execution

This is the execution and impact layer, where reasoning becomes real-world effect.

Examples include:

- CRM updates

- Notification triggers

- Structured JSON responses

- Report generation

- Workflow automation

- Ticket creation

- Transaction approvals

At this stage, the system validates outputs and executes real system changes. This layer must include:

- Error handling

- Logging

- Retry logic

- Human approval checkpoints (if required)

Execution without safeguards can introduce operational risk. Therefore, validation and governance mechanisms are critical at this stage.
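Retry logic is one of the simpler safeguards to show in code. The sketch below retries a failing action with exponential backoff and re-raises once attempts are exhausted. `ToolError`, `execute_with_retry`, and the flaky CRM update are hypothetical names for illustration; the delays and attempt count are arbitrary.

```python
import time


class ToolError(Exception):
    """Raised by a tool when a call fails in a way that may succeed on retry."""


def execute_with_retry(action, *, attempts: int = 3, base_delay: float = 0.5, sleep=time.sleep):
    """Run `action`, retrying on ToolError with exponential backoff; re-raise after the last attempt."""
    for attempt in range(1, attempts + 1):
        try:
            return action()
        except ToolError:
            if attempt == attempts:
                raise  # out of attempts: surface the failure to the caller
            sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, then 1s, then 2s, ...


calls = {"count": 0}
delays = []  # records requested delays instead of actually sleeping


def flaky_update():
    """Hypothetical CRM update that fails twice before succeeding."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ToolError("CRM temporarily unavailable")
    return "ticket updated"


result = execute_with_retry(flaky_update, sleep=delays.append)
print(result, delays)
```

Passing `sleep` as a parameter keeps the example fast to run and makes the backoff schedule easy to check in tests.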

Tool Calling in Claude: How It Works

Tool calling allows Claude to select predefined structured functions instead of generating only natural language text. This dramatically improves reliability in enterprise systems. Reliability and governance are central to enterprise AI adoption. According to the IBM Global AI Adoption Index 2023, organizations cite data accuracy, governance, and risk management as top barriers to scaling AI systems.

Rather than saying, “I will look up that data,” Claude can formally request execution of a function.

Core Mechanism

- Define tools using JSON schema

- Claude identifies the appropriate tool

- System executes the tool

- Output is returned to Claude

- Claude composes final response

This loop may repeat multiple times for multi-step workflows.
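The execute-and-return steps can be sketched as a small dispatcher. The block shapes (`tool_use` with `id`, `name`, and `input`; `tool_result` with `tool_use_id` and `content`) follow the Claude tool-use message format as commonly documented, but they should be checked against the current API reference. The `get_weather` tool and the `TOOLS` registry are hypothetical.

```python
def get_weather(city: str) -> str:
    """Hypothetical tool implementation; a real one would call an external API."""
    return f"Sunny in {city}"


TOOLS = {"get_weather": get_weather}  # registry: tool name -> Python callable


def run_tool_calls(content_blocks: list[dict]) -> list[dict]:
    """Execute every tool_use block and build the matching tool_result blocks."""
    results = []
    for block in content_blocks:
        if block.get("type") != "tool_use":
            continue  # plain text blocks need no execution
        handler = TOOLS.get(block["name"])
        if handler is None:
            # Report unknown tools back to the model instead of crashing.
            results.append({
                "type": "tool_result",
                "tool_use_id": block["id"],
                "content": f"Unknown tool: {block['name']}",
                "is_error": True,
            })
            continue
        output = handler(**block["input"])
        results.append({"type": "tool_result", "tool_use_id": block["id"], "content": output})
    return results


# Hand-written example of an assistant turn that requests a tool.
assistant_content = [
    {"type": "text", "text": "Let me check the weather."},
    {"type": "tool_use", "id": "toolu_01", "name": "get_weather", "input": {"city": "Paris"}},
]
print(run_tool_calls(assistant_content))
```

The resulting `tool_result` blocks would be sent back as the next user message so the model can compose its final response.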

Why Tool Calling Matters

Structured invocation enables:

- Deterministic workflows

- Reduced hallucinations

- Auditable decision paths

- Reliable external integrations

- Clear separation between reasoning and execution

Instead of free-form text generation, the agent operates within defined boundaries. Tool calling is the backbone of any serious Claude agent framework, especially in enterprise environments where reliability, compliance, and traceability are non-negotiable.

By expanding beyond simple prompt-response patterns and implementing structured architecture, memory systems, and tool orchestration, organizations can transform Claude into the reasoning core of scalable, production-ready Claude AI agents capable of powering modern autonomous workflows.

What Are the Claude Agent Framework Options?

To build scalable Claude AI agents, API access alone is not enough; you need orchestration. Orchestration is the architectural layer that coordinates how the model thinks, acts, and interacts with external systems. It manages prompt construction, tool selection, execution logic, retry policies, memory updates, state transitions, logging, and error handling.

Without orchestration, what you have are isolated model calls, not a true Claude agent framework.

A single API request can generate a response. But a production-ready agent must:

- Maintain context across turns

- Decide when to call tools

- Execute structured functions

- Handle failures gracefully

- Log decisions for auditing

- Update memory stores

- Enforce governance constraints

Orchestration is the control plane that makes all of that possible. At a high level, there are two primary architectural approaches for orchestrating Claude agents:

What Are the Common Approaches?

1. LangChain

LangChain is one of the most widely used orchestration frameworks for building LLM-powered systems. It provides structured abstractions that simplify the development of agentic workflows without requiring teams to design every component from scratch.

LangChain includes built-in support for:

- Tool calling abstractions

- Memory modules

- Agent loops

- Multi-step workflows

- Multi-model coordination

- Prompt templates

- Output parsers

- Vector database integrations

By offering prebuilt primitives for these components, LangChain significantly reduces boilerplate code and accelerates experimentation.

For example, instead of manually writing logic to:

- Parse tool schemas

- Validate model outputs

- Retry failed executions

- Inject memory context

- Manage state transitions

developers can rely on framework-level constructs.

This makes LangChain especially attractive during early-stage development when teams are:

- Exploring agent patterns

- Testing reasoning chains

- Prototyping tool integration

- Iterating on memory strategies

It lowers the barrier to entry for building multi-step AI agents and helps teams move quickly from concept to working prototype.

However, abstraction comes with trade-offs. While frameworks simplify development, they may limit low-level control over execution paths, performance tuning, and governance policies, factors that become increasingly important at enterprise scale.

2. Custom Backend Orchestration (Node.js, Python, etc.)

The second approach involves designing your own orchestration layer using general-purpose backend technologies. This means building a fully custom control system around Claude using frameworks such as:

- Node.js (Express, Fastify)

- Python (FastAPI, Django)

- Serverless runtimes

- Microservice architectures

- Containerized deployments

In this model, orchestration is explicitly defined in your backend codebase.

You manually design:

- Tool schemas and validation layers

- Agent execution loops

- Memory injection and retrieval pipelines

- Retry and fallback mechanisms

- Error-handling policies

- Role-based governance controls

- Audit logging systems

- Observability and tracing infrastructure

Unlike a prebuilt framework, custom orchestration provides complete ownership of:

- Execution flow

- Performance optimization

- Security boundaries

- Compliance enforcement

- Cost management strategies

This approach requires more engineering effort upfront. However, it offers maximum flexibility and long-term scalability. For organizations operating in regulated industries, such as finance, healthcare, or enterprise SaaS, custom orchestration often becomes the preferred path because it allows deeper integration with existing systems and stricter operational oversight.

When to Use Frameworks

Framework-based orchestration is ideal when speed and experimentation are top priorities. It is particularly well suited for:

- Rapid prototyping

- Standardized agent pipelines

- Proof-of-concept builds

- Multi-model experimentation

- Internal tools

- Research environments

- Educational projects

- Innovation labs

Frameworks reduce development time and help teams quickly validate architectural patterns. They allow developers to focus more on prompt design, tool experimentation, and workflow logic rather than infrastructure plumbing.

If your primary goal is to test how Claude performs in multi-step workflows or to evaluate different agent strategies, a framework like LangChain can significantly accelerate progress.

However, as systems mature and requirements become more stringent, limitations around customization, monitoring, and cost predictability may surface.

When to Go Custom

Custom orchestration is typically preferred for production-grade deployments where reliability, governance, and scale are critical.

This approach is especially beneficial for:

- Enterprise security requirements

- High-scale production environments

- Complex compliance workflows

- Custom audit logging

- Advanced memory architectures

- Fine-grained observability

- Strict data residency controls

- Performance optimization needs

- Cost-efficiency at scale

In large organizations, orchestration must integrate with:

- Identity and access management systems

- Logging and monitoring platforms

- Data warehouses

- Internal APIs

- Approval workflows

- Incident management systems

A custom-built orchestration layer allows deep alignment with these operational systems.

For serious Claude agentic AI deployments, custom orchestration often provides stronger governance, clearer execution visibility, improved cost control, and greater long-term scalability.

Orchestration as a Strategic Layer

In enterprise environments, orchestration is not just about functionality; it’s about reliability, monitoring, accountability, and risk management.

A mature orchestration layer should provide:

- Structured logging of every model interaction

- Tool invocation tracing

- Token usage monitoring

- Alerting on abnormal behavior

- Guardrail enforcement

- Human-in-the-loop override capability

- Versioned prompt management

Without these controls, AI agents can become opaque and difficult to manage at scale. With them, Claude transitions from a powerful model into a governed reasoning engine embedded within enterprise infrastructure.

How to Build a Claude AI Agent (Step-by-Step)

This section covers how to build and use Claude AI agents in structured production environments. Building a Claude AI agent is not about writing a single prompt. It involves designing a system.

Step 1: Define the Agent Use Case

Before selecting models or tools, define the purpose clearly.

Common enterprise use cases:

- Internal knowledge assistant

- Customer-facing chatbot

- Workflow automation agent

- Compliance validation assistant

- Sales intelligence assistant

- Document analysis system

Clear use-case definition determines architecture complexity, tool requirements, and model selection.

Step 2: Choose the Right Model

Anthropic offers multiple Claude model tiers through the API.

- Opus: Deep reasoning and complex problem-solving

- Sonnet: Balanced production deployment

- Haiku: Lightweight automation and fast workflows

For most production-grade Claude AI agents, Sonnet provides a strong balance between reasoning depth and cost efficiency.

Model choice impacts:

- Latency

- Cost

- Accuracy

- Context handling capacity

Step 3: Define Tools

Agents become operational through tool integrations.

Common tools include:

- CRM APIs (Salesforce, HubSpot)

- Internal knowledge databases

- Vector search engines

- External web search

- Webhooks

- Email automation services

- ERP systems

Each tool must be defined with a structured JSON schema so Claude can reliably invoke it. Clear tool definitions reduce ambiguity and hallucination risk.

Step 4: Implement the Agent Loop

The agent loop is the core of any Claude AI agent.

Pseudo-Logic Flow
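A minimal sketch of such a loop in Python is shown here. To keep it runnable without credentials, the model is replaced by `fake_model`, a stub that requests one tool call and then answers; `lookup_order`, `TOOLS`, and `run_agent` are likewise hypothetical names. In a real agent, the stub would be swapped for an actual model call and the response fields checked against the API reference.

```python
def fake_model(messages: list[dict]) -> dict:
    """Stand-in for a model call: requests one tool, then answers once a result is present."""
    last = messages[-1]
    if last["role"] == "user" and isinstance(last["content"], str):
        return {
            "stop_reason": "tool_use",
            "content": [{"type": "tool_use", "id": "call_1", "name": "lookup_order",
                         "input": {"order_id": "A-17"}}],
        }
    result_text = last["content"][0]["content"]
    return {"stop_reason": "end_turn",
            "content": [{"type": "text", "text": f"Your order is {result_text}."}]}


def lookup_order(order_id: str) -> str:
    """Hypothetical tool; a real one would query an order system."""
    return "shipped"


TOOLS = {"lookup_order": lookup_order}


def run_agent(user_input: str, model=fake_model, max_steps: int = 5) -> str:
    """Call the model, execute requested tools, feed results back, and stop on a final answer."""
    messages = [{"role": "user", "content": user_input}]
    for _ in range(max_steps):
        response = model(messages)
        messages.append({"role": "assistant", "content": response["content"]})
        if response["stop_reason"] != "tool_use":
            # No tool requested: join the text blocks into the final answer.
            return "".join(b["text"] for b in response["content"] if b["type"] == "text")
        results = [
            {"type": "tool_result", "tool_use_id": b["id"],
             "content": TOOLS[b["name"]](**b["input"])}
            for b in response["content"] if b["type"] == "tool_use"
        ]
        messages.append({"role": "user", "content": results})  # return tool output to the model
    raise RuntimeError("agent exceeded max_steps without a final answer")


print(run_agent("Where is order A-17?"))
```

The `max_steps` cap is a deliberate design choice: it bounds cost and prevents a misbehaving model from looping indefinitely.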

In advanced systems, this loop may:

- Repeat multiple times

- Include validation checkpoints

- Escalate to human review

- Trigger fallback models

The loop is what transforms Claude into an agent rather than a chatbot.

Step 5: Add Monitoring & Observability

Production systems require oversight.

Essential monitoring components:

- Token usage tracking

- Tool error logging

- Latency monitoring

- Rate-limit handling

- Cost dashboards

- Audit trails

Without monitoring, even the best-designed Claude agent framework can become unstable at scale.
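As a rough illustration, several of these signals can be captured by wrapping each model call. `AgentMetrics` and `stub_model_call` are hypothetical, and the sketch assumes each call returns a `usage` dictionary with `input_tokens` and `output_tokens`; a real deployment would export these counters to its metrics backend rather than hold them in memory.

```python
import time
from dataclasses import dataclass, field


@dataclass
class AgentMetrics:
    """Accumulates basic counters for model calls."""
    calls: int = 0
    errors: int = 0
    input_tokens: int = 0
    output_tokens: int = 0
    latencies: list[float] = field(default_factory=list)

    def record(self, call, *args, **kwargs):
        """Run `call`, timing it and counting tokens from the usage dict it is assumed to return."""
        start = time.perf_counter()
        self.calls += 1
        try:
            result = call(*args, **kwargs)
        except Exception:
            self.errors += 1
            raise
        finally:
            # Latency is recorded for failed calls too.
            self.latencies.append(time.perf_counter() - start)
        self.input_tokens += result["usage"]["input_tokens"]
        self.output_tokens += result["usage"]["output_tokens"]
        return result


def stub_model_call(prompt: str) -> dict:
    """Hypothetical model call returning text plus a usage dict."""
    return {"text": "ok", "usage": {"input_tokens": len(prompt.split()), "output_tokens": 1}}


metrics = AgentMetrics()
metrics.record(stub_model_call, "summarize this quarterly report")
metrics.record(stub_model_call, "draft a reply")
print(metrics.calls, metrics.input_tokens, metrics.output_tokens, metrics.errors)
```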

How Can Non-Coders Build a Claude AI Agent?

Non-coders can build basic Claude-powered AI agents using no-code automation platforms that connect Claude API endpoints with structured tool definitions. This lowers the barrier to entry but comes with trade-offs.

Methods:

- Visual workflow builders

- Automation platforms (Zapier-style tools)

- Prebuilt connectors

- Drag-and-drop integrations

- Low-code dashboards

These tools allow users to:

- Trigger Claude on events

- Route outputs to systems

- Automate repetitive workflows

For example, a non-coder could build:

- A document summarization workflow

- An email drafting assistant

- A CRM update automation flow

Reality Check

While simple automation is achievable without coding, enterprise-grade agentic AI systems require:

- Backend orchestration

- Memory architecture

- Error handling logic

- Security layers

- Governance policies

No-code tools are useful for experimentation, but large-scale systems demand engineering rigor.

Multi-Agent Systems with Claude

Multi-agent systems involve multiple Claude-powered agents collaborating, delegating tasks, and sharing structured outputs to solve complex workflows.

Instead of a single generalist agent, you design specialized agents.

Example Structure

- Research Agent: Gathers raw information

- Analysis Agent: Evaluates and structures insights

- Execution Agent: Performs actions (API calls, updates)

Each agent operates with a focused responsibility.
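The hand-off between specialized agents can be sketched as a pipeline of functions that exchange structured data. All three agents below are stubs with hard-coded behavior, and `Finding` and `run_pipeline` are hypothetical names; in practice each function would wrap its own model calls and tools.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """Structured output passed from the research agent to the analysis agent."""
    source: str
    text: str


def research_agent(topic: str) -> list[Finding]:
    """Stub: a real research agent would call search tools and a model."""
    return [
        Finding("crm", f"{topic}: 12 open tickets"),
        Finding("billing", f"{topic}: invoice overdue"),
    ]


def analysis_agent(findings: list[Finding]) -> dict:
    """Stub: turns raw findings into a structured assessment."""
    overdue = any("overdue" in f.text for f in findings)
    return {"risk": "high" if overdue else "low", "evidence": [f.source for f in findings]}


def execution_agent(analysis: dict) -> str:
    """Stub: a real execution agent would call APIs; here it only names the chosen action."""
    return "open escalation ticket" if analysis["risk"] == "high" else "no action"


def run_pipeline(topic: str) -> str:
    """Research -> analysis -> execution, each step consuming the previous step's output."""
    return execution_agent(analysis_agent(research_agent(topic)))


print(run_pipeline("Acme Corp"))
```

Typed hand-offs such as `Finding` make each agent's contract explicit, which simplifies testing each agent in isolation.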

Advanced Concepts

- Agent hierarchies (manager-agent model)

- Supervisory controllers

- Task delegation systems

- Consensus evaluation models

- Redundancy-based validation

Multi-agent systems improve:

- Reliability

- Task specialization

- Accuracy in complex reasoning chains

They are particularly useful in large-scale enterprise automation environments.

Autonomous AI Systems Using Claude

Autonomous AI systems continuously perceive inputs, reason over data, select actions via tool invocation, and update internal memory without human intervention, using Claude as the reasoning core. True autonomy requires:

- Continuous monitoring

- Feedback loops

- State tracking

- Execution capabilities

Claude provides the reasoning layer; the rest must be engineered.

Enterprise Caution

Autonomy introduces risk. Organizations must implement:

- Governance layers

- Human-in-the-loop oversight

- Safety thresholds

- Escalation policies

- Decision auditing

Autonomy without governance creates operational, legal, and reputational risk.
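A safety threshold with escalation can be as simple as a policy table consulted before any action runs. The actions, limits, and the `gate` function below are hypothetical and only illustrate the pattern: unknown actions are blocked, actions above a threshold wait for a human, and everything else proceeds.

```python
from enum import Enum


class Decision(Enum):
    EXECUTE = "execute"
    NEEDS_APPROVAL = "needs_approval"
    BLOCK = "block"


# Hypothetical policy: maximum amount each action may involve without human review.
AUTO_APPROVE_LIMITS = {"send_notification": float("inf"), "issue_refund": 100.0}

audit_log: list[dict] = []  # every decision is recorded for later auditing


def gate(action: str, amount: float = 0.0) -> Decision:
    """Decide whether an agent-proposed action may run, needs approval, or is blocked."""
    limit = AUTO_APPROVE_LIMITS.get(action)
    if limit is None:
        decision = Decision.BLOCK  # actions outside the policy are never executed
    elif amount > limit:
        decision = Decision.NEEDS_APPROVAL  # above threshold: escalate to a human
    else:
        decision = Decision.EXECUTE
    audit_log.append({"action": action, "amount": amount, "decision": decision.value})
    return decision


print(gate("issue_refund", 40.0), gate("issue_refund", 250.0), gate("delete_account"))
```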

Claude Code AI Agents: What Does That Mean?

“Claude code AI agents” refers to AI agents built with Claude that can generate code, execute scripts, and interact with development environments via tool APIs.

These agents combine reasoning with execution capabilities.

Capabilities

- Generate production code

- Refactor legacy systems

- Execute CI/CD tasks

- Trigger DevOps workflows

- Run infrastructure scripts

Advanced Use Cases

- Code refactoring at scale

- Automated security scans

- Infrastructure automation

- Deployment orchestration

- Log analysis and debugging

These systems combine tool calling + execution environments, creating highly technical autonomous workflows.

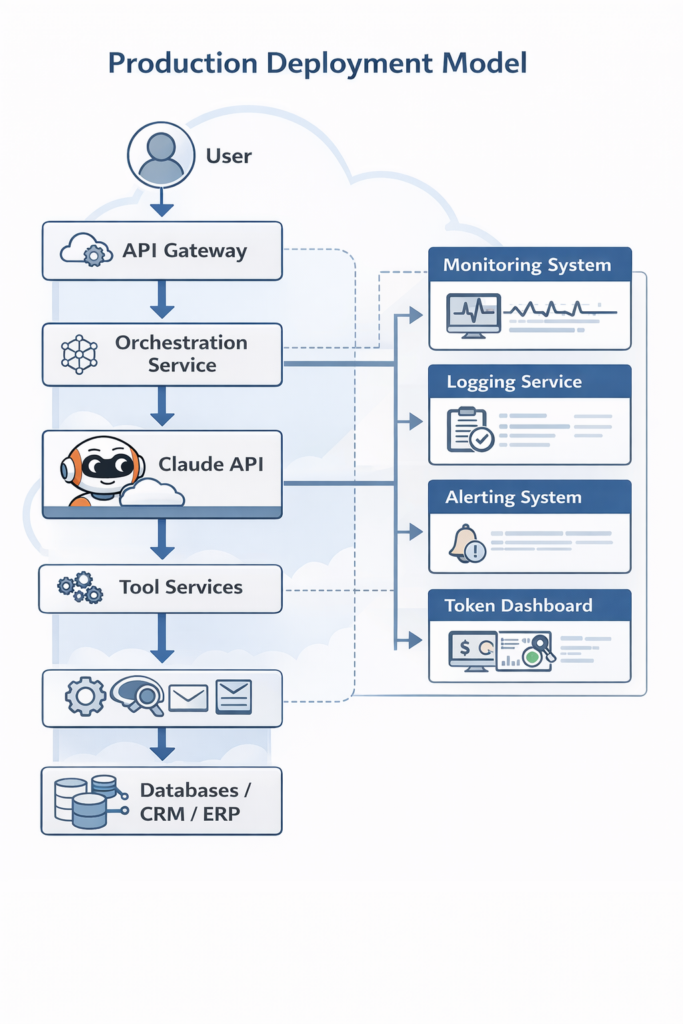

Performance & Deployment Considerations

Building scalable Claude AI agents requires deliberate infrastructure planning. Agent systems consume tokens, execute tools, and manage state, all of which impact performance.

Key Factors

- Token optimization

- Context window management

- Rate-limit compliance

- Horizontal scaling

- Intelligent batching

- Caching repeated outputs

Improper token handling can dramatically increase cost.
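Caching repeated outputs is one of the easier optimizations to illustrate. The sketch below is an exact-match cache keyed by a hash of the model name and prompt; `ResponseCache` is a hypothetical class and `expensive_call` stands in for a billed model call. It assumes identical prompts should return identical answers, which does not hold for every workload.

```python
import hashlib
import json


class ResponseCache:
    """Exact-match cache keyed by a hash of the model name and prompt."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        payload = json.dumps({"model": model, "prompt": prompt}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, prompt: str, call) -> str:
        """Return a cached response if present; otherwise invoke `call` and store the result."""
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        self._store[key] = call(prompt)
        return self._store[key]


def expensive_call(prompt: str) -> str:
    """Stand-in for a billed model call."""
    return prompt.upper()


cache = ResponseCache()
cache.get_or_call("example-model", "summarize policy", expensive_call)
cache.get_or_call("example-model", "summarize policy", expensive_call)
print(cache.hits, cache.misses)
```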

Deployment Options

- Serverless architecture (AWS Lambda, Cloud Functions)

- Containerized microservices

- Kubernetes-based clusters

- Hybrid cloud systems

Architecture choice depends on:

- Traffic volume

- Compliance requirements

- Latency sensitivity

Enterprise Essentials

- Observability dashboards

- Guardrails and validation layers

- Failover strategies

- Multi-region redundancy

- Real-time cost monitoring

- Prompt version control

Enterprise-grade Claude agentic AI systems require the same rigor as distributed software systems.

Is Claude AI Free for Agent Development?

Claude offers limited free access in chat interfaces. However, agent development via the API is billed based on token usage.

Cost Considerations

- Model tier pricing

- Input token billing

- Output token billing

- Tool execution overhead

- Infrastructure costs

Cost-Control Strategies

- Prompt optimization

- Context trimming

- Smart model tier selection

- Caching frequent responses

- Monitoring usage logs

Careful optimization ensures predictable scaling costs.
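The effect of context trimming on spend can be estimated with simple arithmetic. In the sketch below, the per-million-token prices and the traffic figures are placeholders chosen for illustration, not actual pricing; current rates should be taken from the provider's pricing page. `estimate_monthly_cost` is a hypothetical helper.

```python
def estimate_monthly_cost(
    requests_per_day: int,
    input_tokens: int,
    output_tokens: int,
    input_price_per_mtok: float,
    output_price_per_mtok: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend from average tokens per request and per-million-token prices."""
    per_request_micro = input_tokens * input_price_per_mtok + output_tokens * output_price_per_mtok
    return round(per_request_micro * requests_per_day * days / 1_000_000, 2)


# Placeholder prices (assumption): 3.00 per million input tokens, 15.00 per million output tokens.
baseline = estimate_monthly_cost(10_000, 4_000, 500, 3.0, 15.0)
trimmed = estimate_monthly_cost(10_000, 2_000, 500, 3.0, 15.0)  # same traffic, half the context
print(baseline, trimmed)
```

Under these placeholder numbers, halving the injected context cuts the estimate from 5850.0 to 4050.0 per month, which shows why input size is often the first lever to examine.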

Who Operates Claude AI?

Claude is developed and operated by Anthropic, an AI research and safety-focused organization dedicated to building aligned AI systems.

Common Misconceptions About Claude AI Agents

Many misunderstandings slow down proper system design.

- Claude is not automatically autonomous

- Agents require orchestration logic

- Tool calling does not equal autonomy

- Long context does not equal persistent memory

- A single prompt does not create an agent

- Memory must be engineered, not assumed

Understanding these distinctions prevents architectural mistakes and reduces deployment failures.

Enterprise Use Cases of Claude AI Agents

When properly architected, Claude AI agents can power high-value enterprise systems. The productivity impact of generative AI is measurable. According to McKinsey research on generative AI (2023), generative AI could add $2.6 to $4.4 trillion annually to the global economy, largely driven by productivity gains across enterprise knowledge work.

Common use cases include:

- Internal knowledge copilots

- CRM automation agents

- Compliance monitoring systems

- Document analysis pipelines

- Customer service orchestration agents

- Procurement intelligence systems

- Risk analysis assistants

These use cases benefit from:

- Structured reasoning

- Tool execution

- Memory systems

- Governance frameworks

How Dextralabs Designs Enterprise-Grade Claude AI Agents

As an AI consulting company, Dextralabs builds production-ready Claude agentic AI systems using a structured, engineering-first methodology designed specifically for scalability, compliance, performance, and long-term maintainability. Rather than approaching AI development as a series of isolated experiments, Dextralabs treats agent systems as mission-critical infrastructure that must integrate cleanly into enterprise environments.

Enterprise AI agents are not just prompt wrappers around large language models. They are distributed systems that must:

- Operate reliably under load

- Maintain contextual continuity

- Execute secure tool calls

- Comply with governance policies

- Provide full observability

- Scale across departments and use cases

Dextralabs designs agent ecosystems with these operational realities in mind.

Dextralabs Agent Development Approach

Dextralabs follows a structured lifecycle that moves from strategic design to production hardening.

1. Use Case Decomposition

Every engagement begins with deep use case analysis.

Rather than immediately selecting a model or framework, Dextralabs decomposes business requirements into:

- Decision boundaries

- Automation opportunities

- Tool dependencies

- Data sensitivity levels

- Compliance constraints

- Failure scenarios

This decomposition clarifies:

- Where agentic reasoning is appropriate

- Where deterministic automation is preferable

- Where human oversight must remain

This phase ensures that AI is applied with precision, not overextended beyond its reliability threshold.

2. Model Benchmarking (Claude vs GPT)

Dextralabs evaluates performance across leading models, including Claude and GPT variants, to determine optimal deployment strategy.

Benchmarking criteria for the Claude vs GPT comparison include:

- Reasoning depth

- Tool invocation accuracy

- Structured output reliability

- Latency under load

- Token efficiency

- Context window utilization

- Cost-performance ratio

Rather than defaulting to a single model, Dextralabs frequently designs multi-LLM architectures, assigning models to roles based on strengths. For example:

- Claude for structured reasoning and compliance-sensitive workflows

- GPT for creative generation or auxiliary summarization

This evidence-based model selection ensures performance alignment with business objectives.

3. Architecture Blueprinting

Before implementation begins, Dextralabs produces a detailed architectural blueprint.

This includes:

- Orchestration layer design

- Tool integration mapping

- Memory architecture (short-term + long-term)

- Vector database strategy

- API gateway integration

- Security boundaries

- Human-in-the-loop checkpoints

- Observability pipelines

Architecture decisions are documented and validated against scalability targets and regulatory requirements. This blueprint-first methodology prevents architectural drift and reduces technical debt as systems grow.

4. Tool Schema Engineering

Tool calling is central to enterprise-grade agents. Dextralabs engineers structured tool schemas that:

- Enforce strict parameter validation

- Limit execution scope

- Reduce hallucination risk

- Support auditability

- Align with internal APIs

Tool schema engineering ensures that agents operate within defined boundaries rather than relying on free-form interpretation. Each tool definition is treated as a contract between the reasoning engine and execution environment.

5. Secure Deployment

Deployment strategy is designed around enterprise infrastructure realities.

Dextralabs supports:

- Cloud-native deployments

- VPC-isolated environments

- On-prem integrations

- Role-based access controls

- API authentication layers

- Data encryption in transit and at rest

Security is embedded at every layer, from prompt management to tool execution endpoints. This ensures that Claude agents operate safely within regulated and high-security environments.

6. Governance & Monitoring

Enterprise AI systems must be observable and controllable.

Dextralabs integrates:

- Prompt version tracking

- Tool invocation logging

- Execution tracing

- Token usage monitoring

- Error rate analytics

- Escalation triggers

- Human override workflows

Governance frameworks ensure that agent behavior remains transparent and auditable.

Monitoring pipelines allow teams to detect:

- Performance degradation

- Unexpected tool usage

- Policy violations

- Cost anomalies

This transforms AI from a black-box system into a managed operational asset.

7. Performance Optimization

Once deployed, optimization becomes continuous.

Dextralabs refines:

- Prompt efficiency

- Context window utilization

- Memory retrieval accuracy

- Tool invocation precision

- Caching strategies

- Latency reduction techniques

Optimization balances three competing factors:

- Intelligence

- Speed

- Cost

Through iterative refinement, agents achieve stable, cost-efficient performance under real-world usage conditions.

8. Observability Integration

Enterprise agents must integrate with existing observability ecosystems.

Dextralabs connects AI systems to:

- Logging platforms

- Metrics dashboards

- Incident management systems

- Compliance reporting tools

Full observability ensures that stakeholders can measure:

- Agent effectiveness

- ROI impact

- Operational risk exposure

- System health

This integration is essential for scaling agent ecosystems across business units.

Strategic Positioning

Dextralabs focuses on building durable AI infrastructure rather than short-lived prototypes.

Core positioning includes:

- Multi-LLM expertise

- Production-grade architecture

- Compliance-aware AI systems

- Scalable agent ecosystems

- Enterprise-ready orchestration layers

- Structured tool orchestration frameworks

Rather than building experimental demos or superficial chatbot integrations, the emphasis is on operationally resilient AI infrastructure designed to support real-world workloads.

From Experimentation to Infrastructure

Many organizations begin their AI journey with isolated experiments. While useful for exploration, these prototypes rarely account for:

- Governance requirements

- Observability standards

- Cost management

- Long-term scalability

- Cross-system integration

AI is no longer experimental. According to the McKinsey Global Survey on AI (2023), 55% of organizations report using AI in at least one business function, up significantly from previous years, signaling a clear shift from experimentation to operational deployment.

Dextralabs bridges the gap between experimentation and enterprise deployment.

Looking to build production-ready Claude agents instead of experimental demos? Dextralabs architects, deploys, and optimizes enterprise AI agent ecosystems designed for real-world scale, where reliability, compliance, and measurable performance are non-negotiable.

Conclusion

Claude AI agents are not standalone models; they are deliberately engineered systems composed of multiple coordinated layers. When combined with structured tool calling, persistent memory frameworks, orchestration logic, observability pipelines, and governance controls, Claude evolves from a conversational model into the reasoning core of scalable agentic AI infrastructure. These architectures can power enterprise automation, intelligent decision support systems, and autonomous multi-step workflows. For organizations moving beyond prototypes into production environments, architectural design, reliability engineering, and operational safeguards matter far more than prompt experimentation alone.

FAQs:

Q. What is the Claude user agent?

The term “Claude user agent” may refer to HTTP client identifiers used when Claude-powered applications make API requests, not an autonomous AI entity.

Q. What are the top 5 AI agents?

This depends on use case. Popular categories include Claude-based agents, GPT-based agents, open-source LLM agents, AutoGPT-style systems, and enterprise workflow agents.

Q. Is Claude agent free?

Claude chat access may offer limited free usage, but API-based Claude AI agents are paid via token-based billing.

Q. Is Claude an AI agent?

No, Claude is a large language model. It becomes part of an AI agent when combined