| TL;DR: The five best OpenClaw alternatives for secure AI agent deployment in 2026 are NanoClaw, PicoClaw, TrustClaw, NanoBot, and IronClaw. Each solves a different production problem, from containerized compliance to edge IoT to enterprise-scale orchestration. Read on to find which one fits your stack. |

The Gap Nobody Talks About

There’s a moment every engineering team hits sooner or later. The demo worked. The stakeholders were impressed. The prototype ran clean in your sandbox environment for three straight weeks. So you gave the green light to move it into production.

Then things got complicated.

The agent that happily processed test tickets started making calls to systems it probably shouldn’t touch. The audit team asked for logs that didn’t exist in the format they needed. A dependency update broke two integrations at once. And somewhere in a security review, someone asked a simple question that turned out to have no clean answer: “What exactly does this agent have access to?”

This isn’t a failure of the idea. It’s a failure of the tool.

Frameworks like OpenClaw were built to make autonomous AI agents accessible, and they succeeded. Getting from zero to a working agent in an afternoon is genuinely useful. But that same accessibility, that openness, becomes a liability the moment you’re dealing with real data, real compliance requirements, and real consequences.

At Dextra Labs, we’ve watched this pattern play out across enough enterprise deployments to know: the gap between a working prototype and a production-ready agent isn’t a gap in ambition. It’s a gap in architecture. This article is about how to close it.

What are the 5 Best OpenClaw Alternatives for Production in 2026?

A quick note before we dive in: these frameworks aren’t competing for the same use case. The best one for a healthcare enterprise is almost certainly not the best one for an IoT startup. Read through all five before you make a decision.

1. NanoClaw — When Security Boundaries Are Non-Negotiable

If you’re in a regulated industry and the phrase “the agent has filesystem access” keeps your security team up at night, NanoClaw is probably where your conversation should start.

NanoClaw runs agents inside containers. That’s not just a deployment detail; it’s the entire security model. Each agent lives in its own isolated execution environment with explicitly declared permissions. Filesystem access is off by default. System exposure is minimized. If one agent is compromised, the blast radius stops at the container boundary.

In practice, this means a few things. Your security team can point to a concrete isolation boundary during an audit. Your compliance reviewers can map agent behavior to specific permission declarations. And if something goes wrong, the forensics story is clean because the execution environment was constrained from the start.
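To make “explicitly declared permissions” and “off by default” concrete, here is a minimal Python sketch of a deny-by-default permission check. It is not NanoClaw’s actual API or manifest format; `AgentPermissions`, `is_allowed`, and the host name are hypothetical names chosen for illustration, and in a real deployment the container runtime enforces the boundary rather than application code.

```python
import posixpath
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPermissions:
    """Hypothetical manifest: every capability is off unless declared."""
    filesystem_roots: frozenset = frozenset()  # empty set = no filesystem access
    network_hosts: frozenset = frozenset()     # empty set = no outbound calls
    allow_shell: bool = False


def _within(root: str, target: str) -> bool:
    """True if target resolves to root or to a path beneath it."""
    root = posixpath.normpath(root)
    target = posixpath.normpath(target)  # collapses ".." segments first
    return target == root or target.startswith(root.rstrip("/") + "/")


def is_allowed(perms: AgentPermissions, action: str, target: str = "") -> bool:
    """Grant an action only when the manifest explicitly declares it."""
    if action == "read_file":
        return any(_within(root, target) for root in perms.filesystem_roots)
    if action == "http_request":
        return target in perms.network_hosts
    if action == "shell":
        return perms.allow_shell
    return False  # anything undeclared or unknown is denied


# Hypothetical agent that may only call one internal host.
ticket_agent = AgentPermissions(network_hosts=frozenset({"tickets.internal.example"}))
print(is_allowed(ticket_agent, "http_request", "tickets.internal.example"))  # True
print(is_allowed(ticket_agent, "read_file", "/etc/passwd"))                  # False
```

The useful property for an audit is that the manifest is data: a reviewer can read what the agent is allowed to do without reading the agent’s code.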

What it takes to run NanoClaw well

None of this is free. Container-based isolation requires container expertise — if your team hasn’t worked with orchestration platforms before, the setup learning curve is real. And configuring containers incorrectly can actually undermine the security benefits you’re trying to capture.

Dextralabs’ Secure Agent Readiness Assessment (SARA) consistently rates NanoClaw highest on security architecture and compliance alignment among the five frameworks on this list. For teams in healthcare, financial services, or any other heavily regulated sector, it’s our first recommendation. Just make sure you have the DevOps depth to implement it correctly.

| Best for: – Compliance-driven enterprises – Regulated sectors (healthcare, finance, legal) – Teams with DevOps infrastructure |

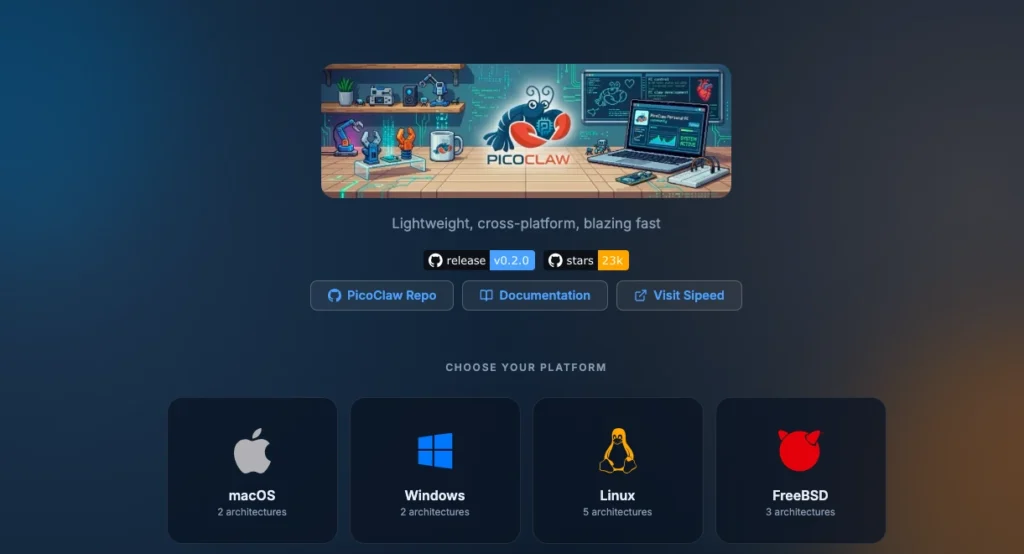

2. PicoClaw — The One That Disappears Into Your Infrastructure

Not every AI agent deployment lives in a data center. Some of the most interesting work happening with autonomous agents right now is out at the edge — on manufacturing floors, inside remote monitoring systems, on hardware that has maybe 50MB of memory to work with on a good day.

PicoClaw was built for exactly that environment.

Written in Go, it has a memory footprint under 10MB and boot times measured in milliseconds. It disappears into your infrastructure rather than imposing on it. And because Go produces lean, self-contained binaries with minimal runtime dependencies, the vulnerability surface is naturally small — not because of careful configuration, but because there’s just not much there to exploit.

Where PicoClaw runs out of road

The tradeoff is capability. PicoClaw’s minimal footprint means a minimal ecosystem. If you need enterprise-grade observability integrations, role-based access control, or the kind of compliance tooling that a regulated deployment requires, you’ll be building that yourself. It doesn’t come with the framework.

Think of PicoClaw as a very good engine in a very light chassis. Excellent for what it’s designed for. Not the right choice if you need it to do things it was never meant to do.

| Best for: – Edge compute and IoT automation – Resource-constrained hardware – Distributed infrastructure pipelines |

3. TrustClaw — For Teams That Need to Move Fast Without a Platform Engineer

There’s a real segment of the market that gets overlooked in conversations about AI agent security: teams that absolutely need to deploy something, don’t have a dedicated DevOps function, and can’t spend three months standing up infrastructure before they can test a hypothesis.

TrustClaw is for them.

It’s a managed cloud platform. You don’t stand up the infrastructure — TrustClaw handles it. Security controls are applied at the platform level. Scaling is handled for you. The setup experience is genuinely close to “configure a few things, connect your LLM provider, and go.”

The question you need to ask before you commit

Here’s the honest conversation: managed means you’re trusting a third party with your agent’s execution environment. For a lot of organizations, especially those dealing with sensitive customer data, that creates a compliance problem.

Can you get a Data Processing Agreement? Does TrustClaw’s infrastructure meet your data residency requirements? Can you access the audit logs your compliance team will eventually ask for? These aren’t hypothetical questions — they’re the ones that will come up when your security review hits managed infrastructure.

For proof-of-concept work, non-critical automation, and teams evaluating what AI agents can do for their organization, TrustClaw is excellent. For production workloads in regulated industries, we’d want to see the compliance answers first.

| Best for: – Pilots and proof-of-concept – Non-technical teams – Automation without infrastructure overhead |

4. NanoBot — The Framework That Respects Your Intelligence

Most AI agent frameworks treat their security model as something the vendor has figured out so you don’t have to think about it. NanoBot takes the opposite position: it gives you a clean, auditable Python codebase and trusts you to implement the security model that fits your context.

This is either a strength or a weakness depending on who you are.

If you’re a startup or an R&D team that needs a framework you can fully understand, fully modify, and fully audit — NanoBot is genuinely refreshing. The codebase is small enough that a capable engineer can read it end-to-end. There’s no magic happening in the parts you can’t see. When you put it in front of a security reviewer, you can answer their questions because you actually understand what’s in the box.

The production responsibility that comes with this

What NanoBot doesn’t give you out of the box is production hardening. There’s no authentication middleware, no sandboxed execution environment, no centralized logging infrastructure baked in. These aren’t oversights — they’re deliberate. The framework is built to be extended.

At Dextralabs, we deploy NanoBot with what we call our Layered Security Model: API gateway → authentication middleware → sandboxed execution → centralized logging → continuous monitoring. Each layer adds defense in depth. Without it, NanoBot in production carries real risk. With it, the combination is genuinely solid.

| Best for: – Startups and R&D teams – Custom AI workflow development – Teams who value auditability and control |

5. IronClaw — When You’re Done Experimenting and Need It to Scale

If NanoBot is the framework for teams who want to build their own path, IronClaw is the framework for teams who’ve already walked that path a few times and want something more structured.

IronClaw is pipeline-oriented. Every agent action follows a declared workflow. Reusable tool components. Modular architecture. Orchestration logic that’s designed to grow with organizational complexity rather than collapse under it. For engineering teams that take software architecture seriously, IronClaw feels like it was built by people who think the same way they do.

That structure matters for security, too. When agent behavior follows declared pipelines rather than open-ended reasoning chains, auditing becomes tractable. You can point to a workflow definition and say: this is what the agent is allowed to do, and this is the log showing it did exactly that.
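As a rough illustration of why declared pipelines make auditing tractable, the sketch below runs steps only in their declared order and logs every attempt, including the ones it blocks. This is not IronClaw’s API; `PipelineRunner`, `PipelineViolation`, and the refund workflow are hypothetical names used only to show the idea.

```python
from datetime import datetime, timezone

# Hypothetical workflow declaration: the only steps allowed, in this order.
REFUND_PIPELINE = ("fetch_ticket", "check_policy", "issue_refund")


class PipelineViolation(Exception):
    """Raised when an agent attempts a step the workflow does not declare next."""


class PipelineRunner:
    def __init__(self, declared_steps):
        self.declared_steps = tuple(declared_steps)
        self.position = 0
        self.audit_log = []

    def run_step(self, name, handler):
        # Work out which step the declaration says should come next.
        expected = (
            self.declared_steps[self.position]
            if self.position < len(self.declared_steps)
            else None
        )
        allowed = name == expected
        # Log every attempt, allowed or not, before doing anything else.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "step": name,
            "expected": expected,
            "allowed": allowed,
        })
        if not allowed:
            raise PipelineViolation(f"{name!r} attempted, {expected!r} expected")
        result = handler()
        self.position += 1
        return result


runner = PipelineRunner(REFUND_PIPELINE)
runner.run_step("fetch_ticket", lambda: {"ticket": 42})
try:
    runner.run_step("issue_refund", lambda: "refunded")  # skips check_policy
except PipelineViolation as exc:
    print("blocked:", exc)
```

The declaration and the log line up one to one, which is what lets a reviewer compare “what was allowed” against “what happened.”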

What you’re signing up for

IronClaw is the heaviest framework on this list. The setup investment is meaningful. It’s not appropriate for edge deployments, rapid prototyping, or teams that need to move fast with minimal infrastructure. The payoff is a foundation that doesn’t require rearchitecting when you go from 10 agents to 100.

For enterprise teams migrating from OpenClaw with complex orchestration requirements, IronClaw is the most structurally compatible transition we’ve seen. It’s Dextralabs’ top recommendation for large-scale, compliance-sensitive enterprise deployments.

| Best for: – Large enterprise – Complex multi-step AI workflows – Compliance-sensitive orchestration at scale |

Side-by-Side: How the 5 Frameworks Compare

Here’s how the five alternatives stack up across the dimensions that actually matter in a production deployment, based on Dextralabs’ SARA assessment:

| Framework | Best Use Case | Security | Setup Effort | Best Sector | Dextralabs Rating |
| --- | --- | --- | --- | --- | --- |
| NanoClaw | Compliance-driven enterprise | High | Medium | Finance, Healthcare | ★★★★★ |
| PicoClaw | Edge / IoT automation | Medium | Low | Manufacturing, IoT | ★★★★ |
| TrustClaw | Pilots, non-technical teams | Managed | Very Low | SMB, Startups | ★★★ |
| NanoBot | Custom workflows (R&D) | Medium* | Medium | Startups, ML teams | ★★★★ |
| IronClaw | Enterprise orchestration | High | High | Enterprise, Regulated | ★★★★★ |

* NanoBot rating assumes Dextralabs Layered Security Model is implemented. Ratings reflect SARA assessment, March 2026.
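If you want to use the table as a first-pass filter, one option is to encode it as data and rule out frameworks that miss your constraints. The sketch below copies the Security and Setup columns from the table; the `shortlist` function and the ordering of setup levels are our own illustrative assumptions, not part of the SARA assessment.

```python
# Security and Setup values copied from the comparison table above.
FRAMEWORKS = {
    "NanoClaw": {"security": "High", "setup": "Medium"},
    "PicoClaw": {"security": "Medium", "setup": "Low"},
    "TrustClaw": {"security": "Managed", "setup": "Very Low"},
    "NanoBot": {"security": "Medium*", "setup": "Medium"},
    "IronClaw": {"security": "High", "setup": "High"},
}

# Assumed ordering of setup effort, lowest first.
SETUP_ORDER = ["Very Low", "Low", "Medium", "High"]


def shortlist(required_security, max_setup):
    """Keep frameworks whose security label is acceptable and whose
    setup effort does not exceed the stated ceiling."""
    limit = SETUP_ORDER.index(max_setup)
    return [
        name
        for name, row in FRAMEWORKS.items()
        if row["security"] in required_security
        and SETUP_ORDER.index(row["setup"]) <= limit
    ]


print(shortlist({"High"}, "Medium"))  # ['NanoClaw']
print(shortlist({"High"}, "High"))    # ['NanoClaw', 'IronClaw']
```

A filter like this only narrows the field. The sections on each framework explain the tradeoffs the labels can’t capture.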

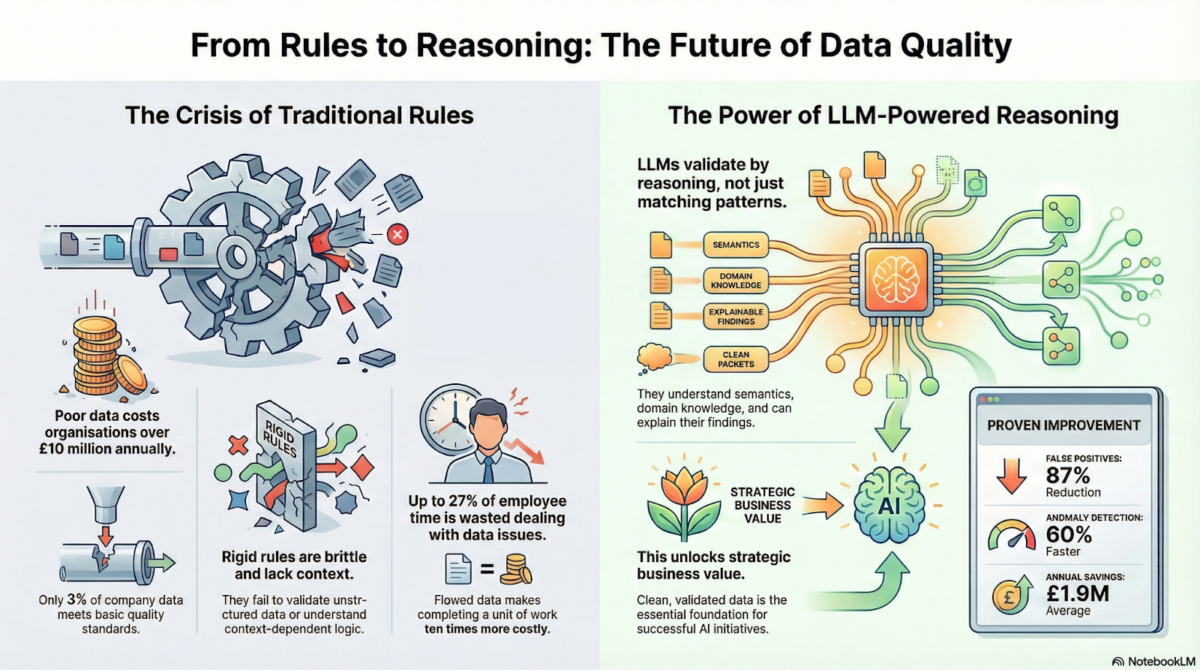

Why Teams Are Looking Beyond OpenClaw

Let’s be honest about what OpenClaw is. It’s a powerful, well-designed tool that gives AI agents broad system access — filesystem read/write, shell execution, browser control, email and calendar integration. For a developer building a personal assistant or testing an automation concept, that’s exactly what you want.

For an enterprise security team? It’s a list of concerns.

Here’s what we consistently hear from teams that have hit the wall with OpenClaw in production:

- Expanded attack surface — when an agent can touch your filesystem and run shell commands, any vulnerability in the agent stack is a vulnerability in your infrastructure

- Prompt injection risk — a malicious instruction buried in an email, a webpage, or a document your agent reads can redirect its behavior in ways you never intended

- Audit trail gaps — regulated industries need demonstrable logs of what an agent did, when, and why. Unstructured logging doesn’t satisfy a compliance reviewer

- Dependency sprawl — more dependencies mean more surface area for vulnerabilities, and more complexity when something needs to be patched fast

None of these are bugs in OpenClaw. They’re natural consequences of a framework built for exploration rather than production hardening. The good news is that several teams have been building with exactly this problem in mind.
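Of the four concerns in that list, the audit trail gap is the easiest to make concrete. The sketch below shows one way to record what an agent did, when, and why as a structured JSON line instead of free-form text. The field names and the `audit_record` helper are hypothetical; your compliance team’s required schema may differ.

```python
import json
from datetime import datetime, timezone


def audit_record(agent_id, action, target, reason, outcome):
    """Serialize one agent action as a single JSON line."""
    return json.dumps(
        {
            "timestamp": datetime.now(timezone.utc).isoformat(),  # when
            "agent_id": agent_id,                                 # who
            "action": action,                                     # what
            "target": target,
            "reason": reason,                                     # why
            "outcome": outcome,
        },
        sort_keys=True,
    )


line = audit_record(
    "ticket-agent-1",
    "http_request",
    "tickets.internal.example",
    "customer asked for order status",
    "allowed",
)
print(line)
```

Because every record has the same keys, it can be filtered and aggregated by whatever log tooling you already run.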

Before comparing the alternatives, it’s worth spending a moment on what we actually mean when we say “lightweight,” because it’s a word that gets thrown around a lot and usually means very little.

What “Lightweight” Actually Means in a Production Context

Ask ten engineers what makes a framework lightweight and you’ll get ten answers. Small binary? Few dependencies? Fast boot? Low memory? All of the above?

At Dextralabs, we’ve landed on a definition that’s more useful for production decision-making. A lightweight AI agent framework is one that minimizes four specific things: dependency count, compliance validation surface, time-to-patch when a vulnerability is discovered, and privilege footprint. That last one is the most important and the most overlooked.

| The Dextralabs Definition: Lightweight = fewer dependencies + smaller compliance surface + faster patch cycles + minimal privilege footprint. It’s not about binary size. It’s about how much of your organization a compromised agent can touch. |

Keep that framing in mind as you weigh the five alternatives.

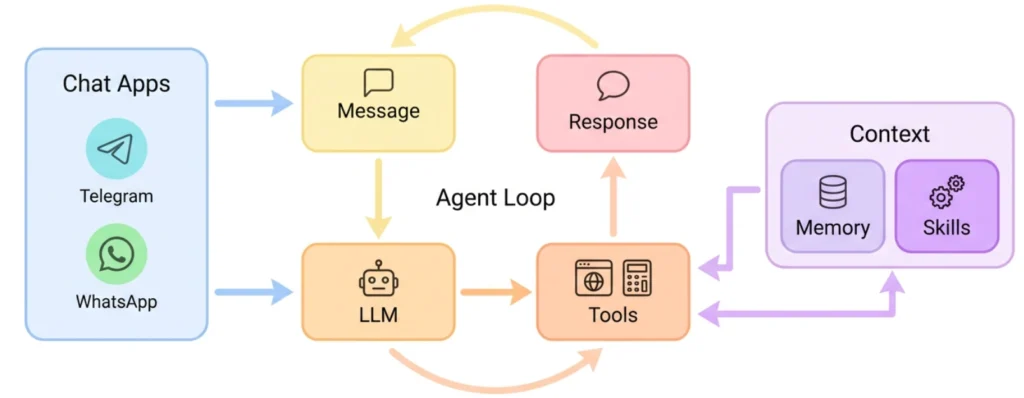

How Dextralabs Evaluates These Frameworks

We don’t pick frameworks based on GitHub stars or how polished the landing page looks. At Dextralabs, we use a structured evaluation process we call the Secure Agent Readiness Assessment — SARA for short — that looks at five things:

- Security Architecture — what’s the privilege model, what are the isolation boundaries, how large is the attack surface?

- Compliance Alignment — can you produce the audit trails SOC 2, ISO 27001, and GDPR reviewers actually need?

- Observability Posture — how deep is the logging, is tracing available, does alerting integrate with your existing stack?

- Operational Scalability — how does it behave under load, and how complex is horizontal scaling?

- Ecosystem Maturity — how well-governed is the plugin/skill system, and is there a vendor you can actually hold accountable?

Every framework in this article has been assessed against these five dimensions. The goal isn’t to find a single winner; it’s to help you find the right fit for your specific situation.

You can also use this framework to assess your current OpenClaw deployment. If you’d like Dextralabs to run a formal SARA assessment on your stack, we offer that as a standalone engagement.

What Dextralabs Does Differently

Choosing the framework is the easy part. The harder work is everything that comes after, and this is where we see most teams get into trouble.

We’ve built a three-phase deployment process that we use with every enterprise AI agent engagement. It’s not proprietary magic. It’s just the result of watching enough things go wrong to know what matters.

Phase 1: Framework selection grounded in risk, not features

We apply the SARA assessment before any architecture decisions get made. That means mapping your regulatory obligations, your infrastructure maturity, and your operational risk tolerance against the actual capabilities of the candidate frameworks. The goal is to rule out the wrong choices before you’ve invested time in them.

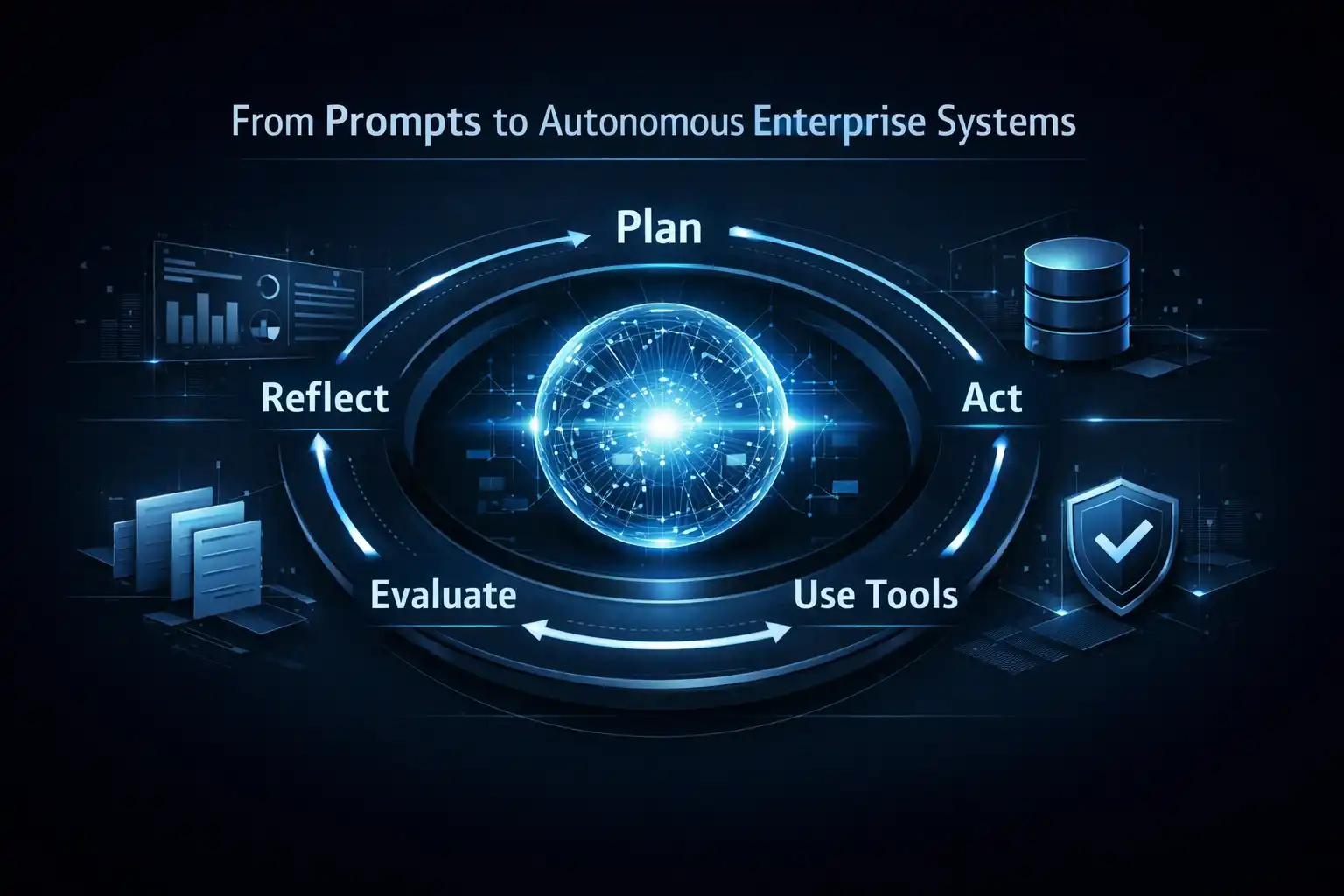

Phase 2: A layered security architecture, not a single control

Every Dextralabs AI agent deployment is built on five layers: an API gateway that controls inbound and outbound agent traffic, authentication middleware that validates every tool invocation, sandboxed execution environments that constrain what agents can actually do, centralized logging that creates a complete and auditable record, and continuous monitoring that can catch anomalous behavior before it becomes an incident.

No single layer is sufficient. The value is in the combination. Each layer catches failures the one above it missed.
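The five layers can be sketched as a chain of checks that a request must pass in order. This is a simplified Python illustration, not Dextralabs’ implementation: the tool name, the token set, and the in-memory log are hypothetical stand-ins for a real gateway, identity provider, sandbox, and logging backend.

```python
ALLOWED_TOOLS = {"lookup_order"}  # gateway allowlist (hypothetical)
VALID_TOKENS = {"demo-token"}     # stand-in for a real identity provider
audit_log = []                    # stand-in for centralized logging


class Rejected(Exception):
    """Raised by any layer that refuses a request."""


def gateway(request):
    # Layer 1: only known tools get past the gateway.
    if request["tool"] not in ALLOWED_TOOLS:
        raise Rejected("gateway: tool not on allowlist")


def authenticate(request):
    # Layer 2: every tool invocation must carry a valid credential.
    if request.get("token") not in VALID_TOKENS:
        raise Rejected("auth: invalid token")


def sandboxed_execute(request):
    # Layer 3: a real sandbox would be a container or restricted process,
    # not a plain function call.
    return {"order_id": request["args"]["order_id"], "status": "shipped"}


def anomalous(log, max_rejections=3):
    # Layer 5: monitoring stand-in that flags a pile-up of rejections.
    return sum(1 for entry in log if entry["outcome"] != "ok") >= max_rejections


def handle(request):
    outcome, result = "ok", None
    try:
        gateway(request)
        authenticate(request)
        result = sandboxed_execute(request)
    except Rejected as exc:
        outcome = str(exc)
    # Layer 4: log every request, including the ones that were refused.
    audit_log.append({"tool": request["tool"], "outcome": outcome})
    return result, anomalous(audit_log)


print(handle({"tool": "lookup_order", "token": "demo-token", "args": {"order_id": "A1"}}))
print(handle({"tool": "delete_db", "token": "demo-token", "args": {}}))
```

Note that the refused request still reaches the log and the monitor, so a failure caught at one layer is visible to the layers after it.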

Phase 3: Hybrid architecture where it makes sense

The most effective production stacks we’ve built combine a lightweight agent runtime with an external orchestration layer and purpose-built data connectors. This separation of concerns means you can swap components, scale independently, and audit clearly. It’s a more complex initial design, but it’s architecturally honest about what each piece is responsible for.

Is Your AI Agent Stack Ready for Production?

Dextra Labs’ Secure Agent Readiness Assessment (SARA) evaluates your OpenClaw or agentic AI deployment for security, compliance, scalability, and enterprise reliability — giving your team a clear roadmap before production.

So, Which One Should You Choose?

Honestly? It depends on a few things you know about your situation that no article can know for you.

If you’re in a regulated industry and your security team needs demonstrable isolation controls, start with NanoClaw. If you’re building automation that lives at the edge of your infrastructure on constrained hardware, PicoClaw was made for that. If you need to validate an idea quickly without a platform team, TrustClaw will get you there. If you want maximum auditability and the freedom to build exactly what you need, NanoBot with proper hardening is a serious option. And if you’re running complex enterprise workflows at scale and need something that won’t require rearchitecting six months from now, IronClaw is worth the setup investment.

The thing worth keeping in mind through all of this: lightweight doesn’t mean simple. The most effective production AI agent stacks we’ve seen aren’t the ones with the most features or the biggest ecosystems. They’re the ones where someone thought carefully about what the agent should and shouldn’t be able to do — and then built architecture that enforced those boundaries.

That thinking is the work. The framework choice is downstream of it.

Dextralabs helps organizations build AI agent systems that are ready for production, not just ready for demos. If you’re working through this decision and want a second perspective, we’re happy to talk.

FAQs:

Q. What is the best OpenClaw alternative for enterprise security in 2026?

For most regulated enterprises, NanoClaw is the strongest starting point. Its container-based isolation model gives your security team a concrete boundary to point to during audits, and it scores highest on security architecture and compliance alignment in Dextralabs’ SARA assessment. The catch is that it requires container expertise to implement correctly — misconfigured containers can undermine the very protections you’re trying to capture.

Q. Why are teams moving away from OpenClaw for production deployments?

OpenClaw’s design gives agents broad system access by default — filesystem, shell execution, browser control, email. That’s what makes it powerful for development. In production, the same access becomes a liability: expanded attack surface, prompt injection vulnerability, weak audit trails, and dependency sprawl. Teams aren’t abandoning the concept of autonomous agents; they’re looking for frameworks that were designed with production constraints in mind from the start.

Q. Which OpenClaw alternative is best for edge and IoT deployments?

PicoClaw, without much competition. Its Go-based runtime delivers a sub-10MB memory footprint and millisecond boot times. It was designed for exactly the kind of resource-constrained hardware you find at the edge of industrial infrastructure. Where it falls short is enterprise orchestration features: if you need compliance tooling or complex observability, you’ll be building that yourself.

Q. Can NanoBot be deployed in production without additional security work?

Not safely, no. NanoBot’s design is deliberately minimal and auditable, which is a genuine strength. But it ships without the security layers a production deployment needs: no authentication middleware, no sandboxed execution, no centralized logging. At Dextralabs, we deploy NanoBot with a five-layer security model on top. Without those layers, the risk profile is comparable to an unhardened OpenClaw deployment.

Q. What’s the practical difference between NanoClaw and IronClaw?

NanoClaw is focused on security isolation: the container model is the whole point, and everything else is secondary. IronClaw is focused on structured orchestration: pipeline-based workflows, reusable components, and enterprise-scale architecture. NanoClaw is the better choice when your primary concern is what the agent can’t do. IronClaw is the better choice when your primary concern is reliably orchestrating what the agent should do, at scale.

Q. Is TrustClaw appropriate for HIPAA or GDPR-regulated deployments?

This is genuinely situational. TrustClaw’s managed architecture limits your visibility into the underlying infrastructure, which is a problem for regulated deployments where you need to demonstrate control over data processing. Before committing, you’d want a Data Processing Agreement, clarity on data residency, and access to audit logs your compliance team will accept. If those answers are satisfactory, it may work. If they’re not, NanoClaw or IronClaw are more reliable choices for regulated environments.