Industry: Legal / Corporate M&A | Region: Singapore | Client Type: Mid-Size Law Firm (45 lawyers)

About the Client

The client is a well-established law firm in Singapore’s Central Business District with a strong corporate and cross-border M&A practice. They handle acquisitions, joint ventures and restructurings for clients operating across Southeast Asia, with a particular concentration in Singapore-India and Singapore-Indonesia deal corridors.

The firm had built its reputation on thoroughness. Partners prided themselves on catching issues that other firms missed. But that thoroughness came at a cost: their junior associates were spending the bulk of their time on document review rather than developing the analytical skills that would make them better lawyers.

The Problem

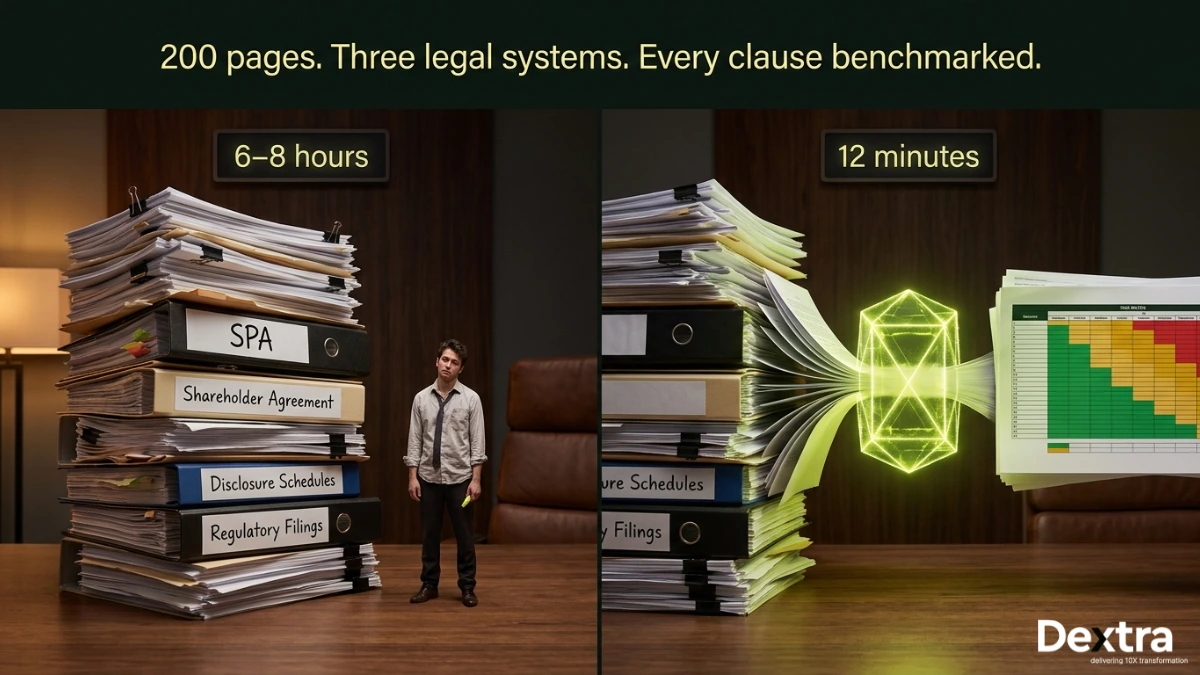

A typical cross-border M&A deal at the firm involved three to five core transaction documents: a Share Purchase Agreement (SPA), shareholder agreements, disclosure schedules, regulatory filings and sometimes side letters or escrow arrangements. Taken together, the document set for a single deal easily ran to 180 to 220 pages.

For every deal, a junior associate would read through the entire document set and build a clause extraction matrix: identifying all the indemnity provisions, representations and warranties, material adverse change clauses, non-compete provisions, governing law clauses and dozens of other standard and non-standard provisions. They’d then compare each clause against the firm’s internal playbook, a living document that captured the firm’s negotiation positions and risk thresholds for different clause types.

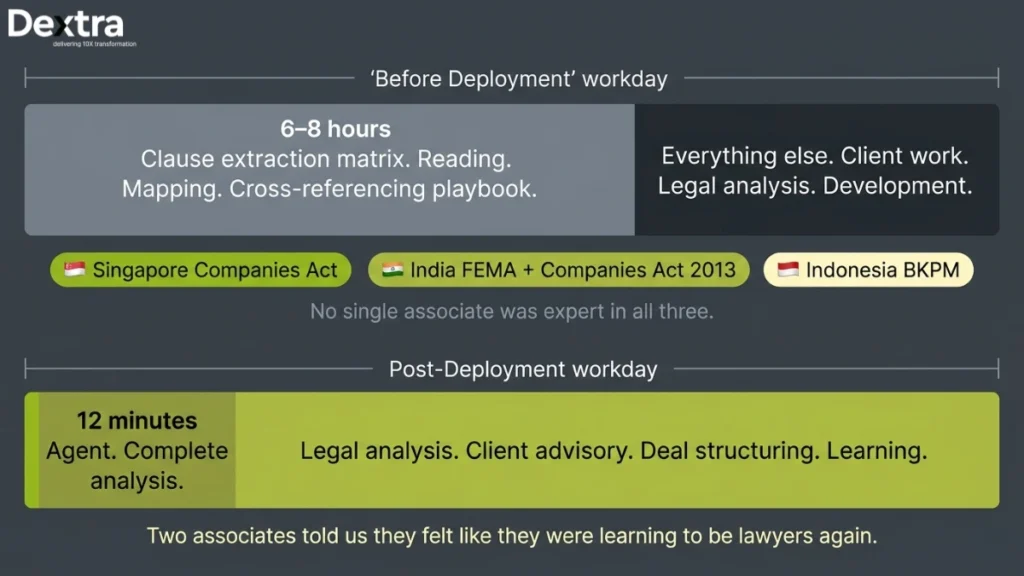

This process took 6 to 8 hours per deal. With deal volume up 40% in the past two years, the bottleneck was real. The firm was turning away work not because they lacked clients, but because they lacked the capacity to review documents fast enough. Hiring more associates was an option, but training them to the firm’s standard took 12–18 months.

The jurisdictional complexity made it worse. A Singapore-India deal meant the SPA had to be reviewed against Singapore’s Companies Act, India’s Companies Act 2013 and FEMA regulations. A Singapore-Indonesia deal brought in Indonesian Company Law and BKPM investment requirements. Associates had to understand the legal nuances of each jurisdiction to flag issues correctly, and no one person was an expert in all three.

What Dextralabs Built

Contract Review AI Agent with Jurisdiction-Aware Reasoning

At Dextra Labs, we started by building a contract review AI agent. The system begins with a layout-preserving PDF parser that handles the formatting inconsistencies common in legal documents: multi-column layouts, tables within tables, cross-references and defined terms that appear in bold or italics. The parser produces a structured representation of the document that maintains the relationships between sections, definitions and cross-references.
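As a rough illustration of what such a structured representation could look like, the sketch below models sections, defined terms and cross-references in plain Python. The names `Section`, `ParsedDocument` and `dangling_refs`, and the "Clause N.N" reference pattern, are assumptions made for this example; the case study does not describe the parser's actual schema.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Section:
    number: str                      # e.g. "7.2"
    heading: str
    text: str
    cross_refs: list = field(default_factory=list)


@dataclass
class ParsedDocument:
    title: str
    definitions: dict = field(default_factory=dict)   # defined term -> meaning
    sections: dict = field(default_factory=dict)      # section number -> Section

    def add_section(self, section: Section) -> None:
        # Record references such as "Clause 9.1" so later stages can follow them.
        section.cross_refs = re.findall(r"Clause (\d+(?:\.\d+)*)", section.text)
        self.sections[section.number] = section

    def dangling_refs(self) -> list:
        # Cross-references that point at a section this document does not contain.
        return [
            (s.number, ref)
            for s in self.sections.values()
            for ref in s.cross_refs
            if ref not in self.sections
        ]


doc = ParsedDocument(title="Share Purchase Agreement")
doc.definitions["Completion"] = "completion of the sale and purchase of the Shares"
doc.add_section(Section("7.2", "Indemnity",
                        "Subject to Clause 9.1 and Clause 12, the Seller shall indemnify the Buyer."))
doc.add_section(Section("9.1", "Limitations", "The cap applies to all claims."))
print(doc.dangling_refs())
```

Keeping cross-references as data rather than free text is what lets a later stage notice, for instance, that an indemnity refers to a limitation clause held in a different document of the deal set.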

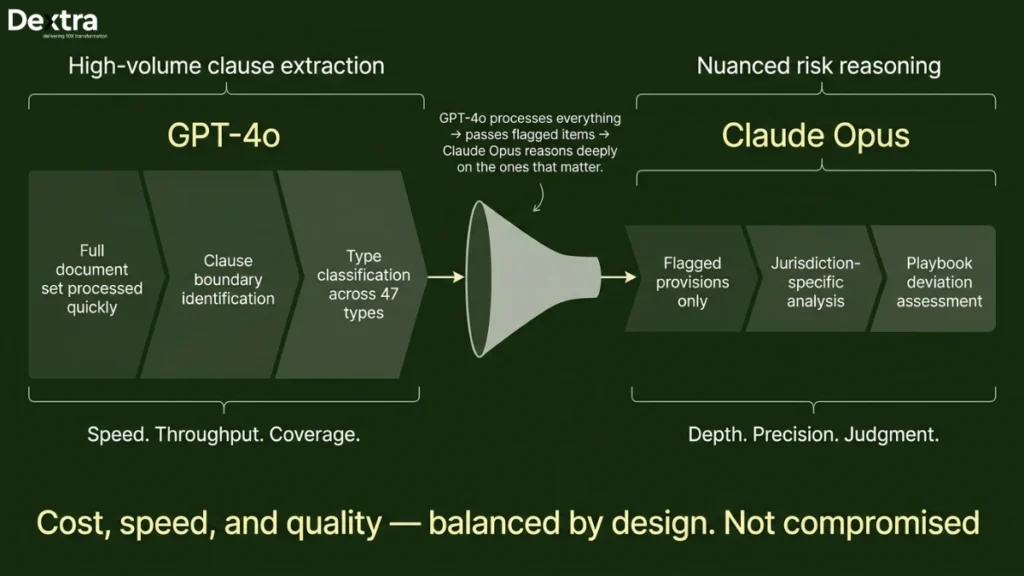

A clause extraction pipeline then identifies 47 standard clause types across three legal systems. This isn’t keyword matching. The AI agent understands that an indemnity clause in a Singapore SPA might be structured very differently from one in an Indian SPA and that Indonesian law imposes specific requirements on how non-compete provisions can be drafted. We built jurisdiction-specific clause taxonomies with distinct extraction rules and risk thresholds for each.
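One way to picture a jurisdiction-specific taxonomy is as a lookup keyed by jurisdiction and clause type, where each entry carries its own extraction guidance and review focus. The sketch below is an assumption about shape only: `ClauseRule`, `TAXONOMY`, `rule_for` and the entry wording are hypothetical placeholders, not the firm's taxonomy or statements of law.

```python
from dataclasses import dataclass
from enum import Enum


class Jurisdiction(Enum):
    SINGAPORE = "SG"
    INDIA = "IN"
    INDONESIA = "ID"


@dataclass(frozen=True)
class ClauseRule:
    clause_type: str
    extraction_hint: str   # guidance handed to the extraction model
    review_focus: str      # what the risk stage compares against the playbook


# Placeholder entries only; a full taxonomy would cover every clause type.
TAXONOMY = {
    (Jurisdiction.SINGAPORE, "non_compete"): ClauseRule(
        "non_compete",
        "Find post-completion restrictions on the seller's business activities.",
        "Duration and scope against the Singapore playbook position.",
    ),
    (Jurisdiction.INDIA, "non_compete"): ClauseRule(
        "non_compete",
        "Find post-completion restrictions on the seller's business activities.",
        "Duration and scope against the India playbook position.",
    ),
    (Jurisdiction.INDONESIA, "non_compete"): ClauseRule(
        "non_compete",
        "Find post-completion restrictions on the seller's business activities.",
        "Drafting requirements against the Indonesia playbook position.",
    ),
}


def rule_for(jurisdiction: Jurisdiction, clause_type: str) -> ClauseRule:
    """Return the jurisdiction-specific rule, failing loudly when none exists."""
    try:
        return TAXONOMY[(jurisdiction, clause_type)]
    except KeyError:
        raise LookupError(
            f"no rule for {clause_type!r} in {jurisdiction.value}"
        ) from None
```

Raising on a missing entry, rather than falling back to another jurisdiction's rule, keeps a gap in the taxonomy visible instead of silently applying the wrong standard.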

Playbook Benchmarking

This is where the system goes from useful to indispensable. We built a RAG pipeline that indexes the firm’s 500-page internal playbook. When the AI agent extracts a clause, it doesn’t just identify it; it benchmarks it against what the firm would typically accept in that jurisdiction. A non-compete lasting 36 months might be standard in Singapore but problematic under Indian law. The agent flags these jurisdiction-specific deviations with explanations grounded in the playbook.
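The retrieve-then-compare step can be sketched without any vector database. The example below swaps in a toy token-overlap search where a production pipeline would run embedding search, and every value in it is an assumption: the playbook references, the `max_months` limits (36 for Singapore, 12 for India) and the function names are invented for illustration and are neither the firm's positions nor statements of law.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PlaybookEntry:
    ref: str                    # hypothetical playbook reference
    jurisdiction: str
    text: str
    max_months: Optional[int]   # illustrative limit; None if not duration-based


PLAYBOOK = [
    PlaybookEntry("PB-SG-01", "SG", "non-compete restriction on the seller after completion", 36),
    PlaybookEntry("PB-SG-02", "SG", "governing law and dispute resolution forum", None),
    PlaybookEntry("PB-IN-01", "IN", "non-compete restriction on the seller after completion", 12),
    PlaybookEntry("PB-IN-02", "IN", "governing law and dispute resolution forum", None),
]


def _tokens(text: str) -> set:
    return set(text.lower().split())


def retrieve(jurisdiction: str, clause_text: str) -> PlaybookEntry:
    """Toy retrieval: best token overlap among entries for this jurisdiction."""
    candidates = [e for e in PLAYBOOK if e.jurisdiction == jurisdiction]
    if not candidates:
        raise LookupError(f"no playbook entries for {jurisdiction}")
    query = _tokens(clause_text)
    return max(candidates, key=lambda e: len(query & _tokens(e.text)))


def benchmark(jurisdiction: str, clause_text: str, duration_months: int) -> dict:
    """Compare an extracted duration with the retrieved playbook position."""
    entry = retrieve(jurisdiction, clause_text)
    deviates = entry.max_months is not None and duration_months > entry.max_months
    return {"playbook_ref": entry.ref, "deviates": deviates}


clause = "non-compete restriction of 36 months after completion"
sg_result = benchmark("SG", clause, 36)
in_result = benchmark("IN", clause, 36)
```

With these placeholder limits the same 36-month clause passes for Singapore and is flagged for India, and each result carries the playbook reference a reviewer would need to check the reasoning.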

Risk Matrix and Review Interface

The final output is a structured risk matrix that partners and senior associates can review in minutes. Each clause is tagged with its type, jurisdiction, risk level (green/amber/red), a summary of any deviation from the playbook and the specific playbook reference. Lawyers can annotate, override and approve directly in the interface. The entire analysis for a 200-page document set completes in roughly 12 minutes.
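The fields listed above map naturally onto a small record type. The following is a minimal sketch, assuming hypothetical names (`RiskRow`, `Risk`, `review_order`) and sample values; the real interface and storage format are not described here.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Risk(IntEnum):
    GREEN = 0
    AMBER = 1
    RED = 2


@dataclass
class RiskRow:
    clause_type: str
    jurisdiction: str
    risk: Risk
    deviation_summary: str
    playbook_ref: str
    override_note: Optional[str] = None
    approved: bool = False

    def override(self, risk: Risk, note: str) -> None:
        # A lawyer's judgement replaces the agent's rating; the note records why.
        self.risk = risk
        self.override_note = note

    def approve(self) -> None:
        self.approved = True


def review_order(rows):
    # Highest risk first, so reviewers see red items before amber and green.
    return sorted(rows, key=lambda r: r.risk, reverse=True)


# Sample rows with made-up content.
rows = [
    RiskRow("governing_law", "SG", Risk.GREEN, "Matches playbook position.", "PB-SG-02"),
    RiskRow("non_compete", "IN", Risk.RED, "Duration exceeds playbook position.", "PB-IN-01"),
    RiskRow("indemnity", "SG", Risk.AMBER, "Cap differs from playbook position.", "PB-SG-03"),
]
ordered = review_order(rows)
ordered[0].override(Risk.AMBER, "Client has accepted this duration on prior deals.")
ordered[0].approve()
```

Storing the override note next to the rating keeps a record of where a lawyer disagreed with the agent, which is also useful input when the playbook is next revised.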

Architecture and Technical Decisions

At Dextralabs, we used a dual-model architecture. GPT-4o handles the high-volume clause extraction work where speed matters: it processes the entire document set quickly and identifies clause boundaries and types. Claude 3 Opus handles the nuanced risk reasoning on flagged provisions, where analytical depth matters more than throughput. This split lets us balance cost, speed and quality.
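The routing idea behind the split can be shown with the model calls abstracted away. In the sketch below, `extract` and `reason` are plain callables standing in for the fast and the deep model respectively; the function names, the dictionary shape and the flagging rule are assumptions for illustration, and no real model API is called.

```python
def review_document(chunks, extract, needs_reasoning, reason):
    """Run the fast model on every chunk and the deep model on flagged clauses only."""
    rows = []
    for chunk in chunks:
        clause = extract(chunk)                       # fast model: every chunk
        if needs_reasoning(clause):
            # Deep model: only provisions the first pass flagged.
            clause = {**clause, "risk_note": reason(clause)}
        rows.append(clause)
    return rows


# Stub models so the routing can be exercised without network access.
reason_calls = []


def fake_extract(chunk):
    clause_type = "non_compete" if "compete" in chunk else "boilerplate"
    return {"text": chunk, "type": clause_type}


def fake_reason(clause):
    reason_calls.append(clause["text"])
    return "needs partner review"


rows = review_document(
    ["The Seller shall not compete with the Business.", "Notices shall be in writing."],
    fake_extract,
    lambda clause: clause["type"] != "boilerplate",
    fake_reason,
)
```

Because the expensive call sits behind `needs_reasoning`, cost scales with the number of flagged provisions rather than with page count.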

The system is deployed on AWS in the ap-southeast-1 (Singapore) region. All document data is encrypted at rest with customer-managed keys. The deployment is compliant with Singapore’s PDPA and follows IMDA’s Model AI Governance Framework. The firm had specific concerns about client confidentiality, so we built the system with no data retention: documents are processed and discarded, and only the extracted analysis persists, stored in the firm’s own infrastructure.
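A process-and-discard pattern can be sketched with the standard library alone. This is an assumption about one possible approach, not a description of the deployed system: `analyse_without_retention` and the `analyse` callback are hypothetical names, and the sketch covers only the working copy on disk, not logs, caches or copies held by upstream services.

```python
import tempfile
from pathlib import Path


def analyse_without_retention(pdf_bytes: bytes, analyse) -> dict:
    """Write the upload to a scratch directory, analyse it, then delete it."""
    with tempfile.TemporaryDirectory() as workdir:
        path = Path(workdir) / "upload.pdf"
        path.write_bytes(pdf_bytes)
        analysis = analyse(path)
    # The scratch directory and the document are gone once the block exits;
    # only the extracted analysis is returned to the caller.
    return analysis
```

The caller is then free to store the returned analysis wherever the firm requires, while the source document never outlives the function call.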

Tech Stack

GPT-4o · Claude 3 Opus · LlamaIndex · Qdrant · AWS ap-southeast-1 · Next.js · Python FastAPI

Results

| Metric | Result |
| --- | --- |
| Full contract analysis | 12 min (was 6–8 hrs) |
| Associate hours saved in first 6 months | 3,400+ |
| Clause types across 3 jurisdictions | 47 |
| Extraction accuracy (partner-verified) | 94.7% |

In the first six months, the system saved over 3,400 associate hours. Those hours didn’t just disappear from the payroll; associates redirected that time into legal analysis, client advisory work and deal structuring. Two associates told us they felt like they were actually learning to be lawyers again instead of functioning as expensive document reviewers.

The firm took on 30% more deal volume in the six months after deployment without adding headcount. Partners reported that the risk matrices caught cross-jurisdictional conflicts that would previously have surfaced late in negotiations, shortening deal timelines by an estimated 5–7 days on complex transactions.

Accuracy was validated by having three senior partners independently review the agent’s output on 40 historical deals. The extraction accuracy across all clause types and jurisdictions was 94.7%, with most misses occurring in highly non-standard bespoke provisions rather than in the standard clauses that represent 90% of the review workload.

“Our associates used to dread the clause extraction phase. Now the agent does in 12 minutes what took half a day and it catches cross-jurisdictional conflicts we’d sometimes miss until the eleventh hour.”

— Managing Partner, Corporate M&A Practice, Singapore

Engagement Timeline

11 weeks from discovery to pilot deployment with three live deals:

Weeks 1–2: discovery sessions with partners and associates, playbook analysis and jurisdiction taxonomy design.

Weeks 3–6: document parser and clause extraction pipeline development.

Weeks 5–8: RAG pipeline over internal playbook and risk reasoning development.

Weeks 9–10: integration testing with historical deal documents.

Week 11: live pilot on three concurrent deals with partner oversight.

Running a legal practice that’s capacity-constrained by document review?

Let’s talk about how AI agents can give your lawyers their time back.

👉 Book a 30-min call for AI agent development services