As a CTO today, you’re balancing innovation with stability. On one side, the pressure to integrate generative AI into products and operations is relentless. On the other hand, the ecosystem of LLM tools is fragmented, noisy, and filled with both proven and untested solutions.

In the business world, large language models, or LLMs, have evolved from being unique to essential. LLMs are now vital for mainstream AI systems, whether they are enabling chatbots, copilots, or intelligent search experiences. However, successfully managing these models has gotten increasingly difficult as they get more potent and intricate.

Enter LLMOps tools, a category of platforms and practices designed to manage the entire lifecycle of LLM applications. From fine-tuning and evaluation to deployment and monitoring, LLM platforms ensure that your AI apps are not only functional but also scalable, secure, and compliant.

At Dextra Labs, we work closely with organizations to integrate cutting-edge LLMOps platforms into real-world production systems. In this guide, we’re highlighting the 15 essential LLMOps tools that are leading the way in 2025 for scalable, reliable, and efficient language model AI platforms.

The truth is: choosing the right stack isn’t just about speed, it’s about long-term viability, security, compliance, and cost control. Below, I’ve broken down the key LLM tools by category, with features, use cases, pros, and cons — all from a CTO’s perspective.

What is LLMOps?

LLMOps stands for Large Language Model Operations. Similar to MLOps, it refers to the tools, techniques, and best practices used to develop, deploy, evaluate, and monitor LLM-based systems.

As LLMs become central to business-critical applications, organizations face unique challenges:

- Scalability: How do you efficiently run multi-billion parameter models in production?

- Evaluation: How do you measure hallucinations, factuality, and performance?

- Deployment: How do you serve models in real-time with low latency?

- Monitoring: How do you track drift, failures, or prompt effectiveness?

- Compliance: How do you ensure responsible AI usage with governance, bias checks, and auditability?

LLMOps tools aim to solve these problems, individually or end-to-end.

Here are the top LLMOps for AI Application Development:

API Access – “Fast-Track to Powerful Models”

Skip infrastructure and tap directly into state-of-the-art, powerful models. Perfect for fast pilots or teams who prioritize time-to-market over deep customization.

1. OpenAI API

What it’s for: It has plug-and-play access to GPT models for copilots, chatbots, and automation.

Features: Pre-trained GPT models, embeddings, fine-tuning, and Azure integrations.

Use Cases: Enterprise copilots, customer support bots, and workflow automation.

Pros: Enterprise-ready, fast time-to-market, with strong documentation.

Cons: It had vendor lock-in with limited control, and its costs rise sharply with scale.

What it’s for: Access to Claude, designed with safety and compliance as first principles.

Features: Safety-first guardrails, strong reasoning ability.

Use Cases: Regulated industries (finance, healthcare, legal).

Pros: Compliance-friendly, safe defaults.

Cons: Smaller ecosystem, evolving support, less feature-rich than OpenAI.

Fine-Tuning Frameworks – “Make Models Your Own”

When off-the-shelf models aren’t enough, these tools let you tailor LLMs to your domain, data, and tone.

3. Transformers (Hugging Face)

What it’s for: The gold standard library for training and customizing open-source LLMs.

Features: 100k+ models, multi-framework compatibility, vibrant community.

Use Cases: Domain-specific copilots, classification, internal assistants.

Pros: Most widely adopted, flexible, strong ecosystem.

Cons: GPU-hungry, requires ML engineers to manage complexity.

4. Unsloth AI

What it’s for: Cost-efficient fine-tuning llm for teams that want performance without massive infra spend.

Features: Optimized LoRA/QLoRA workflows, model export (vLLM, GGUF).

Use Cases: SMEs or teams testing tailored copilots and niche models.

Pros: Faster, cheaper, infra-light.

Cons: New player, smaller enterprise footprint, long-term support unknown.

Experiment Tracking – “Know What Worked, and Why”

As models evolve, experiment tracking ensures your team isn’t flying blind.

What it’s for: A command center for monitoring every experiment, dataset, and model version.

Features: Dashboards, lineage tracking, audit trails.

Use Cases: Enterprises running AI labs or multiple model experiments.

Pros: Industry standard, excellent collaboration tooling.

Cons: Expensive at enterprise scale, data residency challenges in regulated industries.

LLM Integration Ecosystem – “From Model to Application”

Frameworks to turn raw LLM power into actual business workflows.

6. LangChain

What it’s for: The most popular orchestration framework for chaining LLMs with tools and APIs.

Features: RAG workflows, agents, connectors, and observability via LangSmith.

Use Cases: Building copilots, assistants, and multi-step workflows.

Pros: Rich ecosystem, huge developer adoption.

Cons: Abstraction overhead, fragmented ecosystem, upsells for advanced features.

7. LlamaIndex

What it’s for: Lightweight alternative to LangChain, especially for RAG-focused apps.

Features: Data ingestion, indexing, and retrieval APIs.

Use Cases: Internal knowledge search, smaller-scale copilots.

Pros: Developer-friendly, easy to prototype.

Cons: Less enterprise-grade scaling and observability.

Vector Search Tools – “The Memory Layer for LLMs”

LLMs need a memory layer to retrieve facts. These databases make that possible at scale.

8. Chroma

What it’s for: Lightweight, open-source vector database for quick prototyping.

Features: Python-native, minimal setup.

Use Cases: Small-scale assistants, POCs.

Pros: Easy and fast to start.

Cons: Weak scaling and uptime guarantees for enterprise workloads.

9. Qdrant

What it’s for: High-performance, production-ready vector DB.

Features: Rust backend, hybrid deployment (cloud, on-prem, hybrid).

Use Cases: Enterprise-grade RAG, recommendations, AI-powered search.

Pros: High performance, flexible deployment.

Cons: Requires infra expertise, steeper learning curve.

Model Serving Engines – “From GPU to End User”

Efficient serving frameworks ensure your models run fast and cost-effectively.

10. vLLM

What it’s for: Ultra-efficient inference engine that lowers latency and infra cost.

Features: PagedAttention batching, optimized GPU usage.

Use Cases: High-traffic AI apps, latency-sensitive workloads.

Pros: Infrastructure cost savings, great efficiency.

Cons: Ecosystem still maturing, integration overhead.

11. BentoML

What it’s for: Flexible framework for packaging and serving ML models.

Features: Deployment pipelines, observability, “bentos” packaging.

Use Cases: Enterprises standardizing model → production handoff.

Pros: Flexible, scales well across teams.

Cons: Steeper learning curve, DevOps heavy.

Deployment Services – “Managed AI Without the Headaches”

For teams that want LLMs in production but don’t want to manage infrastructure.

12. Hugging Face Inference Endpoints

What it’s for: Managed deployment for OSS models with autoscaling included.

Features: One-click deploy, monitoring, Hugging Face ecosystem tie-in.

Use Cases: Teams deploying OSS models quickly.

Pros: Fast, simple, reliable.

Cons: Vendor lock-in, limited customization.

13. Anyscale

What it’s for: Large-scale AI infrastructure based on Ray.

Features: End-to-end training, serving, monitoring.

Use Cases: Enterprises with large distributed AI workloads.

Pros: Enterprise-grade scalability, full-stack support.

Cons: Complex setup, requires Ray expertise.

Observability & Monitoring – “Trust, but Verify”

Because in production, models need more than performance — they need governance and guardrails.

14. Evidently

What it’s for: Open-source monitoring for data and model drift.

Features: Drift reports, CI/CD integration.

Use Cases: Early-stage teams, smaller production AI apps.

Pros: Free, extensible, flexible.

Cons: DIY dashboards, not enterprise-ready.

15. Fiddler AI

What it’s for: Enterprise observability with compliance in mind.

Features: Bias detection, explainability, alerts, governance dashboards.

Use Cases: Banking, insurance, healthcare, other regulated spaces.

Pros: Compliance-ready, strong explainability tooling.

Cons: Expensive, heavy integration effort.

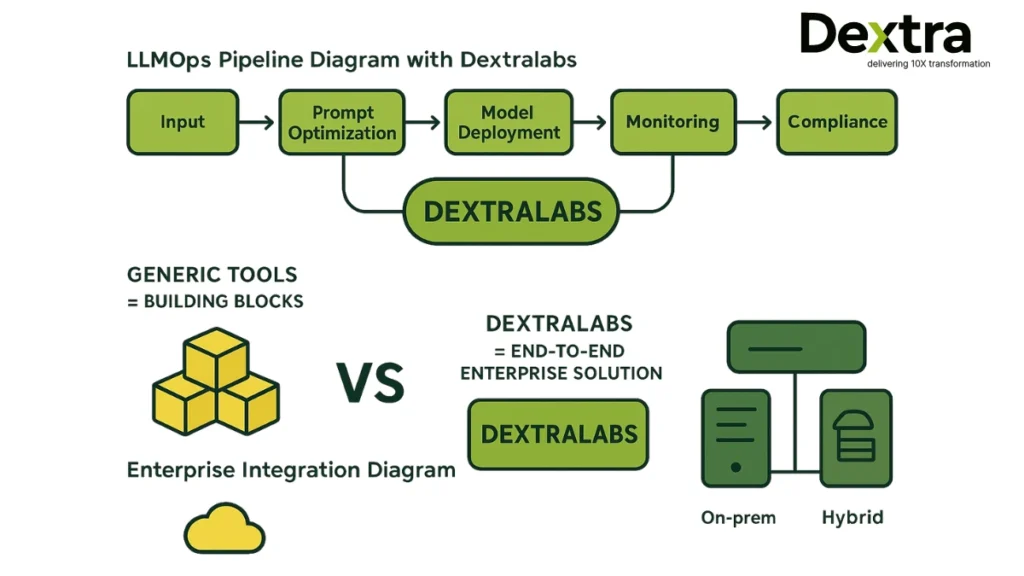

Beyond Tools: Expert LLMOps Solutions with Dextralabs

Many of the listed technologies are already enterprise-grade and production-ready; however, rather than addressing the problem from start to finish, the majority of tools are better at fine-tuning, deploying, and monitoring certain aspects of the LLM lifecycle. In order to integrate these technologies into a scalable, secure, and compliant LLMOps stack, enterprises frequently require specialized designs, infrastructure methods, and integration frameworks.

And that’s where DextraLabs comes in. By creating end-to-end LLMOps solutions that integrate the finest features of these platforms with specialized infrastructure, governance, and performance optimization targeted to your sector and regulatory environment, we assist businesses in bridging this gap.

That’s where Dextra Labs comes in.

What We Offer:

- Enterprise-grade LLM Deployment – On-prem, hybrid, or cloud-native setups tailored to compliance needs.

- LLM Evaluation Frameworks – Measure accuracy, hallucination rates, safety, and fairness.

- Prompt Engineering & Optimization – Reduce costs and boost effectiveness with refined prompt strategies.

- Custom LLMOps Pipelines – Monitoring, drift detection, observability, governance, and auditability built-in.

Why Choose Dextra Labs?

- Trusted by leading enterprises in Singapore and beyond

- Proven expertise in LLMOps, AI agents, and production-grade systems

- End-to-end support: from model selection to deployment and optimization

Final Thoughts

The LLMOps space in 2025 is vibrant, with powerful tools at every layer of the stack. But selecting the right ones, and stitching them together into a reliable pipeline, requires strategic thinking and enterprise context.

From a CTO’s perspective, every tool is a trade-off:

- OpenAI vs Anthropic: Speed vs governance.

- Chroma vs Qdrant: Ease of use vs scalability.

- Evidently vs Fiddler: Open-source flexibility vs enterprise compliance.

At Dextralabs, we don’t just help you pick the right tools—we help you operationalize them into production systems.

Ready to go beyond experimentation? Reach out to Dextralabs and let’s build something powerful together.

FAQs on top LLMOps:

Q. Where can practitioners track the evolving LLMOps landscape?

Curated lists like Awesome-LLMOps and periodic market scans from industry analysts aggregate active tools, features, and categories to inform selection. Vendor blogs and comparison posts (e.g., TrueFoundry, integrators) provide practical overviews and integration tips aligned to common enterprise needs.

Q. What are common pitfalls when scaling LLM apps?

Underinvesting in evaluation leads to silent regressions; make pre-release gates with offline eval suites and canary monitors standard. Ignoring cost/latency observability causes budget overruns and poor UX; ensure dashboards and alerts on token spend, p95 latency, and error rates are in place.

Q. How should an enterprise choose among platforms vs. assembling best-of-breed?

Platforms reduce integration overhead and centralize governance/monitoring, ideal for regulated environments or large teams; examples include Databricks and TrueFoundry. Best-of-breed stacks offer flexibility and cutting-edge features but need stronger engineering investment in glue code, observability, and CICD alignment.

Q. How do we evaluate LLM quality beyond accuracy?

LLMOps stacks use a mix of automated metrics (e.g., BLEU/ROUGE for certain tasks), rubric- or model-graded evals, and human-in-the-loop reviews to assess relevance, safety, faithfulness, and latency. Platforms such as Comet and LangSmith-integrated pipelines support side-by-side comparisons and dataset-level evaluations to monitor regressions before release.

What’s the role of vector stores and data/versioning in LLMOps?

Versioned data lakes and vector databases underpin reliable RAG, dataset lineage, and rollback; Deep Lake blends vector search with dataset versioning for LLM workflows. Data-centric approaches (e.g., Snorkel) help programmatically label and curate datasets, improving LLM fine-tuning and retrieval quality over time.